Ready to improve your music discovery workflows? Try Similarity Search in Cyanite.

Music recommendation systems tend to favor tracks that are already popular. This means they reinforce existing visibility rather than surfacing the best possible match.

For artists, getting their music on a platform is no longer the biggest challenge. It’s being findable.

Do smaller artists really have a fair shot at being discovered if they don’t have a big music label backing them? On most music platforms, the honest answer is no, and the infrastructure of discovery is a big part of the reason why.

Popularity bias in collaborative filtering, which recommends tracks based on what other listeners have already played, is one of the main reasons many catalogs fail to unlock the full value of the music they already have.

AI tagging has the potential to turn this around. Sound-based discovery evaluates what a track actually sounds like, not its existing popularity. But getting this right takes deliberate work: building models that don’t encode existing biases, and layering contextual metadata to give platforms the tools to surface the artists that matter to their clients and communities.

Why standard discovery logic favors already-known artists

Most music platforms rely on a combination of popularity signals, manual editorial tagging, and keyword search to surface music. That may sound reasonable on the surface, but in practice, it creates a system that consistently favors what is already known.

- Popularity-based signals like plays, engagement, and saves are inherently self-reinforcing. Tracks that have historically been surfaced more often are more likely to be surfaced again. A track from an established artist with years of placement history will surface in recommendations far more reliably than an equally strong track from a newer or lesser-known creator. Basically, your friend Katie’s latest release stands no chance against Taylor Swift.

- Manual tagging is time-consuming and doesn’t scale, so it actually deepens the problem. When a platform’s editorial team has limited bandwidth, that bandwidth tends to go to priority artists: those with more commercial history or more recent activity. Newer or smaller artists often get less attention, and therefore less metadata depth and, ultimately, less visibility.
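The self-reinforcing effect of popularity signals can be shown with a deliberately simplified simulation. This is a toy model built on stated assumptions and does not describe how any particular platform ranks music. It assumes one recommendation slot per round and ranking by historical play count alone. It also assumes that every recommendation turns into exactly one new play. The track titles and play counts are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Track:
    title: str
    plays: int  # hypothetical historical play count


def simulate_popularity_ranking(tracks: list[Track], rounds: int, slots: int = 1) -> dict[str, int]:
    """Toy model of a popularity-ranked recommender.

    Illustrative assumptions: each round the `slots` most-played tracks are
    recommended, and each recommendation adds exactly one play.
    Note: this mutates the `plays` field of the tracks passed in.
    """
    times_surfaced = {t.title: 0 for t in tracks}
    for _ in range(rounds):
        # Rank purely by historical plays; sorted() is stable, so ties keep input order.
        ranked = sorted(tracks, key=lambda t: t.plays, reverse=True)
        for track in ranked[:slots]:
            times_surfaced[track.title] += 1
            track.plays += 1  # exposure feeds straight back into the ranking signal
    return times_surfaced


catalog = [Track("established_hit", plays=10_000), Track("new_release", plays=12)]
print(simulate_popularity_ranking(catalog, rounds=100))
# {'established_hit': 100, 'new_release': 0}
```

The new release never gets a slot, regardless of how it sounds. The only input to the ranking is the play count, and the ranking itself keeps inflating that number.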

Fixing this starts with changing how music is described and retrieved at the infrastructure level, not with better playlists or more editorial effort.

This is where sound-based AI tagging and Similarity Search offer a level playing field. Rather than asking who has the most plays or when a track was last touched by an editor, they ask a simpler question: what does this music actually sound like?

This is exactly the problem Cyanite was built to solve: creating a discovery layer where music is evaluated based on its sound, not its history.

A track from an artist who joined the catalog three years ago and a track onboarded last month are evaluated and described consistently, using the same logic. Both are equally visible to the same searches, so popularity no longer decides what surfaces.

But this only works if the underlying models are built and maintained carefully, because AI doesn’t automatically remove bias. In many cases, it can reinforce it.

Potential biases in AI music tagging

At this point, it’s tempting to assume that AI automatically creates a fairer system. If every track is analyzed objectively, shouldn’t discovery become neutral by default? Not necessarily.

If the AI used for music tagging was trained predominantly on certain types of music, it can quietly encode the biases already present in the industry. We know this firsthand: we found it when we looked at our own models at Cyanite.

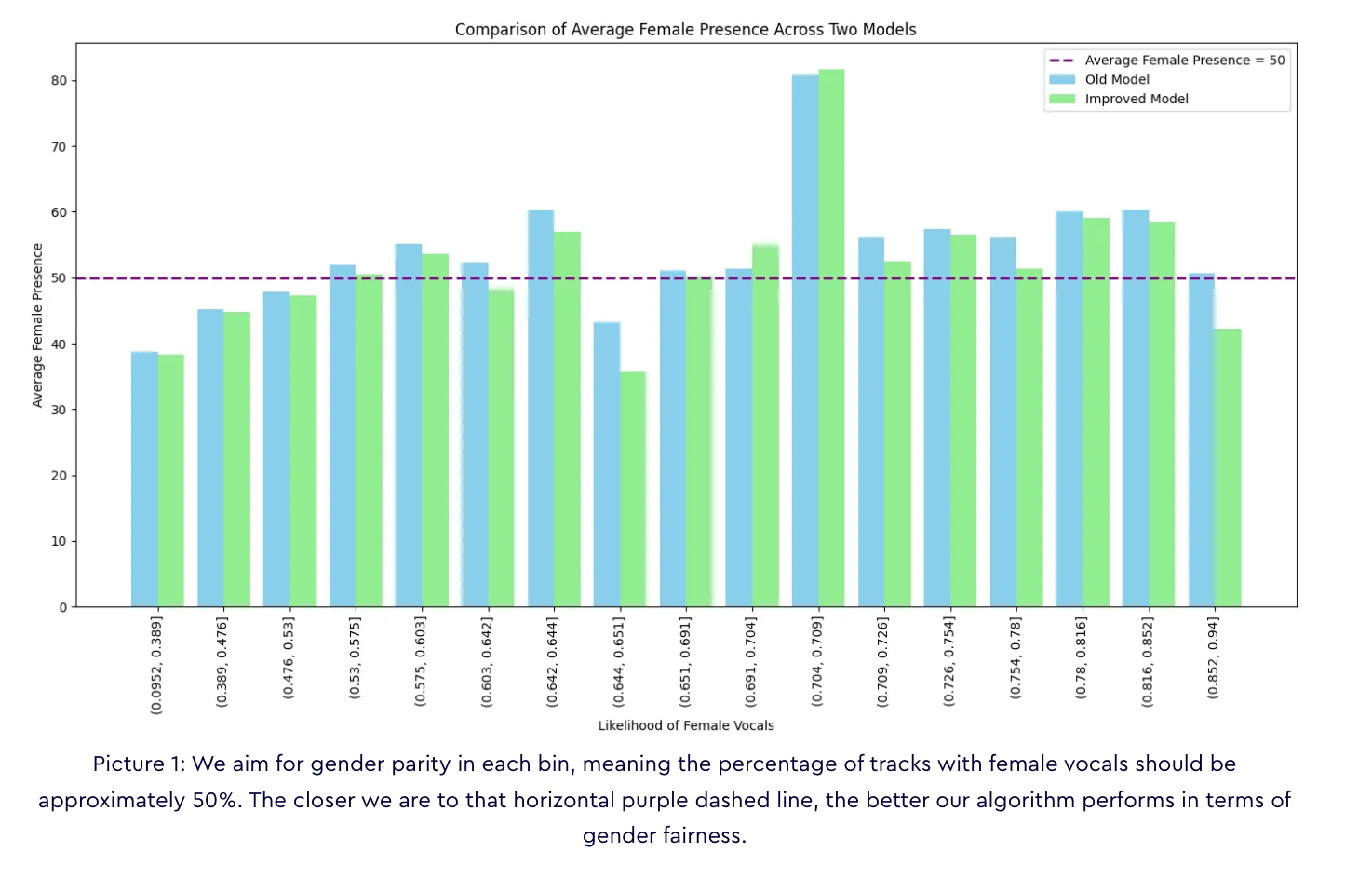

We evaluated how the system represented artists of different genders by creating a baseline model that predicted the likelihood of female vocals being recognized based solely on genre and instrumentation. In male-dominated genres, the baseline model significantly underestimated the presence of female vocals. The model had learned from patterns in the training data that reflected existing structural imbalances.

AI isn’t inherently bad, and it doesn’t replicate bias intentionally. The trouble is that it doesn’t know it’s doing it. If the data it learns from reflects a world in which certain artists are already underrepresented, the model will carry that imbalance forward and amplify it across the entire catalog.

This is why model quality and regular auditing matter as much as the decision to use AI in the first place.

Read all our findings here: AI music search algorithms: gender bias or balance?

Fairer AI models are a start, but they’re not enough on their own

Our response to the gender representation findings combined regular model audits with targeted updates. We launched a new Similarity Search, which achieved a female vocal presence of 51% across all propensity score bins. These bins are groups of tracks sorted by how likely the baseline model predicted female vocals to be. The model is now designed to maintain balanced representation across the board, not just in genres where female artists are already well-represented.
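A per-bin audit of this kind can be sketched as follows. This is a minimal illustration, not Cyanite’s evaluation code. It assumes each search result already carries two values:

- the baseline model’s propensity score, meaning the predicted likelihood of female vocals, between 0 and 1
- a flag for whether female vocals are actually present

Results are grouped into equal-width propensity bins, and the sketch computes the share of results with female vocals in each bin. A model that only surfaces female vocalists where genre and instrumentation already predict them would show low shares in the low-propensity bins. The bin count, the 0.4 floor and the sample data are hypothetical choices.

```python
from collections import defaultdict


def female_vocal_share_by_bin(results: list[tuple[float, bool]], n_bins: int = 5) -> dict[int, float]:
    """Share of results with female vocals in each propensity-score bin.

    `results` holds (propensity, has_female_vocals) pairs, with propensity in [0, 1].
    Bins are equal-width; bin 0 covers the lowest predicted likelihood.
    """
    counts = defaultdict(lambda: [0, 0])  # bin index -> [female count, total count]
    for propensity, has_female_vocals in results:
        bin_index = min(int(propensity * n_bins), n_bins - 1)  # a score of 1.0 goes in the top bin
        counts[bin_index][0] += int(has_female_vocals)
        counts[bin_index][1] += 1
    return {b: female / total for b, (female, total) in sorted(counts.items())}


def underrepresented_bins(shares: dict[int, float], floor: float = 0.4) -> list[int]:
    """Bins whose female-vocal share falls below a chosen (hypothetical) floor."""
    return [b for b, share in shares.items() if share < floor]


# Made-up results: female vocals are rare where the baseline predicted them to be unlikely.
sample = [(0.05, False), (0.1, False), (0.15, True), (0.12, False), (0.9, True), (0.95, True)]
shares = female_vocal_share_by_bin(sample)
print(shares)                         # {0: 0.25, 4: 1.0}
print(underrepresented_bins(shares))  # [0]
```

Bins with no results are left out of the output. How many bins to use, and what share counts as underrepresented, are judgment calls for each catalog.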

When the model is built and maintained well, AI tagging becomes a genuinely leveling force. Every track gets a fair description. Every search evaluates sound rather than status. And the catalog’s hidden depth becomes accessible to anyone with a brief.

But some platforms want to go further and actively surface specific artists, such as local, independent, or underrepresented creators. They may have clients with diversity mandates, broadcaster obligations to support independent music, or brand briefs that specifically call for underrepresented voices. An ethical AI model won’t do any of that on its own. It can create the conditions for fairer discovery, but it won’t define what that discovery looks like for any specific catalog or community. That requires a different layer.

The real role of contextual metadata

Sound-based tagging tells you what a track sounds like. It can describe mood, energy, instrumentation, tempo, and emotional arc with a level of consistency and speed that no manual process can match. However, some questions can’t be answered through sonic analysis alone. Who made the track? Where are they from? Are they an independent artist?

That’s what contextual metadata is for.

Custom tags around artist origin and identity give platforms deliberate control over what gets surfaced. A platform can use them to translate its editorial values, or its client’s values, into something a user can actually filter by. They also shift discovery from a passive output of the algorithm to an active expression of what the platform stands for.


The demand for this kind of contextual layer is real, and it’s growing.

In a joint study with MediaTracks and Marmoset, we surveyed 144 music licensing professionals, including music supervisors, filmmakers, advertisers, and producers. Of those respondents, 97% said they want AI-generated music to be clearly labeled. Transparency is becoming a determining factor in music search.

Check out the full study here: Why AI labels and metadata now matter in licensing

Professionals also rely on origin details and creator context to navigate briefs and explain their track selections to clients. The context behind a song is a core part of how licensing decisions are made. As a result, contextual filtering is becoming increasingly essential for platforms that want to enable smarter discovery and better brief alignment. The shift is already visible in the kinds of requests platforms receive:

- Content requirements from broadcasters and government-funded media asking for local artists

- Diversity briefs from brands and agencies that need to demonstrate commitment to independent or underrepresented artists

- Publisher and library mandates to surface specific communities within a catalog

- Music supervisors needing to justify selections to clients based on creator background or origin

In some cases, platforms also need to verify certain aspects of that context.

As AI-generated music becomes more prevalent, being able to distinguish between human-made and AI-generated tracks can add an additional layer of transparency. This is especially useful for platforms working with specific labeling requirements or client expectations.

What fair music discovery looks like in practice

The combination of AI search and contextual filters is what makes fairer discovery scalable. Two of our partners show how this plays out across different dimensions of the same problem.

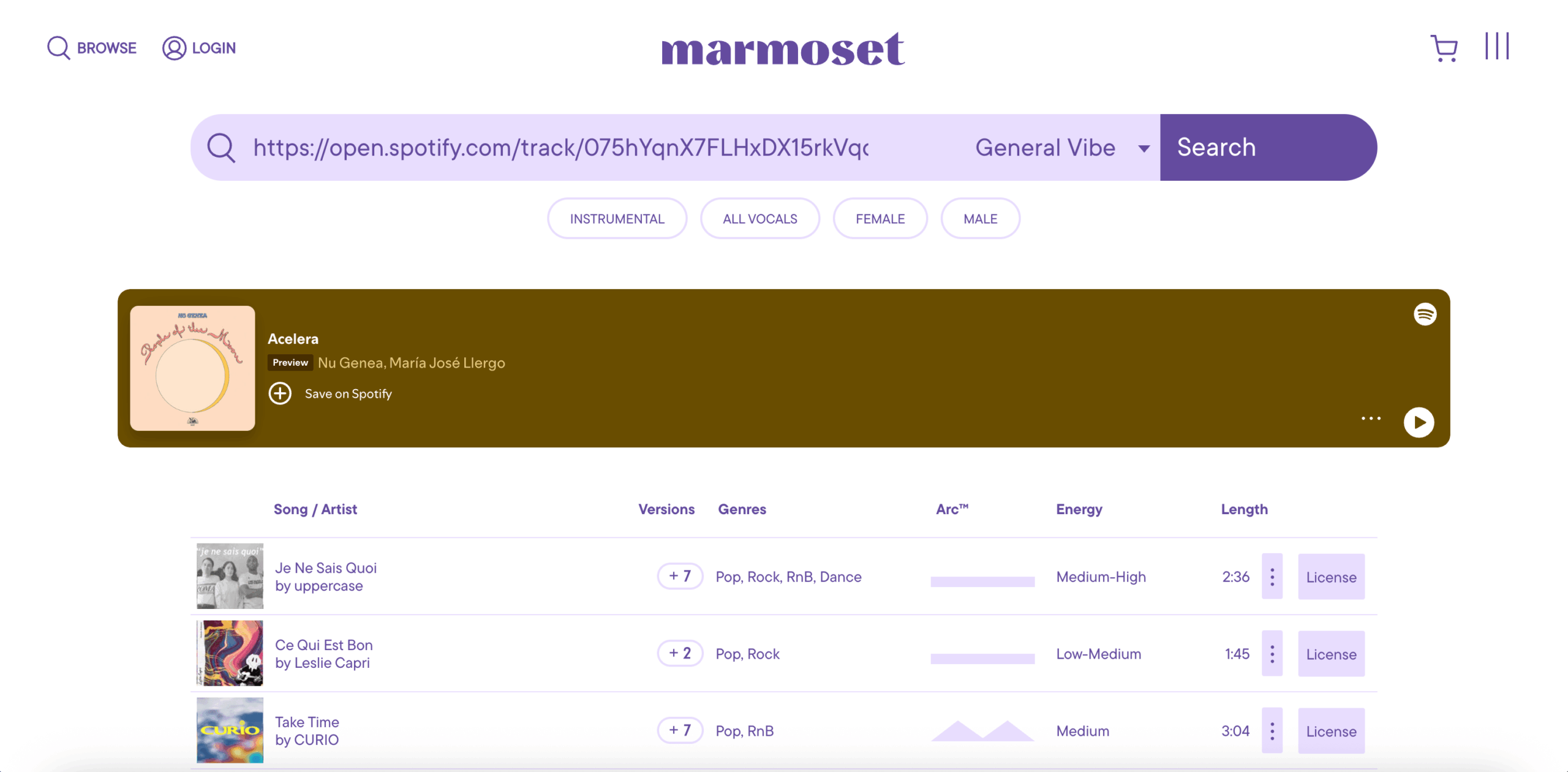

Marmoset: sound-based fairness in action

Marmoset is a full-service music licensing agency. In 2019, it became the world’s first certified B Corporation music agency. Their catalog represents hundreds of independent artists and labels. Getting those artists found fairly and consistently, without depending on their popularity history, is central to Marmoset’s mission.

Before integrating Cyanite, Marmoset faced a familiar challenge: a growing catalog, limited bandwidth for manual tagging, and a discovery process that, despite their best intentions, tended to favor artists who had been in the system for longer.

Cyanite’s AI Auto-Tagging gave every track in the catalog a consistent metadata foundation, regardless of when it was added or how frequently it had been licensed.

Similarity Search took that further. As Alex Paguirigan, product manager at Marmoset, put it: “Using Cyanite, we provided a fairer game for our artists to play. At the end of the day, that means more money in their pockets.”

The key mechanism here is that Similarity Search surfaces tracks based on sonic match, not play counts, recency, or editorial prioritization. “The AI doesn’t care if you’re Ed Sheeran or a bedroom producer. If your track fits the reference, it will surface it,” as Jakob, one of our founders, likes to say.

Melodie: what contextual metadata can actually do

Where Marmoset demonstrates fairness through sound-based discovery, Melodie demonstrates how contextual metadata can surface artists by their identity and origin, a different dimension of the same goal.

Melodie is a music licensing platform based in Australia and built on a 50/50 revenue split with artists. It’s curated entirely by hand for quality and emotional resonance. As the catalog grew, the team needed a way to help clients navigate it at speed without losing the editorial integrity that already defined the platform.

Cyanite’s Similarity Search and Free Text Search handled the sonic layer. But what made Melodie’s approach distinctive is how they combined AI search results with contextual filters using Advanced Search.

Their unique spin was including the “Show Australian artists only” tag. For Australian broadcasters, brands, agencies, and government bodies, supporting local artists is often a conscious mandate, and Melodie wanted to make this frictionless.

For a manager looking for a song to match their brief, the workflow in Melodie might look like this:

- Using Similarity Search to find tracks that match the vibe of a reference song they had in mind

- Applying the “Show Australian artists only” filter to narrow those results to local creators

Within seconds, they’re listening to tracks that fit both the creative brief and their mandate to support the local music economy.
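The two-step workflow above can be sketched in a few lines. This is not Cyanite’s or Melodie’s API. The sketch rests on three assumptions:

- Each track already has a sound-based embedding vector. The three-dimensional vectors below are made up.
- Candidates are ranked by cosine similarity to a reference track.
- “Show Australian artists only” is modeled as a hypothetical `australian_artist` tag used as a hard filter.

Play counts are stored on each track but deliberately ignored by the ranking.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CatalogTrack:
    title: str
    embedding: list[float]                       # hypothetical sound-based embedding
    tags: set[str] = field(default_factory=set)  # custom contextual tags
    plays: int = 0                               # stored, but never used for ranking


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def similar_tracks(reference: CatalogTrack, catalog: list[CatalogTrack],
                   required_tag: Optional[str] = None, top_k: int = 3) -> list[str]:
    """Rank tracks by sonic similarity to the reference, optionally keeping
    only tracks that carry `required_tag`."""
    candidates = [
        t for t in catalog
        # Skip the reference itself, then apply the contextual filter if one is set.
        if t is not reference and (required_tag is None or required_tag in t.tags)
    ]
    candidates.sort(key=lambda t: cosine_similarity(reference.embedding, t.embedding), reverse=True)
    return [t.title for t in candidates[:top_k]]


reference = CatalogTrack("reference_song", [1.0, 0.0, 0.0])
catalog = [
    CatalogTrack("big_name_match", [0.9, 0.1, 0.0], {"international"}, plays=5_000_000),
    CatalogTrack("local_indie_match", [0.95, 0.05, 0.0], {"australian_artist"}, plays=40),
    CatalogTrack("local_off_brief", [0.0, 1.0, 0.0], {"australian_artist"}, plays=900),
]
print(similar_tracks(reference, catalog, top_k=2))
# ['local_indie_match', 'big_name_match']
print(similar_tracks(reference, catalog, required_tag="australian_artist", top_k=1))
# ['local_indie_match']
```

Filtering before ranking returns the same tracks as ranking first and filtering afterwards, as long as the whole candidate list is ranked. A system searching a large catalog would more likely use an approximate nearest-neighbor index, which this sketch does not attempt.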

Adding context could easily clutter a catalog. Evan Buist, Managing Director of Melodie Music, describes the design principle that keeps their approach from doing so:

“We only introduce tags when they serve a clear purpose for the user and the artist. Crucially, tags are optional pathways, not restrictive labels. They exist to empower choice—like finding tracks created by an Australian artist—not to define an artist by a single attribute. By working closely with our stakeholders, we ensure that our metadata adds value and visibility without diminishing the complexity of the artistry.”

Metadata should expand what’s possible for specific use cases, not constrain how an artist is understood across the rest of the catalog.


The business case for getting this right

Platforms that can surface the right artist for a specific brief have a genuine differentiator in an increasingly commoditized market. As clients bring more nuanced mandates, generic platforms that rely on popularity signals and keyword-only search will struggle to compete with those that can actually deliver on briefs.

Thematic, whose creative community is built to connect the right track with the right creator at the right moment, offers another angle. Before Thematic integrated Cyanite, finding that match was slow. Creators cycled through tracks, guessing at genre labels and trying to articulate sounds they could hear in their heads but couldn’t describe.

After integrating Cyanite’s Auto-Tagging and Similarity Search, Thematic saw a nearly 9% decrease in the number of times a creator plays a song before downloading it. They also reported a nearly 15% increase in creators downloading tracks directly from the personalized “For You” page. That increase is credited to Advanced Search functionality, which enables song recommendations based on what a specific creator has already downloaded and used. Discovery became effortless: the right track could find a creator before they even went looking for it.
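Recommendations driven by a creator’s own download history are often built on a profile vector. The sketch below shows a generic content-based approach. It is not Thematic’s or Cyanite’s implementation. It assumes every track has a sound-based embedding; the two-dimensional vectors and track names are invented. It averages the embeddings of the tracks a creator has downloaded, then suggests the closest tracks they haven’t downloaded yet.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def for_you(downloaded: list[str], embeddings: dict[str, list[float]], top_k: int = 2) -> list[str]:
    """Recommend tracks that sound closest to the average of a creator's downloads."""
    if not downloaded:
        return []  # no history yet, so no profile to compare against
    dims = len(embeddings[downloaded[0]])
    # Profile vector: element-wise mean of the downloaded tracks' embeddings.
    profile = [sum(embeddings[t][i] for t in downloaded) / len(downloaded) for i in range(dims)]
    already = set(downloaded)
    candidates = [t for t in embeddings if t not in already]
    candidates.sort(key=lambda t: cosine_similarity(profile, embeddings[t]), reverse=True)
    return candidates[:top_k]


embeddings = {
    "lofi_a": [1.0, 0.0],
    "lofi_b": [0.9, 0.1],
    "lofi_c": [0.8, 0.2],
    "metal_a": [0.0, 1.0],
}
print(for_you(["lofi_a", "lofi_b"], embeddings, top_k=1))  # ['lofi_c']
```

Averaging is the simplest possible profile. A real system might instead weight recent downloads more heavily or keep several profiles per creator. Those are design choices this sketch leaves open.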

For artists, this means better matching and therefore more placements, more exposure, and more income. As Audrey Marshall, co-founder and COO at Thematic, put it: “Discoverability can often be a visibility problem dressed up as a quality problem. A great song that isn’t tagged correctly can sit undiscovered for months.”

What platforms should be asking themselves

If you manage a catalog with a mix of established and emerging artists, a few questions are worth sitting with:

- Do your least-known artists have the same structural shot at being discovered as your most popular ones?

- Have you audited your AI models for the kinds of bias your training data may have introduced?

- Which contextual dimensions matter most for your catalog and your client base?

- How can custom tagging translate your editorial values into something a user can actually filter by?

A track added three years ago with incomplete metadata should not be permanently disadvantaged compared to one onboarded last month with a full tagging workflow. Every artist deserves a fair chance at being discovered.

AI tagging is a policy decision as much as a technical one

The platforms building a genuinely fairer discovery experience are those investing in consistent, foundational AI tagging, auditing their models regularly to catch the biases training data can introduce, and adding contextual layers that reflect what their catalogs and clients actually need.

When fairness becomes a priority, artists get found and platforms build the kind of trust that compounds over time.

If you want to see what ethical and smarter discovery looks like in practice, explore what Cyanite can do for your catalog.