Spotify set a new benchmark for personalized music discovery in 2015 with the launch of Discover Weekly, which now has as many as 751 million monthly users. Listeners now expect that level of recommendation from every streaming platform.

Spotify relies largely on collaborative filtering to deliver those recommendations at scale. The platform compares listening behavior across millions of users and predicts what someone might like based on similar listeners’ behavior. Running this feature requires enormous behavioral datasets, dedicated data science teams, and continuous optimization.

Few music libraries and streaming platforms have that volume of interaction data or the machine learning resources to process it. Cyanite makes it possible to run a comparable personalized discovery system without them.

Why collaborative filtering alone is limiting

Collaborative filtering predicts what someone might like by comparing their activity, such as what they listen to, save, replay, or skip, with the activity of other users. It only works well when there is a large pool of behavioral data to compare against.

Because recommendations depend on interaction signals, they tend to favor what already performs well. Popular tracks generate more activity and become easier to recommend, while less-played songs receive far less exposure.

The same limitation affects new releases. A track that just entered the catalog has little to no listening data, making it difficult to surface in recommendations.
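
To make the cold-start effect concrete, here is a minimal sketch of item-to-item collaborative filtering based on co-occurrence counts. The user names, track IDs, and the `co_occurrence` and `recommend` helpers are all invented for illustration, and production systems use far more elaborate models. The point is only that a track nobody has interacted with scores zero, however it sounds.

```python
from collections import Counter
from itertools import combinations

# Hypothetical interaction data: user -> set of tracks they saved.
saves = {
    "user_a": {"hit_1", "hit_2", "deep_cut"},
    "user_b": {"hit_1", "hit_2"},
    "user_c": {"hit_1", "hit_2"},
    "user_d": {"hit_2", "deep_cut"},
}
# "new_release" is in the catalog but has no saves yet.
catalog = ["hit_1", "hit_2", "deep_cut", "new_release"]


def co_occurrence(saves):
    """Count how often each ordered pair of tracks was saved by the same user."""
    counts = Counter()
    for tracks in saves.values():
        for a, b in combinations(sorted(tracks), 2):
            counts[(a, b)] += 1
            counts[(b, a)] += 1
    return counts


def recommend(seed, saves, catalog):
    """Rank the other catalog tracks by co-occurrence with the seed track."""
    counts = co_occurrence(saves)
    scored = [(counts[(seed, track)], track) for track in catalog if track != seed]
    # Highest count first; ties broken alphabetically for a stable order.
    return sorted(scored, key=lambda pair: (-pair[0], pair[1]))


ranking = recommend("hit_1", saves, catalog)
print(ranking)
```

In this toy data, `hit_2` ranks first because three users saved it alongside `hit_1`, while `new_release` comes last with a score of 0 simply because no one has had the chance to save it.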

A scalable alternative: sound-based personalization

Sound-based personalization uses the sound of the music itself as the signal. By measuring sound similarity using AI-powered analysis, platforms and music libraries can generate recommendations without relying on large volumes of user interaction data.

Signals that platforms already collect, such as saved tracks, downloads, or favorites, can be used as reference points for personalization. Several tracks can also be combined to represent a listener’s taste and generate recommendations through multi-track similarity.

Because this approach doesn’t depend on massive engagement data, it scales more easily across platforms and catalogs of different sizes. The result is more sound-driven discovery, with reduced popularity bias and the ability to surface new releases even before they accumulate listening activity.

How this is made possible with Cyanite

Cyanite provides the audio intelligence layer to generate recommendations from the sound of the music itself. A track’s musical characteristics are made comparable across the catalog, allowing teams to start from one or several reference tracks and find similar songs.

Two core capabilities make this music search possible: Similarity Search and Advanced Search.

Similarity Search lets teams search from a reference track, whether it comes from their catalog, an uploaded file, or an external source such as a YouTube preview.

This aligns closely with how listeners actually discover music. When someone asks for “something similar,” they usually have a specific song in mind. Similarity Search turns that reference into a starting point and retrieves matching tracks across the catalog.
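
As a rough illustration of the general idea behind searching from a reference track, the sketch below ranks a tiny catalog by cosine similarity between feature vectors. It is not Cyanite's implementation or API: the three-number vectors, the track IDs, and the `similar_tracks` helper are hypothetical stand-ins for whatever representation an audio analysis system produces.

```python
import math

# Hypothetical feature vectors; a real system would derive these from audio analysis.
catalog = {
    "ambient_piano": [0.9, 0.1, 0.2],
    "soft_strings": [0.8, 0.2, 0.3],
    "club_techno": [0.1, 0.9, 0.8],
    "indie_rock": [0.4, 0.6, 0.5],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def similar_tracks(reference_id, catalog, limit=3):
    """Return the IDs of the tracks most similar to the reference, best first."""
    reference = catalog[reference_id]
    scored = [
        (cosine(reference, vector), track_id)
        for track_id, vector in catalog.items()
        if track_id != reference_id  # never return the reference itself
    ]
    scored.sort(reverse=True)
    return [track_id for _, track_id in scored[:limit]]


results = similar_tracks("ambient_piano", catalog)
print(results)
```

With these made-up vectors, `soft_strings` comes back first and `club_techno` last. No listening data is involved at any point, which is why the approach works for tracks that have never been played.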

To see how this works in practice, you can try it directly in the Cyanite Web App.

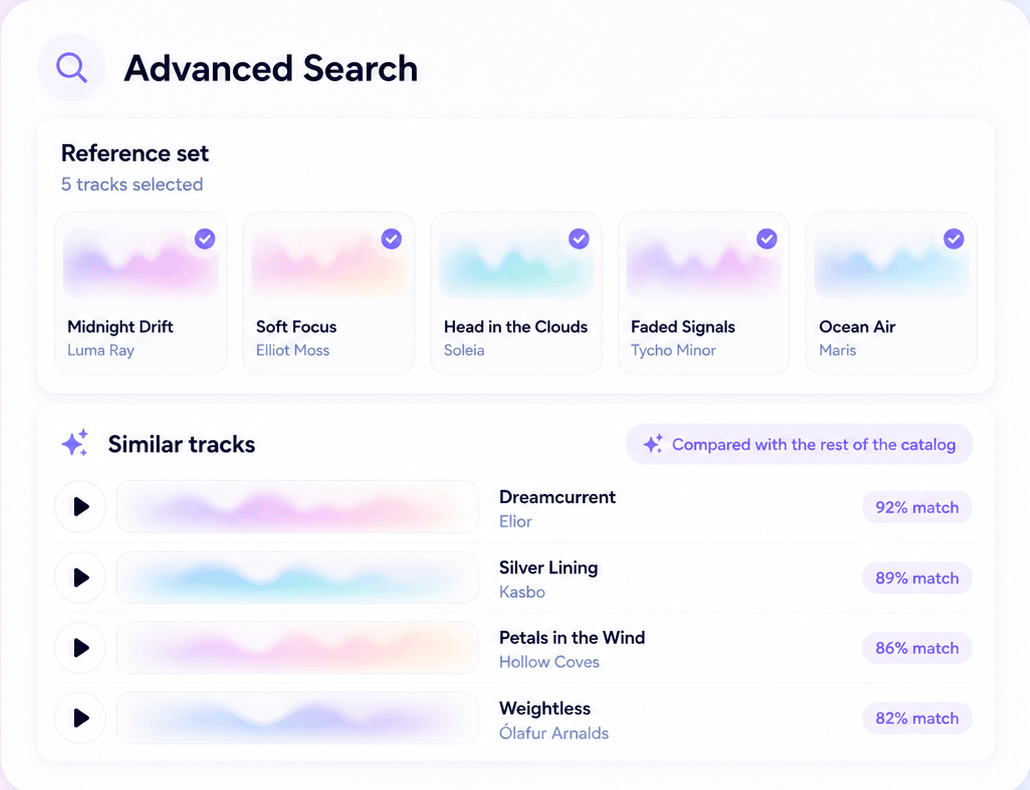

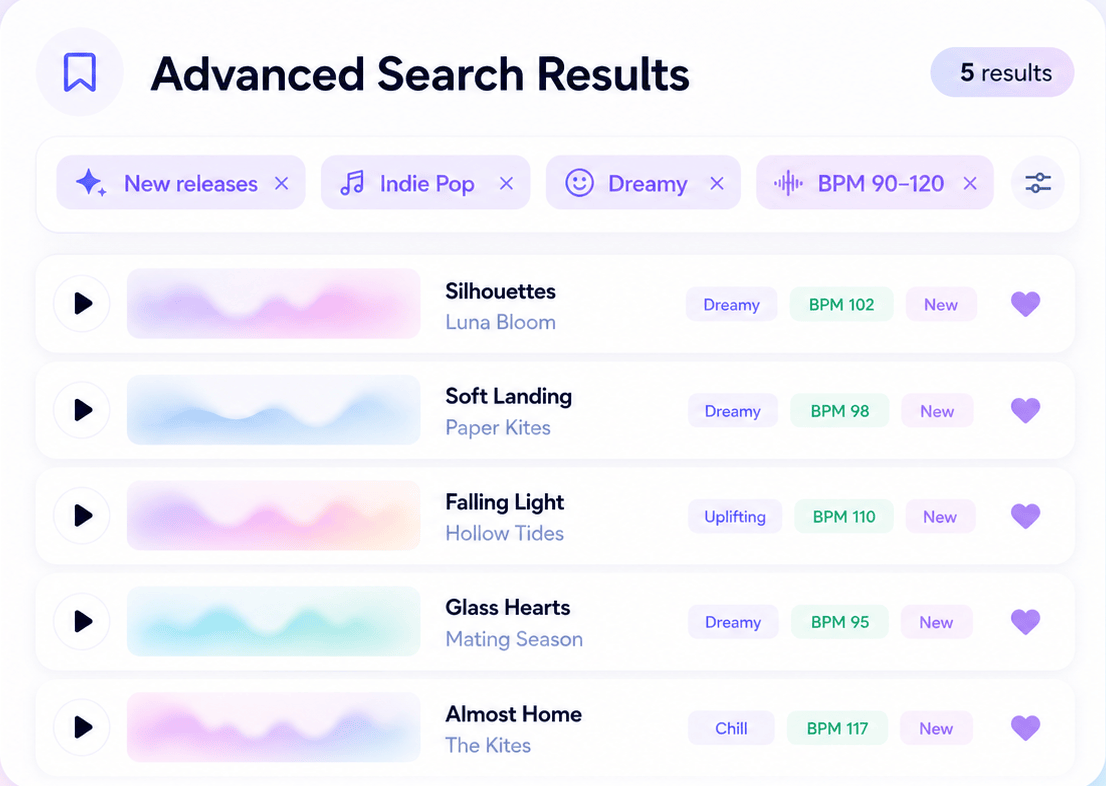

Advanced Search builds on Similarity Search, allowing platforms to generate recommendations from multiple reference tracks. Up to 50 tracks can be used together.

It also adds filtering. Platforms can refine results using Cyanite tags or custom tags, giving them control over what appears in the recommendations. For example, results can be filtered to surface new releases or other catalog attributes while still matching the listener’s taste.

Advanced Search is available through the Cyanite API.

What makes multi-track personalization powerful

When it comes to a listener’s taste, a single track only reflects part of the picture. Using several reference tracks provides more information about what that listener tends to save and enjoy, making patterns easier to detect. With a clearer picture of taste, platforms can generate recommendations that feel more tailored and support playlist-style discovery.
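
One simple way to combine several references, shown here purely as an assumption and not as a description of how Cyanite does it, is to average their feature vectors into a single taste profile and rank candidates against that. The vectors, track names, and helper functions below are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def taste_profile(vectors):
    """Element-wise mean of several reference vectors."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def best_match(query, candidates):
    """ID of the candidate whose vector is closest to the query vector."""
    return max(candidates, key=lambda track_id: cosine(query, candidates[track_id]))


# Hypothetical vectors, loosely [acoustic, electronic, energy].
references = {
    "fav_1": [0.9, 0.1, 0.2],
    "fav_2": [0.7, 0.3, 0.6],
    "fav_3": [0.8, 0.2, 0.7],
}
candidates = {
    "quiet_acoustic": [0.9, 0.1, 0.1],
    "upbeat_folk": [0.8, 0.2, 0.6],
}

best_single = best_match(references["fav_1"], candidates)
best_multi = best_match(taste_profile(list(references.values())), candidates)
print(best_single, best_multi)
```

Starting from `fav_1` alone, the closest candidate is `quiet_acoustic`. Once all three favorites are averaged, the listener's preference for higher-energy tracks shows up and `upbeat_folk` wins instead.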

How to personalize discovery without reinforcing popularity bias

Combining similarity with filtering allows platforms to shape discovery intentionally. Recommendations can still reflect a listener’s taste while prioritizing specific catalog segments, such as new releases or curated artist groups. This lets music libraries increase the exposure of new songs in the catalog and helps streaming platforms guide listeners toward new music.

Platforms like Melodie use Cyanite to generate recommendations and combine those results with editorial filters. Users can move quickly from a musical reference to relevant tracks, while Melodie still surfaces the artists and catalog segments it wants to promote.

Read more: How Melodie uses Cyanite and contextual metadata to spotlight Australian artists

Inside a multi-track personalization workflow

Let’s look at how this works in practice.

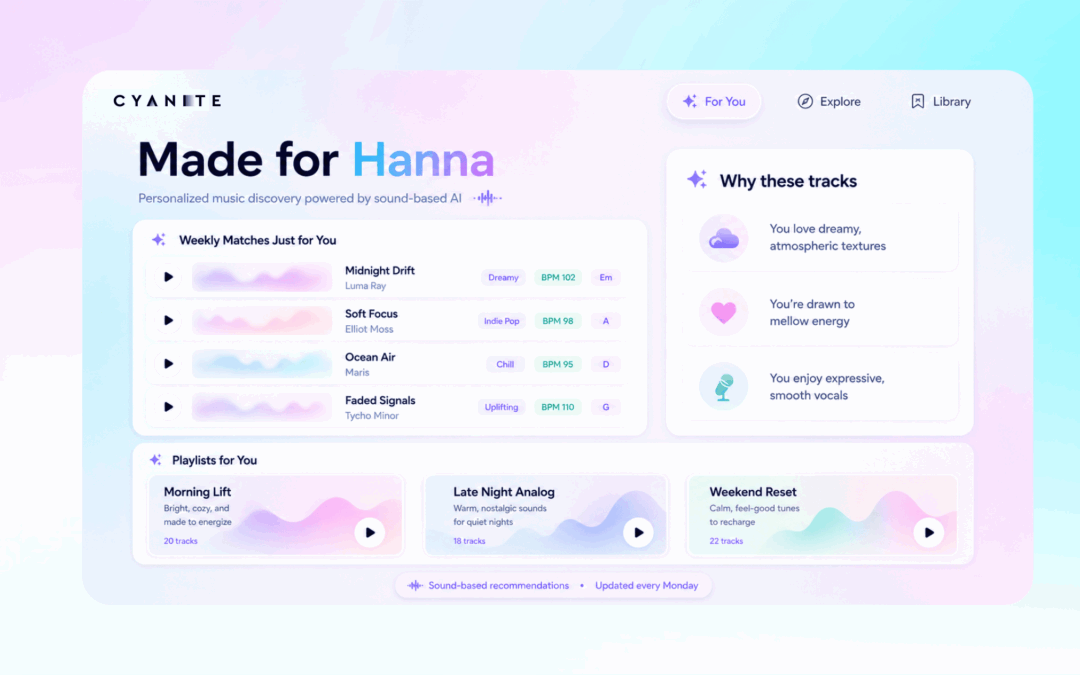

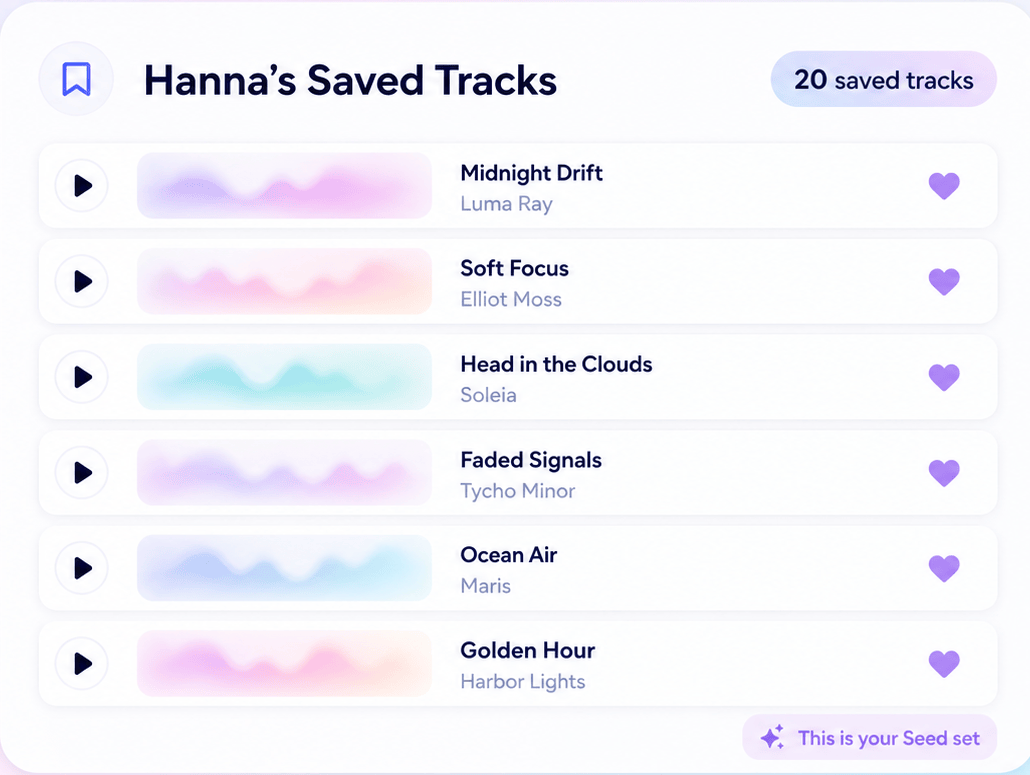

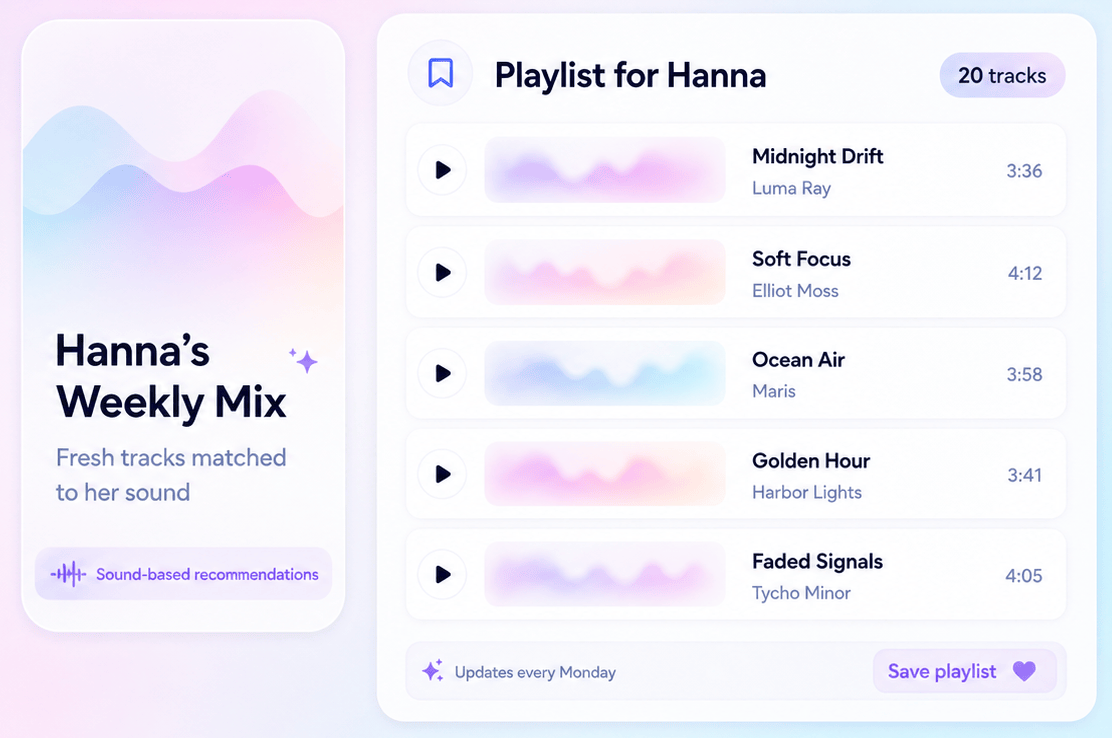

Picture a music library or streaming service that uses Cyanite to power discovery. A user, let’s call her Hanna, has saved 20 tracks as favorites over the past few weeks.

Instead of relying on other listeners’ behavior, the platform can use Hanna’s own saved tracks as the starting point for recommendations.

Step 1: Use Hanna’s favorites as the seed set

The last 20 tracks Hanna saved become the multi-track reference set. Together, they represent her taste far better than a single reference song.

Step 2: Run similarity search with Advanced Search

Using Cyanite’s Advanced Search feature, a similarity search is run with Hanna’s saved tracks as the reference set. Cyanite compares the sound of those tracks with the rest of the catalog and returns songs with comparable musical characteristics.

Step 3: Filter the results

Filters can then refine the results. For example, tracks tagged as “new releases” can be prioritized. Additional tags, such as genre, mood, or BPM, can narrow the results even further depending on the catalog structure.

Step 4: Generate the playlist

The filtered results can become a personalized playlist for Hanna. The tracks reflect the sound of the music she already enjoys, but she’s also introduced to songs she hasn’t yet heard. The experience feels similar to a Discover Weekly-style playlist, but the recommendations are sound-based.
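
The four steps can be sketched end to end as follows. This is a local toy model under stated assumptions, not a call to the Cyanite API: the catalog structure, the `build_playlist` function, its parameters, and the tag names are hypothetical, favorites are assumed to be ordered from oldest to newest, and the tag is applied as a hard filter where a real system might only boost tagged tracks.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def centroid(vectors):
    """Element-wise mean of the seed vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def build_playlist(favorites, catalog, seed_size=20, required_tag="new release", length=10):
    # Step 1: the most recently saved tracks form the seed set.
    seed_ids = favorites[-seed_size:]
    profile = centroid([catalog[track_id]["vector"] for track_id in seed_ids])

    # Step 2: score every track the listener has not already saved.
    saved = set(favorites)
    scored = [
        (cosine(profile, track["vector"]), track_id)
        for track_id, track in catalog.items()
        if track_id not in saved
    ]

    # Step 3: keep only tracks that carry the required tag.
    filtered = [(score, tid) for score, tid in scored if required_tag in catalog[tid]["tags"]]

    # Step 4: the best matches, in order, become the playlist.
    filtered.sort(reverse=True)
    return [tid for _, tid in filtered[:length]]


# Hypothetical catalog with made-up vectors and tags.
catalog = {
    "saved_1": {"vector": [0.9, 0.1, 0.3], "tags": set()},
    "saved_2": {"vector": [0.8, 0.2, 0.4], "tags": set()},
    "new_close": {"vector": [0.85, 0.15, 0.35], "tags": {"new release"}},
    "new_far": {"vector": [0.1, 0.9, 0.8], "tags": {"new release"}},
    "old_close": {"vector": [0.9, 0.1, 0.3], "tags": {"back catalog"}},
}
favorites = ["saved_1", "saved_2"]  # oldest first

playlist = build_playlist(favorites, catalog, length=2)
print(playlist)
```

In this example the playlist contains only the two tracks tagged as new releases, with the closer-sounding one first. `old_close` sounds identical to one of Hanna's favorites but is dropped by the tag filter, and tracks she already saved are never recommended back to her.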

Sound-based personalization in practice: Thematic’s “For You”

Thematic is a platform that connects content creators with music for their videos, and Cyanite powers personalized recommendations inside its “For You” page.

The feature analyzes a creator’s download history and recommends tracks with similar sounds. It also lets creators quickly access their recently used music. Thematic describes the page as follows:

“Your personalized hub for music discovery. Get tailored song recommendations based on the music you’ve downloaded, your creative style, and your video themes. Plus, quick access to your recent activity means you’ll never lose track of your go-to tracks.”

This shows how sound-based personalization is already implemented in real discovery features.

What this means for music platforms

Sound-based personalization changes how platforms can design discovery experiences and manage their catalogs.

Music libraries

- Engagement increases because recommendations reflect what listeners actually want to hear.

- New releases are surfaced more effectively, helping artists gain visibility earlier.

- Catalog exposure is more balanced. Attention is no longer concentrated on tracks that are already popular.

- Personalization scales without the need to maintain complex machine learning infrastructure.

Streaming services

- Platforms can deliver discovery experiences similar to features like Discover Weekly.

- Users benefit from strong personalization logic built from their listening history.

- There is less dependence on massive behavioral datasets.

- Sound-based discovery becomes part of the platform’s recommendation infrastructure.

When implementing these workflows, platforms also need to ensure that audio and catalog data stay protected. Learn how Cyanite supports privacy-first workflows.

Explore sound-based personalization with Cyanite

Saved tracks, downloads, and favorites are signals music platforms already collect. With Cyanite, those signals can become the foundation of personalized discovery.

Similarity Search can identify tracks that sit close to a reference song in terms of sound. And with Advanced Search, multiple reference tracks and metadata filters can be combined to shape recommendation systems such as personalized playlists or discovery feeds.

As music catalogs grow and discovery becomes more complex, sound-based personalization offers a scalable alternative to behavior-driven recommendation systems.

This allows music libraries and streaming services to turn existing listening activity into meaningful discovery features, using sound as the underlying recommendation logic.

Start experimenting with Cyanite today to explore how sound-based personalization could work in your platform.