Last updated on March 10th, 2026 at 12:37 pm


Music recommendation systems support discovery in large music libraries and applications. As access to digital music has expanded, the volume of available tracks has grown beyond what users can navigate through simple search or browsing alone.

Music services address this by relying on algorithmic recommendation systems to guide listeners and surface relevant tracks. These systems differ in how they generate recommendations and in the types of data they use, which leads to different results and tradeoffs depending on the use case.

In this article, we’ll go through how music-suggestion systems work and introduce the main approaches behind them, outlining how they are applied in practice.

Why music catalogs struggle

As music catalogs grow, manual search slows down. Results become less reliable and predictable. This is reinforced by inconsistent metadata, often caused by missing tags or legacy catalogs, which makes it difficult to surface the right tracks at the right time.

The result is lost opportunities:

- Pitching and licensing take longer because relevant tracks are harder to find.

- Monetization suffers when parts of the catalog remain unseen.

- In streaming services and music-tech platforms, weak discovery limits engagement and narrows what users actually explore.

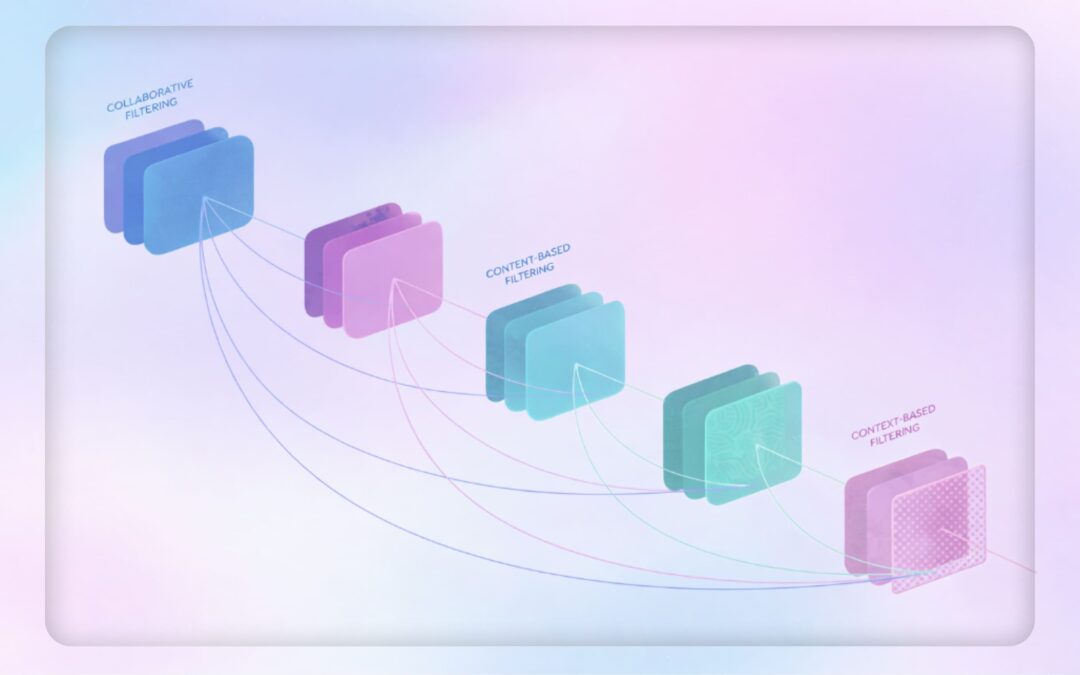

The three different music recommendation approaches

A music recommendation system suggests tracks by analyzing information such as audio similarity, metadata, user behavior, and context. Based on this analysis, the system surfaces music that fits a specific intent or situation.

In practice, this supports catalog workflows like finding tracks for sync projects, building playlists, and generating personalized recommendations within large music libraries.

1. Collaborative filtering

The collaborative filtering approach predicts what users might like based on their similarity to other users. To determine similar users, music-suggestion algorithms collect historical user activity such as track ratings, likes, and listening time.

People used to discover music through recommendations from friends with similar tastes, and the collaborative filtering approach recreates that. Only user information is relevant, since collaborative filtering doesn’t take into account any of the information about the music or sound itself. Instead, it analyzes user preferences and behavior and predicts the likelihood of a user liking a song by matching one user to another.

This approach’s most prominent problem is filter bubbles, which can arise when collaborative filtering algorithms reinforce existing user preferences, potentially narrowing musical exploration. Despite being designed to personalize experiences, these systems may inadvertently create echo chambers by prioritizing content similar to what users have already engaged with.

Another problem with this approach is the cold start: at the beginning, the system doesn’t have enough information to provide accurate recommendations. This applies to new users whose listening behavior has not yet been tracked. New songs and artists are also affected, because the system has nothing to learn from until users interact with them.

Collaborative filtering approaches

Collaborative filtering can be implemented by comparing users or items:

- User-based filtering establishes user similarity. User A is similar to user B, so they might like the same music.

- Item-based filtering establishes the similarity between items based on how users have interacted with them. Item A can be considered similar to item B when the same users tend to give both similar ratings.
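The difference between the two variants can be sketched in a few lines of Python. This is a minimal illustration, not a production recommender: the `ratings` data, the 1 to 5 rating scale, and all function names are hypothetical, and plain cosine similarity is only one of several measures that could be used here.

```python
from math import sqrt

# Hypothetical ratings: user -> {track: rating on a 1-5 scale}
ratings = {
    "user_a": {"track_1": 5, "track_2": 4, "track_3": 1},
    "user_b": {"track_1": 5, "track_2": 5, "track_4": 4},
    "user_c": {"track_3": 5, "track_4": 1, "track_5": 5},
}

def cosine(u, v):
    """Cosine similarity between two sparse vectors stored as dicts."""
    shared = set(u) & set(v)
    if not shared:
        return 0.0
    dot = sum(u[k] * v[k] for k in shared)
    norm_u = sqrt(sum(x * x for x in u.values()))
    norm_v = sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v)

def user_similarity(a, b):
    """User-based view: compare two users by the tracks they rated."""
    return cosine(ratings[a], ratings[b])

def item_vectors():
    """Transpose the data: track -> {user: rating}."""
    items = {}
    for user, tracks in ratings.items():
        for track, score in tracks.items():
            items.setdefault(track, {})[user] = score
    return items

def item_similarity(t1, t2):
    """Item-based view: compare two tracks by who rated them and how."""
    items = item_vectors()
    return cosine(items[t1], items[t2])

def recommend_for(user, k=1):
    """Suggest unheard tracks, weighting each rating by neighbor similarity."""
    scores = {}
    for other, their_ratings in ratings.items():
        if other == user:
            continue
        sim = user_similarity(user, other)
        for track, score in their_ratings.items():
            if track not in ratings[user]:
                scores[track] = scores.get(track, 0.0) + sim * score
    return sorted(scores, key=scores.get, reverse=True)[:k]
```

With this toy data, `user_a` is closer to `user_b` than to `user_c`, so `recommend_for("user_a")` favors `track_4`, which `user_b` rated highly. Note that nothing about the audio enters the calculation.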

Collaborative filtering also relies on different forms of user feedback:

- Explicit ratings are direct feedback that users give on items, such as likes or shares. However, not all items receive ratings, and users sometimes interact with an item without rating it. In that case, implicit ratings can be used.

- Implicit ratings are inferred from user activity. When a user doesn’t rate a track but listens to it 20 times, the system assumes that the user likes it.
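As a rough illustration of how the two feedback types might be combined, the sketch below prefers explicit feedback when it exists and otherwise derives a score from play counts. The function name, the logarithmic scaling, and the `SATURATION_PLAYS` threshold (set to 20 to echo the example above) are all assumptions made for this example, not a description of any particular service.

```python
from math import log1p

# Hypothetical threshold: play counts at or above this map to the maximum score.
SATURATION_PLAYS = 20

def preference_score(play_count, explicit_like=None):
    """Return a preference score between 0.0 and 1.0.

    explicit_like: True/False if the user gave explicit feedback, else None.
    """
    if explicit_like is not None:
        # Explicit feedback wins whenever it is available.
        return 1.0 if explicit_like else 0.0
    # Implicit feedback: repeated listening raises the score with
    # diminishing returns, so play 2 matters more than play 19.
    return min(log1p(play_count) / log1p(SATURATION_PLAYS), 1.0)
```

A logarithm is used so that the first few plays carry the most weight; a linear scale or a fixed threshold would be equally valid starting points.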

2. Context-aware recommendation approach

Context-aware recommendation focuses on how music is used in a given setting. This involves factors like the listener’s activity and circumstances. These things can influence music choice but are not captured by collaborative filtering or content-based approaches.

Research by the Technical University of Berlin links music listening choices to the listener’s context, which can be environment-related or user-related.

Environment-related context

Earlier recommender systems linked the user’s geographical location to music. For example, when visiting Venice, you could listen to a Vivaldi concert. When walking the streets of New York, you could blast Billy Joel’s “New York State of Mind.” Emotion-indicating tags and knowledge about musicians were used to recommend music that fit a geographical place.

User-related context

User-related context describes the listener’s current situation, including what they are doing, how they are feeling, where they are, the time of day, and whether they are alone or with others.

These factors can significantly influence music choice. For example, when working out, you might want to listen to more energetic music than your usual listening habits and musical preferences would suggest.
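One simple way to picture this is a re-ranking step that blends a listener’s usual taste score with how well a track fits the current activity. The sketch below is illustrative only: the `Track` fields, the activity names, the target energy values, and the `context_weight` parameter are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Track:
    title: str
    energy: float      # 0.0 (calm) to 1.0 (intense); hypothetical scale
    base_score: float  # fit with the listener's usual taste, 0.0 to 1.0

# Hypothetical mapping from activity to a target energy level.
CONTEXT_TARGET_ENERGY = {"workout": 0.9, "focus": 0.3, "commute": 0.6}

def rerank(tracks, activity, context_weight=0.5):
    """Re-rank tracks by blending taste fit with context fit."""
    target = CONTEXT_TARGET_ENERGY.get(activity)
    if target is None:
        # Unknown context: fall back to the taste-based ranking.
        return sorted(tracks, key=lambda t: t.base_score, reverse=True)

    def score(track):
        context_fit = 1.0 - abs(track.energy - target)
        return (1 - context_weight) * track.base_score + context_weight * context_fit

    return sorted(tracks, key=score, reverse=True)

tracks = [
    Track("Ambient Drift", energy=0.2, base_score=0.9),
    Track("Sprint Anthem", energy=0.95, base_score=0.6),
]
```

Here `rerank(tracks, "workout")` puts "Sprint Anthem" first even though "Ambient Drift" matches the listener’s usual taste more closely, which mirrors the workout example above.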

3. Content-based filtering

Content-based filtering uses metadata attached to the audio, such as descriptions or keywords (tags), as the basis of the recommendation. When a user likes an item, the system determines that they are likely to enjoy other items with similar metadata.
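A minimal sketch of this idea compares tracks by the overlap of their tags. The catalog, the tag names, and the choice of Jaccard similarity are assumptions for illustration; real systems typically use richer metadata and weighting.

```python
def jaccard(a, b):
    """Share of tags two tracks have in common (0.0 to 1.0)."""
    a, b = set(a), set(b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

# Hypothetical catalog: track ID -> set of descriptive tags
catalog = {
    "track_1": {"indie", "uplifting", "guitar"},
    "track_2": {"indie", "uplifting", "piano"},
    "track_3": {"techno", "dark", "synth"},
}

def similar_by_tags(liked, catalog, k=2):
    """Rank other tracks by tag overlap with a track the user liked."""
    liked_tags = catalog[liked]
    others = [
        (track_id, jaccard(liked_tags, tags))
        for track_id, tags in catalog.items()
        if track_id != liked
    ]
    return sorted(others, key=lambda pair: pair[1], reverse=True)[:k]
```

Calling `similar_by_tags("track_1", catalog)` returns `[("track_2", 0.5), ("track_3", 0.0)]`. The result is only as good as the tags: a missing or wrong tag changes the ranking directly.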

There are two common ways to assign metadata to content items: through a human-based or automated approach.

The human-based approach can take two forms: professional curation by library editors who characterize content with genre, mood, and other classes, or crowdsourced metadata assignment where a community manually tags content. The more people participate in crowdsourcing, the more accurate and less subjectively biased the metadata tends to become.

Human-based approaches, crowdsourcing in particular, require significant resources. As Alex Paguirian, Product Manager at Marmoset, comments: “When it comes down to calculating the BPM and key of any given song, you would have to put someone behind a piano with a metronome, which is completely unsustainable and a strange use of labor.” This illustrates why automated systems are increasingly used to characterize music at scale.

In the automated approach, algorithmic systems characterize content without manual tagging. This is what we’re doing at Cyanite. We use AI to understand music and assign relevant tags to the songs in our system.

Musical metadata

Musical metadata is information that is adjacent to the audio file. It can be objectively factual or subjectively descriptive. In the music industry, the latter is also often referred to as creative metadata.

For example, artist, album, and year of publication are factual metadata. Creative metadata describes the actual content of a musical piece; for example, the mood, energy, and genre. Understanding the types of metadata and organizing the library’s taxonomy in a consistent way is very important, as the content-based recommender uses this metadata to select music. If the metadata is flawed, the recommender might pull out the wrong track.

Content-based recommender systems can use factual metadata, descriptive metadata, or a combination of both. They allow for more objective evaluation of music and can increase access to long-tail content, improving search and discovery in large catalogs.

When it comes to automating this process, companies like Cyanite step in.

Music metadata extraction through MIR

Music information retrieval (MIR) refers to the techniques used to extract descriptive metadata from music. It’s an interdisciplinary research field combining digital signal processing, machine learning, artificial intelligence, and musicology. In music analysis, its scope ranges from BPM and key detection to higher-level tasks such as automatic genre and mood classification. It also involves research on musical audio similarity and related music search algorithms.
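To give a feel for the simplest end of that range, the toy sketch below estimates tempo from a list of note onset times. It assumes the onsets have already been detected and that each one falls on a beat; real BPM detection works on the audio signal itself and is considerably more involved. The function name and input format are hypothetical.

```python
from statistics import median

def estimate_bpm(onset_times):
    """Estimate tempo in beats per minute from onset times in seconds.

    Assumes every onset falls on a beat, which real audio rarely guarantees.
    """
    if len(onset_times) < 2:
        raise ValueError("need at least two onsets to measure an interval")
    intervals = [later - earlier for earlier, later in zip(onset_times, onset_times[1:])]
    # The median is less sensitive to a stray early or late onset than the mean.
    return 60.0 / median(intervals)
```

For onsets at `[0.0, 0.5, 1.0, 1.5, 2.0]`, the interval is half a second, so `estimate_bpm` returns `120.0`.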

At Cyanite, we apply a combination of MIR techniques and neural network models to analyze full audio tracks and generate structured, sound-based metadata, such as genre, mood, energy, tempo, and instrumentation, at catalog scale.

How Cyanite powers AI recommendations

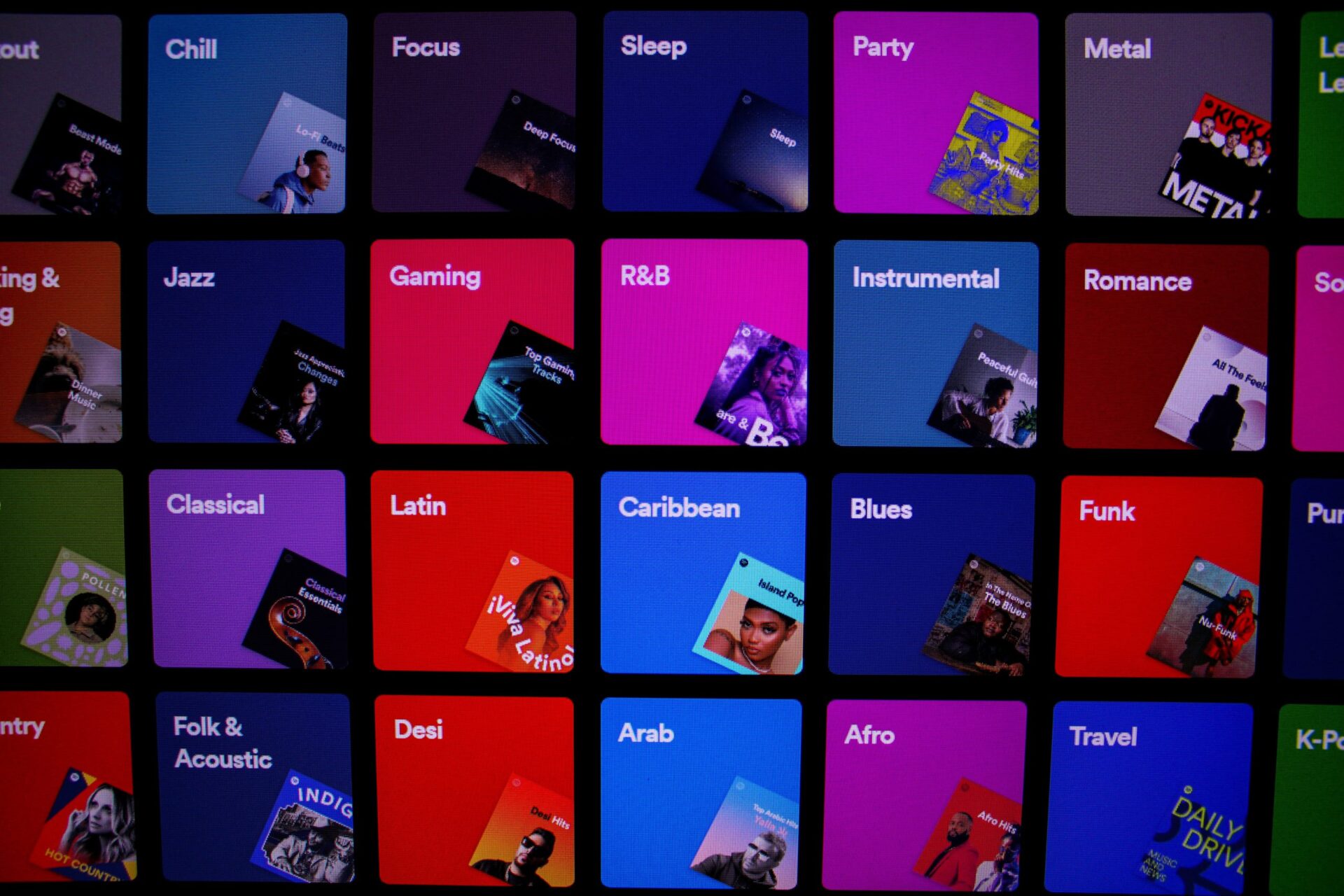

Most consumer music platforms rely on behavior-based recommendation systems. Spotify, for example, uses collaborative filtering, now likely supported by AI. These systems learn from listening behavior and user similarity, which can lead to filter bubbles and give artists who already have many listeners a consistent advantage.

Cyanite’s AI recommendations are based purely on sound. Each track is analyzed to capture its audible musical characteristics through MIR. These characteristics are translated into embeddings, which represent how a track sounds in a form that can be compared at scale.

Our MIR algorithms are developed entirely in-house and are considered an industry standard.

The embeddings serve two purposes.

- They are used to generate musical metadata, also called Auto-Tagging. This produces structured, sound-based metadata such as genre, mood, energy, tempo, key, instrumentation, and voice presence. Auto-Tagging analyzes the full audio of each track and applies these labels consistently across the catalog.

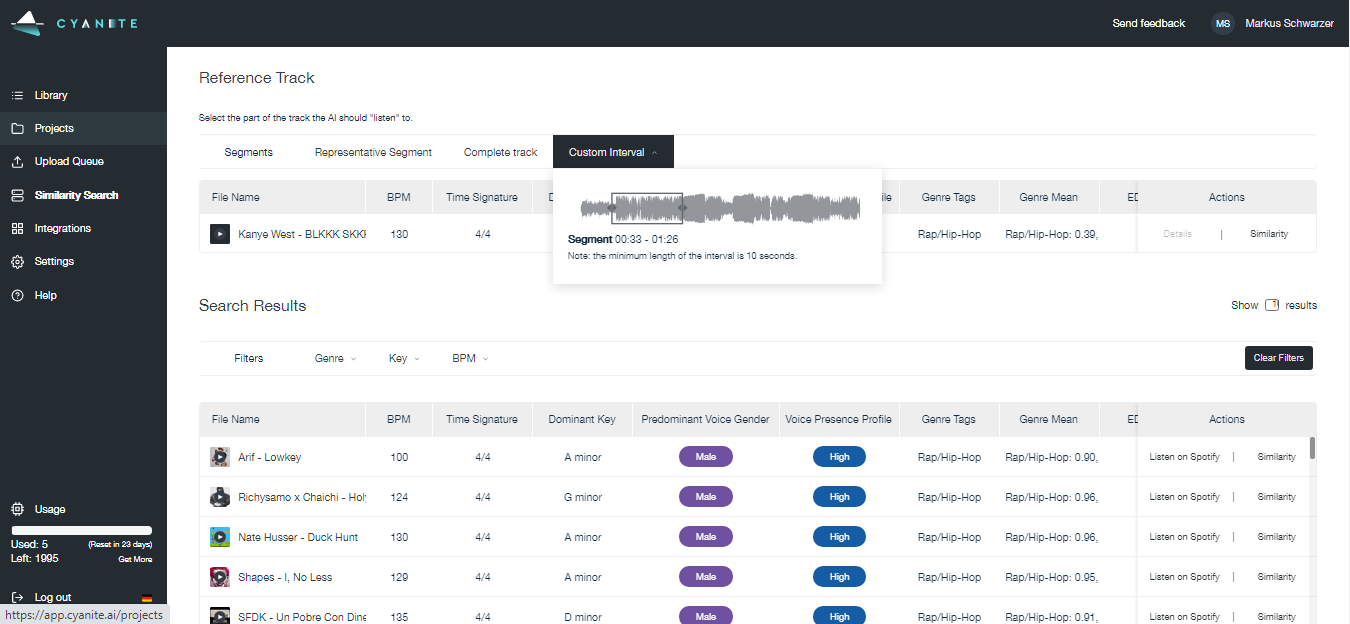

- The same embeddings enable sound-based comparison. When teams work with search and recommendations, Similarity Search compares a reference track with the rest of the catalog by measuring the similarity of embeddings. Tracks that are most alike in sound are returned as a ranked recommendation list. The same embeddings also power Free Text Search, where teams can describe a desired sound in natural language and find tracks that fit that description. In both cases, artist size and popularity don’t influence the result, which helps democratize the search process.
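The general idea of comparing embeddings can be sketched as follows. This is not Cyanite’s implementation: the three-dimensional vectors, the track IDs, and the use of cosine similarity are assumptions for illustration, and real embeddings have far more dimensions.

```python
from math import sqrt

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical embeddings: track ID -> vector describing how the track sounds
embeddings = {
    "reference": [0.9, 0.1, 0.3],
    "close_match": [0.8, 0.2, 0.35],
    "different": [0.1, 0.9, 0.2],
}

def similarity_search(reference_id, embeddings, top_k=5):
    """Rank every other track by how close its embedding is to the reference."""
    reference = embeddings[reference_id]
    scored = [
        (track_id, cosine_similarity(reference, vector))
        for track_id, vector in embeddings.items()
        if track_id != reference_id
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]
```

Because the ranking depends only on the vectors, play counts and artist popularity never enter the calculation.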

You can try our search algorithms via our Web App, with five free monthly analyses. For more advanced discovery workflows, Advanced Search is available through the API. It builds on Similarity Search and Free Text Search by adding similarity scores, multiple reference tracks, and the option to upload custom tags, which can be used as filters. This allows teams to refine results against their own taxonomy or brief requirements.
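Conceptually, searching with multiple reference tracks and tag filters could look like the sketch below. It does not use or describe the Cyanite API: the catalog structure, the `multi_reference_search` function, the tag names, and the idea of averaging the reference embeddings are all hypothetical choices made for this example.

```python
from math import sqrt

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def centroid(vectors):
    """Average several embeddings into a single query vector."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

# Hypothetical catalog with toy embeddings and custom tags
catalog = {
    "ref_a": {"embedding": [1.0, 0.0, 0.2], "tags": {"approved"}},
    "ref_b": {"embedding": [0.8, 0.2, 0.2], "tags": {"approved"}},
    "t1": {"embedding": [0.9, 0.1, 0.2], "tags": {"approved", "instrumental"}},
    "t2": {"embedding": [0.85, 0.15, 0.25], "tags": {"instrumental"}},
    "t3": {"embedding": [0.1, 0.9, 0.3], "tags": {"approved"}},
}

def multi_reference_search(reference_ids, catalog, required_tags=(), top_k=10):
    """Rank tracks against several references, keeping only tagged matches."""
    query = centroid([catalog[ref]["embedding"] for ref in reference_ids])
    required = set(required_tags)
    results = []
    for track_id, track in catalog.items():
        if track_id in reference_ids:
            continue  # do not return the references themselves
        if not required <= track["tags"]:
            continue  # custom tags act as a hard filter
        results.append((track_id, cosine_similarity(query, track["embedding"])))
    results.sort(key=lambda pair: pair[1], reverse=True)
    return results[:top_k]
```

Returning the score alongside each track ID lets a team set its own cut-off, for example by showing only results above a chosen similarity.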

The API lets teams run Auto-Tagging and search directly inside their own tools or platforms. They don’t need to work in a separate interface. Auto-Tagging can be used on its own, or teams can combine it with Music Search to find the right tracks for sync, playlists, marketing, and similar day-to-day use cases.

AI recommendation use cases

The following use cases highlight where AI recommendations add practical value in professional music discovery.

- Finding alternatives that are musically similar to a known reference track: Sometimes, a desired sound is easier to point to than describe. At Melodie Music, Marmoset, and Chromatic Talents, reference tracks are used in these situations as concrete starting points. Teams upload or link a reference track, then use Similarity Search to explore alternatives that share comparable musical characteristics.

- Turning vague or subjective descriptions into usable search results: At Melodie Music, users often struggled to translate creative intent into fixed keywords, even in a well-curated catalog. Free Text Search allows them to describe a desired sound in their own words, while Similarity Search lets them move from a reference track to close matches that are alike in feel and structure. This reduces the need to guess the “right” tags and shortens the trial-and-error loop between searching and listening.

- Reducing time spent browsing large music catalogs: Similarity Search and Free Text Search guide users to a smaller, relevant set of tracks. This means teams working with large catalogs spend less time browsing. Instead of scanning hundreds of options, users begin with a reference or written description and listen with clear intent, helping them reach confident decisions faster while retaining creative control.

Finding what your catalog needs

Choosing a music recommendation approach depends on your specific needs and the data you have available. A trend we’re seeing is a hybrid approach that combines features of collaborative filtering, content-based filtering, and context-aware recommendations. However, all three fields are under constant development, and implementations differ considerably. What works for one music library might not be applicable to another.
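A hybrid can be as simple as a weighted blend of the three signals. The sketch below is one possible arrangement under stated assumptions: the function name, the default weights, and the convention of passing `None` for a missing signal are hypothetical.

```python
def hybrid_score(collaborative, content, context, weights=(0.4, 0.4, 0.2)):
    """Blend three scores (each 0.0 to 1.0) into one.

    Pass None for a signal that is unavailable, such as the collaborative
    score of a brand-new track. The remaining weights are renormalized.
    """
    signals = (collaborative, content, context)
    available = [(s, w) for s, w in zip(signals, weights) if s is not None]
    if not available:
        return 0.0
    total_weight = sum(w for _, w in available)
    return sum(s * w for s, w in available) / total_weight
```

For a new track with no listening history, `hybrid_score(None, 0.8, 0.6)` still produces a usable score from the content and context signals, which is one way a hybrid can soften the cold start problem.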

Common challenges across the field include access to sufficiently large data sets and a clear understanding of how different musical characteristics influence people’s perception and use of music. These challenges become especially visible in large or underutilized catalogs, where discovery can’t rely on user behavior alone.

To try out Cyanite’s technology, register for our free web app to analyze music and try similarity searches without the need for any coding.

FAQs

Q: What is an AI music recommendation system?

A: An AI music recommendation system suggests tracks by analyzing data such as audio characteristics, metadata, user behavior, or listening context. The goal is to surface music that fits a specific intent, use case, or situation within a large catalog. These systems are commonly used in music recommendation apps, professional catalogs, and music-tech platforms.

Q: What are the main types of music recommendation approaches?

A: The three most common approaches are collaborative filtering, content-based filtering, and context-aware recommendation. Many systems combine elements of all three to balance accuracy, scale, and flexibility.

Cyanite uses a content-based, sound-driven approach, generating recommendations by analyzing the audio itself rather than relying on user behavior or listening history. This makes our sound-based music recommender system well suited to large and professional catalogs, where discovery can’t rely on user behavior alone.

Q: How do music companies use AI recommendations today?

A: Music companies use AI song recommendations to speed up sync pitching, build playlists, surface underused catalog assets, support personalization in music-tech products, and select music for branding or retail projects. These workflows rely on music recommendation engines to reduce manual search and improve discovery.

Q: How does Cyanite approach music recommendations?

A: Cyanite analyzes the sound of full tracks to generate structured, audio-based metadata and embeddings. These embeddings are used for Auto-Tagging, Similarity Search, and Free Text Search in music catalogs, allowing tracks to be compared and recommended based on how they sound rather than on user interaction data.