Wondering if a track is AI-generated or human-made? Sign up for early access to our AI music detection feature here.

An article by Roman Gebhardt, CAIO at Cyanite.

97% of music professionals want to know whether a track is AI-generated or human-made. That number alone, which comes from a survey we conducted with Marmoset and Mediatracks, tells you how urgent the matter of AI music detection has become.

But demand for detection is only half the story. In an ongoing survey of artists that we’re conducting, 80% of respondents have said they don’t trust self-disclosure, and more than 70% say they fear being wrongly labeled as AI-generated.

This highlights a core challenge the industry still needs to solve: how to provide detection signals that are reliable enough to be trusted in real-world decisions.

That’s what this article is about. We’ll look at why detection is genuinely hard, where the real risks lie, and how we’re approaching the problem at Cyanite.

AI music detection is not a simple classification problem

Detection is often framed as a binary question: a track is either AI-generated or it isn’t. That framing suggests the solution is equally simple: just train a model on the right data and you’re done.

In practice, it’s far more complicated. The challenge lies in identifying which characteristics in the audio signal are reliable enough to support a confident conclusion. It’s not just about how a track sounds to a human listener. A track could be partially generated, post-processed, or intentionally altered to remove detectable patterns. Different generation systems introduce different signatures. And as those systems evolve, so do the techniques designed to evade detection, a dynamic often called the “AI Arms Race.”

This means detection doesn’t always give us a clean yes or no answer. It involves assessing the strength of signals, and that strength varies depending on how a track was created. It also raises harder questions: can AI-generated elements be localized within a track? How should partial generation be represented in a meaningful way?

These are active areas of research. What they suggest is that AI music detection is not a fixed problem with a fixed solution.

No detection system can reliably identify all AI-generated music, and any system that claims otherwise should be treated with caution. The goal isn’t perfect recall across every model that exists. The goal is trustworthy, reliable decision support under real-world conditions.

The real risk in AI music detection: false positives

The primary risk in AI detection is incorrectly labeling human-created music as AI-generated. These false positives directly impact artists and catalogs. They can lead to wrongful rejections and reputational damage, and ultimately, they can undermine trust in detection systems.

This is why optimizing for accuracy alone is not enough. A system can score well on overall accuracy and still mislabel human-made tracks. Detection systems must be designed to produce reliable, high-confidence signals and avoid overinterpretation.

A track should only be labeled as AI-generated when there is strong and consistent evidence to support that conclusion. When we developed our own detection models, that principle became the foundation: focus only on clear, well-understood indicators.
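To make the false-positive principle concrete, here is a minimal sketch of one common way to bound false positives: choosing a decision threshold from the scores a detector assigns to tracks known to be human-made. This is an illustration under stated assumptions, not a description of Cyanite’s models. The function names, the example scores, and the 20% limit are all hypothetical.

```python
def false_positive_rate(human_scores, threshold):
    """Fraction of known human-made tracks that would be flagged as AI."""
    flagged = sum(1 for s in human_scores if s > threshold)
    return flagged / len(human_scores)


def threshold_for_max_fpr(human_scores, max_fpr):
    """Pick a threshold so that at most `max_fpr` of human tracks score above it.

    Hypothetical helper: a track is flagged only if its score is strictly
    greater than the returned threshold.
    """
    if not human_scores:
        raise ValueError("need at least one human-made validation score")
    if not 0.0 <= max_fpr < 1.0:
        raise ValueError("max_fpr must be in [0, 1)")
    ordered = sorted(human_scores)
    n = len(ordered)
    allowed = int(max_fpr * n)  # how many human tracks may be flagged
    return ordered[n - allowed - 1]


# Made-up detector scores for ten tracks known to be human-made.
human_validation_scores = [0.01, 0.02, 0.03, 0.05, 0.08, 0.10, 0.15, 0.20, 0.40, 0.70]

threshold = threshold_for_max_fpr(human_validation_scores, max_fpr=0.2)
print(threshold, false_positive_rate(human_validation_scores, threshold))
```

The design choice worth noting is that the threshold is set from human-made material only. A stricter limit pushes the threshold up, which trades missed AI tracks for fewer wrongly labeled artists.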

Cyanite’s conservative approach to AI music detection

We approach AI music detection as a matter of reliable transparency, not just classification. Instead of trying to detect everything, we focus on identifying high-confidence signals that can support real-world decisions. Our position is deliberately conservative: we would rather withhold a label than apply one we can’t stand behind.

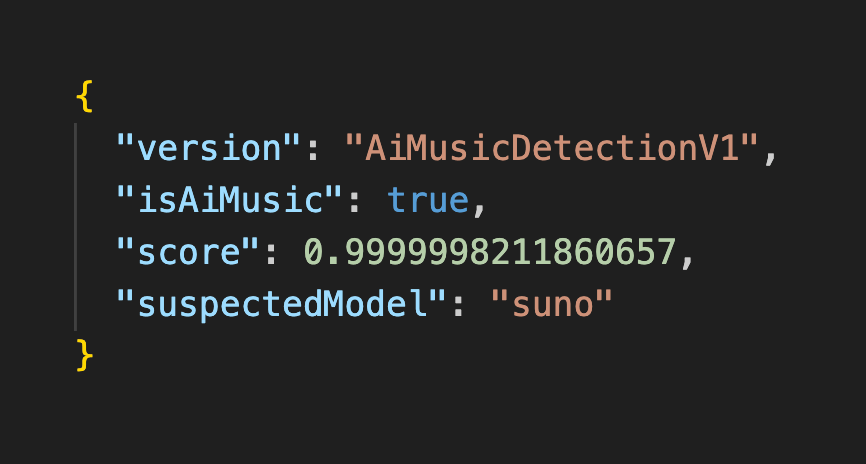

In practice, detection results are not binary flags. They are scores. Fully generated, unprocessed tracks tend to produce signals close to 1.0, indicating a very high likelihood of generated audio. Fully human-created material typically scores close to zero, reflecting the absence of detectable generation-specific patterns.

As content becomes more complex, through post-processing or mixing with human-created material, detection scores for AI-generated material can sit somewhere in between, and they need to be more carefully interpreted. Results should always be understood as signals to support decision-making, not definitive judgments.
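As a rough illustration of how a score might be read as a signal rather than a binary flag, the sketch below maps a score between 0 and 1 to one of three outcomes and withholds a label in the middle range. The cutoffs of 0.9 and 0.1, the `Verdict` names, and the `interpret_score` function are hypothetical choices for this example, not values or interfaces published by Cyanite.

```python
from enum import Enum


class Verdict(Enum):
    LIKELY_AI = "strong evidence of generated audio"
    INCONCLUSIVE = "mixed signal, needs human review"
    NO_AI_SIGNAL = "no generation-specific patterns detected"


def interpret_score(score, high=0.9, low=0.1):
    """Map a detection score in [0, 1] to a conservative verdict.

    `high` and `low` are assumed cutoffs; anything between them is
    deliberately left unlabeled.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= high:
        return Verdict.LIKELY_AI
    if score <= low:
        return Verdict.NO_AI_SIGNAL
    return Verdict.INCONCLUSIVE


for s in (0.97, 0.55, 0.03):
    print(s, interpret_score(s).value)
```

Note that the lowest band is worded as the absence of a detected signal, not as proof that a track is human-made, which matches the idea that results support a decision rather than make it.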

Because AI generation is constantly evolving, our detection approach evolves with it. We continuously analyze new generation systems and develop methods based on signals we can validate and understand. In some cases that means model-specific detection. In others, we look for characteristics that generalize across different types of generative models. We combine approaches rather than relying on a single method.

In recent months, a growing ecosystem of tools and services has emerged that aim to obscure or remove detectable characteristics from generated audio. With this in mind, we test how our signals hold up under deliberate obfuscation. In our current testing, the signals we rely on remain detectable even after those modifications. This robustness is by design. It’s central to what makes detection trustworthy enough to act on.

Building independent detection the industry can trust

Detection systems will increasingly influence decisions with real legal and economic consequences: whether a track is accepted or rejected, whether an artist is flagged or cleared.

That kind of influence demands neutrality.

Detection shouldn’t be controlled by the same companies whose tools it’s meant to evaluate, or be subject to any incentive that could quietly bias outcomes.

Independence is something we take seriously at Cyanite. It’s what allows the signals we produce to be relied on across platforms, catalogs, and workflows, by people who need to be able to trust the answer.

AI will continue to shape how music is created. The question is no longer whether it will be used, but whether the industry can build the transparency infrastructure to understand it responsibly. That requires continuous research, careful system design, and a commitment to getting it right rather than claiming to be able to detect everything.

It’s the approach we’ve taken with Cyanite AI Music Detection, and one we’ll keep developing as the landscape evolves.

Want to see our AI detection in action? Request early access here.