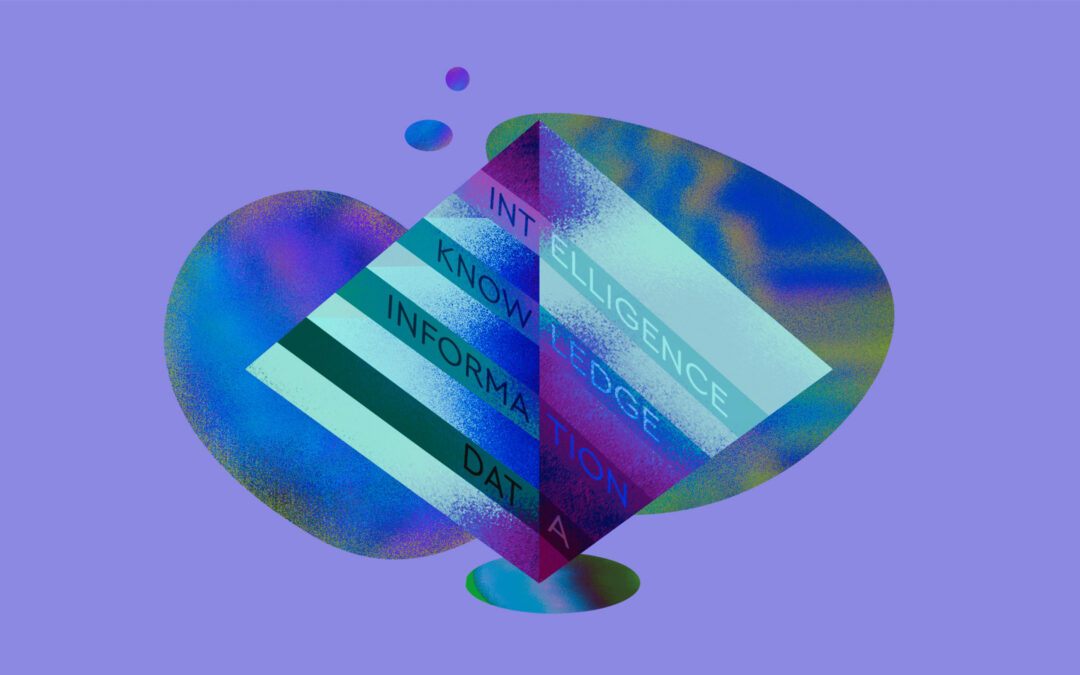

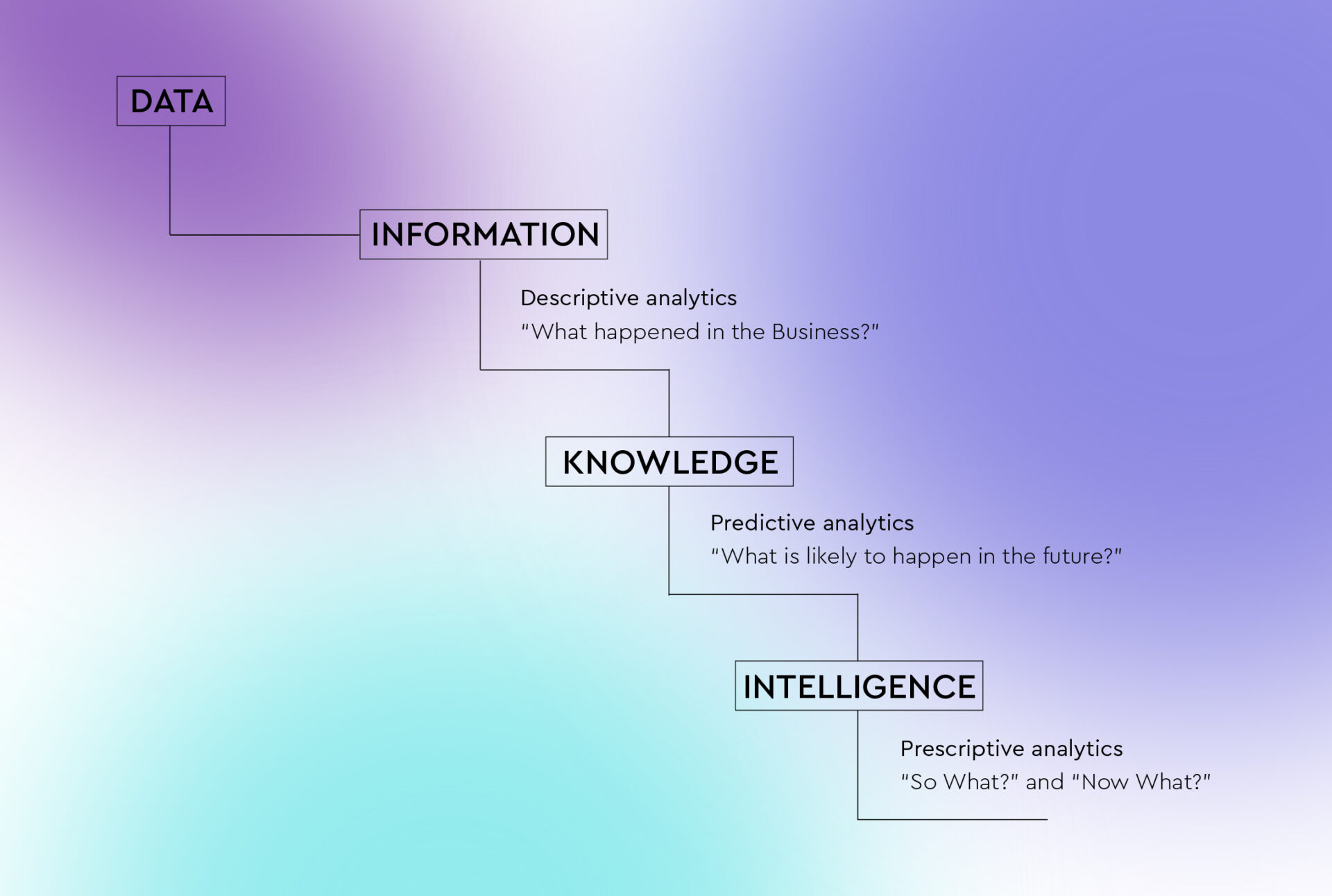

Benchmarking in the Music Industry – Knowledge Layer of the Data Pyramid

How to Turn Music Data into Actionable Insights: This article is the first one in the series. It reviews all layers of the Data Pyramid and shows how to turn raw data into actionable insights.

An Overview of Data in The Music Industry: This article presents all types of metadata in the industry from factual (objective) metadata to performance metadata. It is an essential guide for music professionals to understand all the various data sources available for analysis.

Making Sense of Music Data – Data Visualizations: This article discusses the second step of the Data Pyramid – Information and how information can be presented in the form of data visualizations, making it easier to comprehend large data sets.

At this step, attempts to look into the future and predict outcomes are made. The more specific the problem or context you’re observing is, the more precise your findings will be at this step. The Knowledge Layer produces analytics that help benchmark performance. As Liv Buli from Berklee Online University puts it, at the Knowledge layer you can tell that the artist of a certain size sells well after performing on the TV show and use this information to guide strategy for other artists of the same size. As a result, knowledge makes it possible to look at data in the industry-specific context and understand how you compare in relation to past successes and to competition. In that regard, benchmarking and setting expectations is the final outcome of the knowledge step.

Benchmarking can take different forms within the music industry:

Types of benchmarking

- Process benchmarking

This type of benchmarking deals with processes and aims to optimize internal and external processes in the company. You can improve the process by looking at what competitors are doing or setting one process against another. Processes relate to how things are done in the company, for example, the process of uploading songs to the catalog.

- Strategic benchmarking

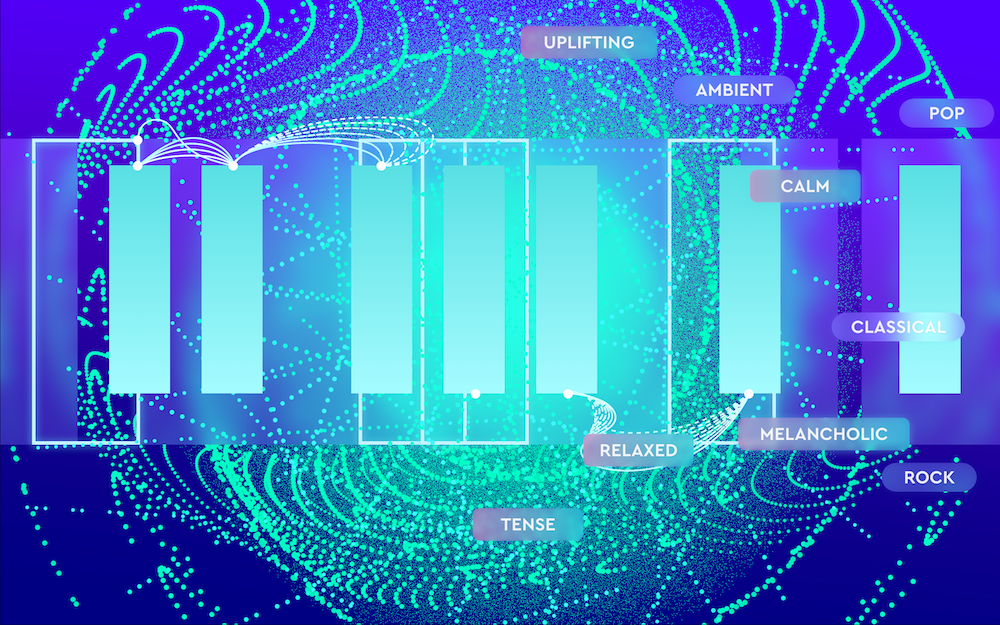

Strategic benchmarking focuses on the business’s best practices which is often more complex than the other two types of benchmarking and includes: competitive priorities, organizational strengths, and managerial priorities. For example, an assessment of how fans responded to the brand sound in the past can help devise a long-term sound branding strategy.

- Performance benchmarking

Performance benchmarking compares product lines, marketing, and sales figures to determine how to increase revenues. For example, as a marketing campaign for a music release develops over time, it can reveal the most vital money channels for exposure. Sales figures can indicate how artists compare to one another in terms of profitability.

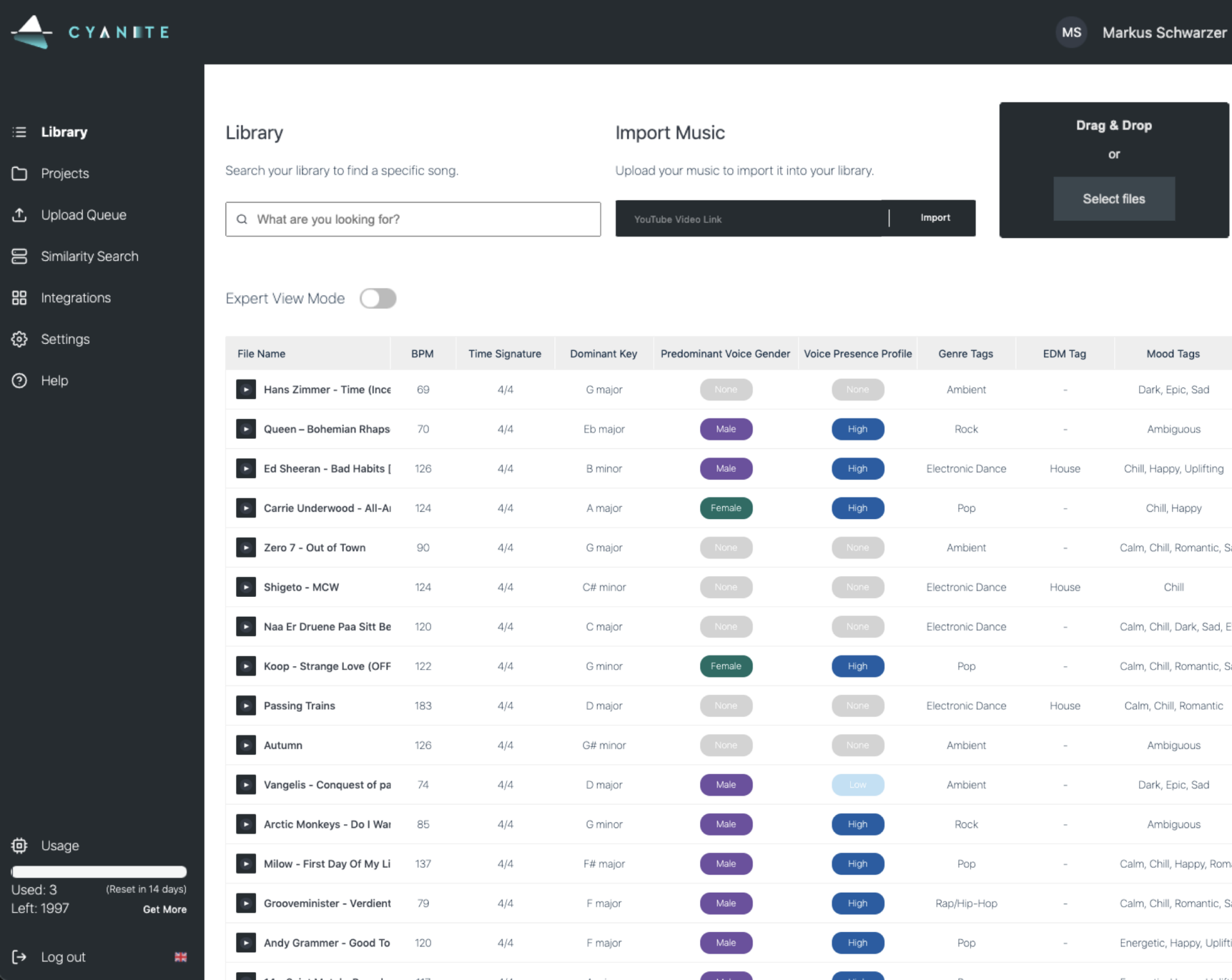

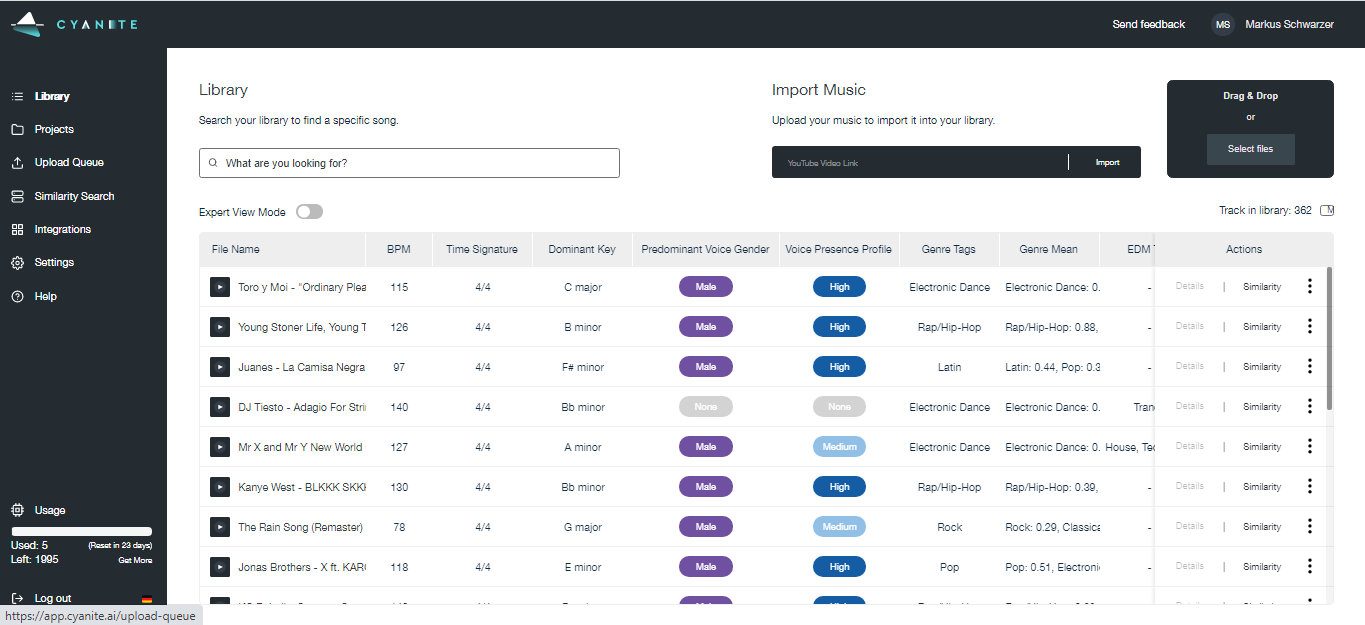

1. Analyzing hit songs and popular artists to discover new talent

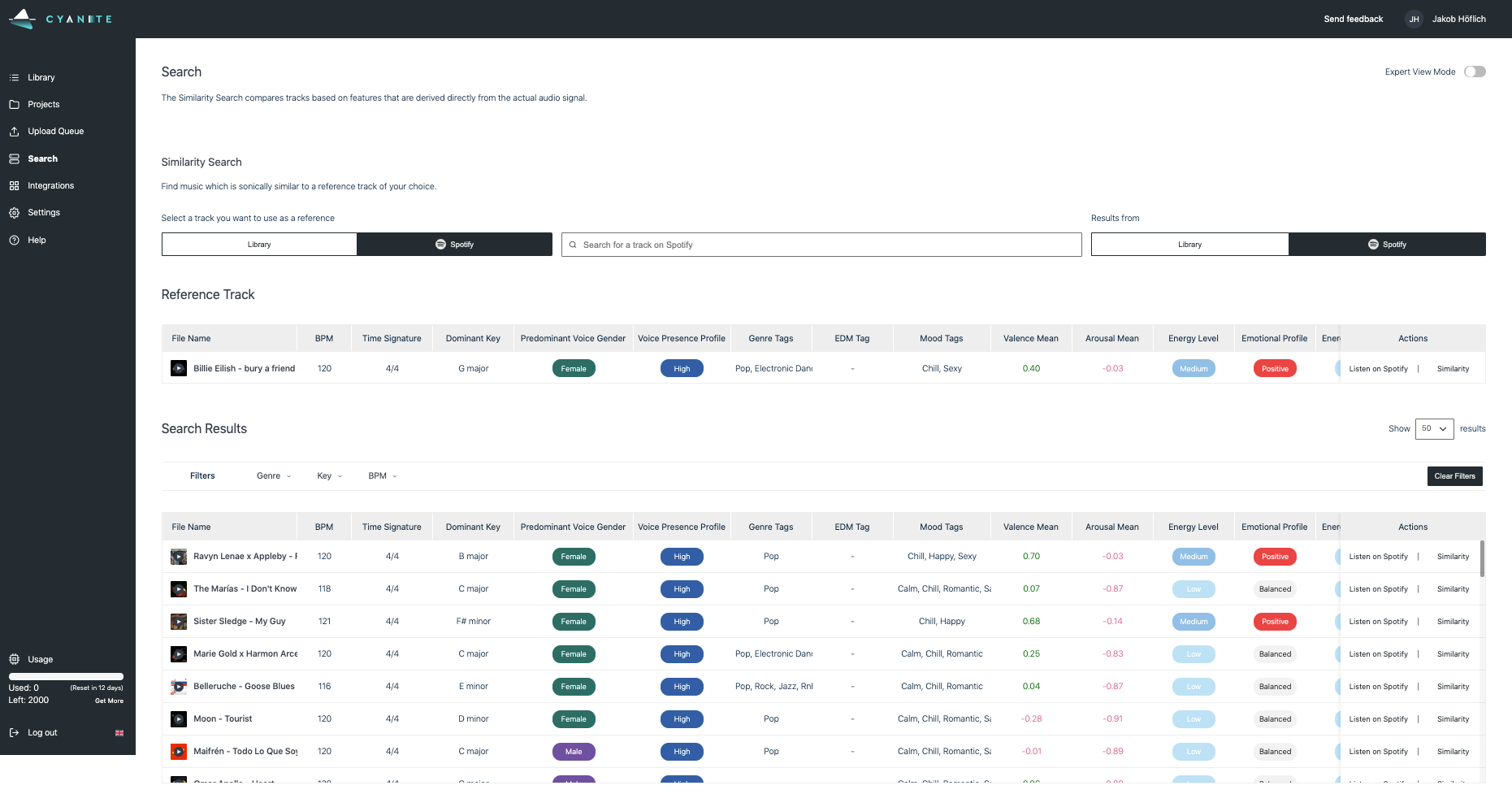

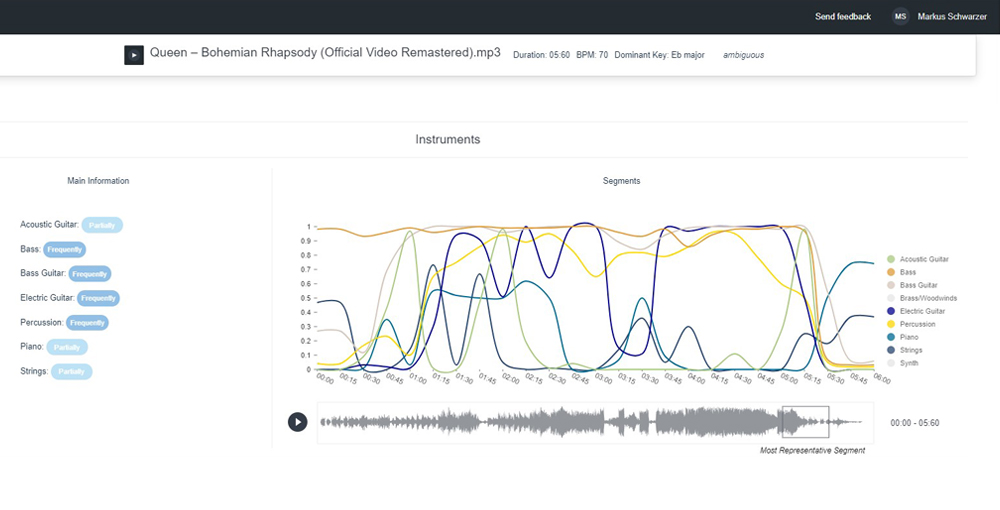

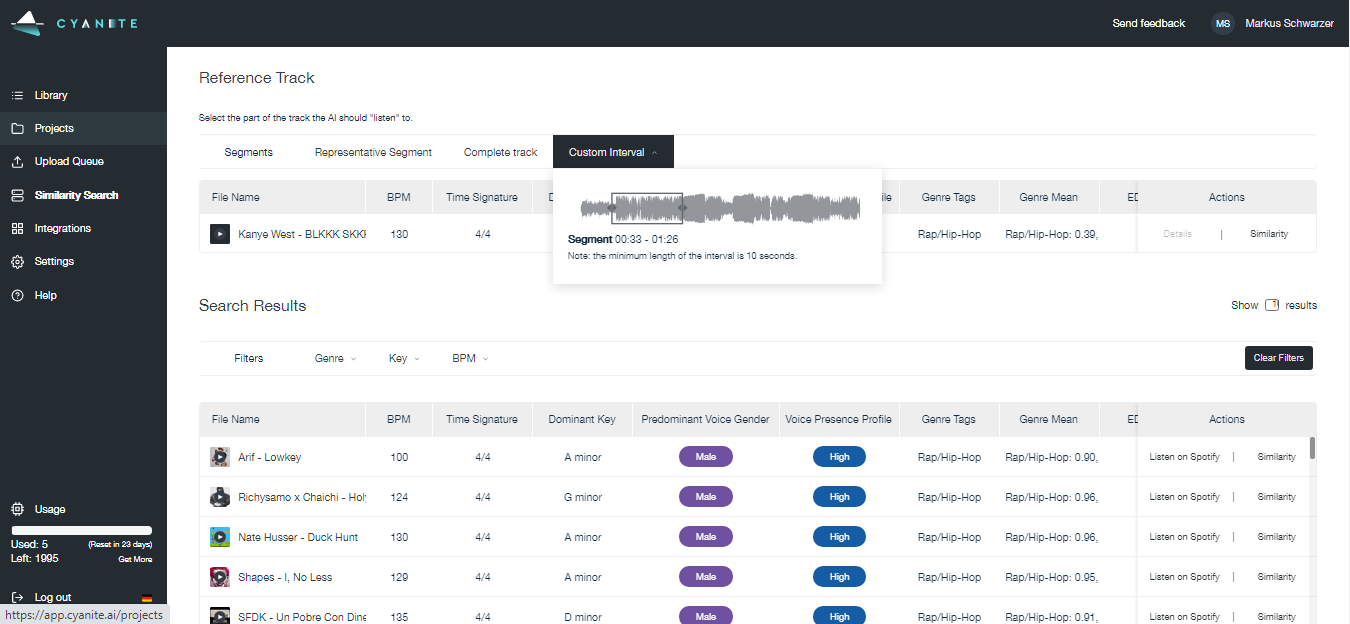

You can utilize Cyanite’s technology to analyze what’s currently working in the music industry in different markets. In particular, you can analyze popular songs and understand what makes them successful in terms of audio-based features such as genre, mood, energy level, etc. Further, you can use the Similarity Search to find tracks with a similar vibe and feel. It then helps you discover and identify new talent which may go along the same lines as current successes. Of course, that is not the whole story of making a hit but it gives you a pretty solid foundation of hitting the current zeitgeist.

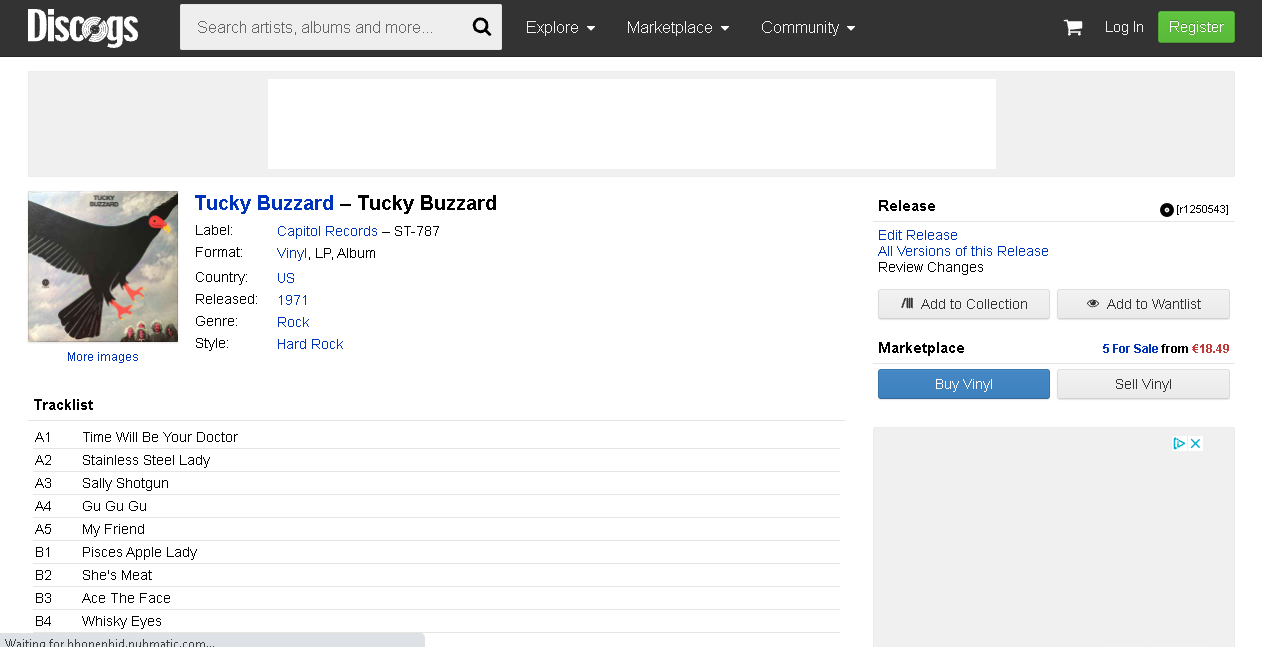

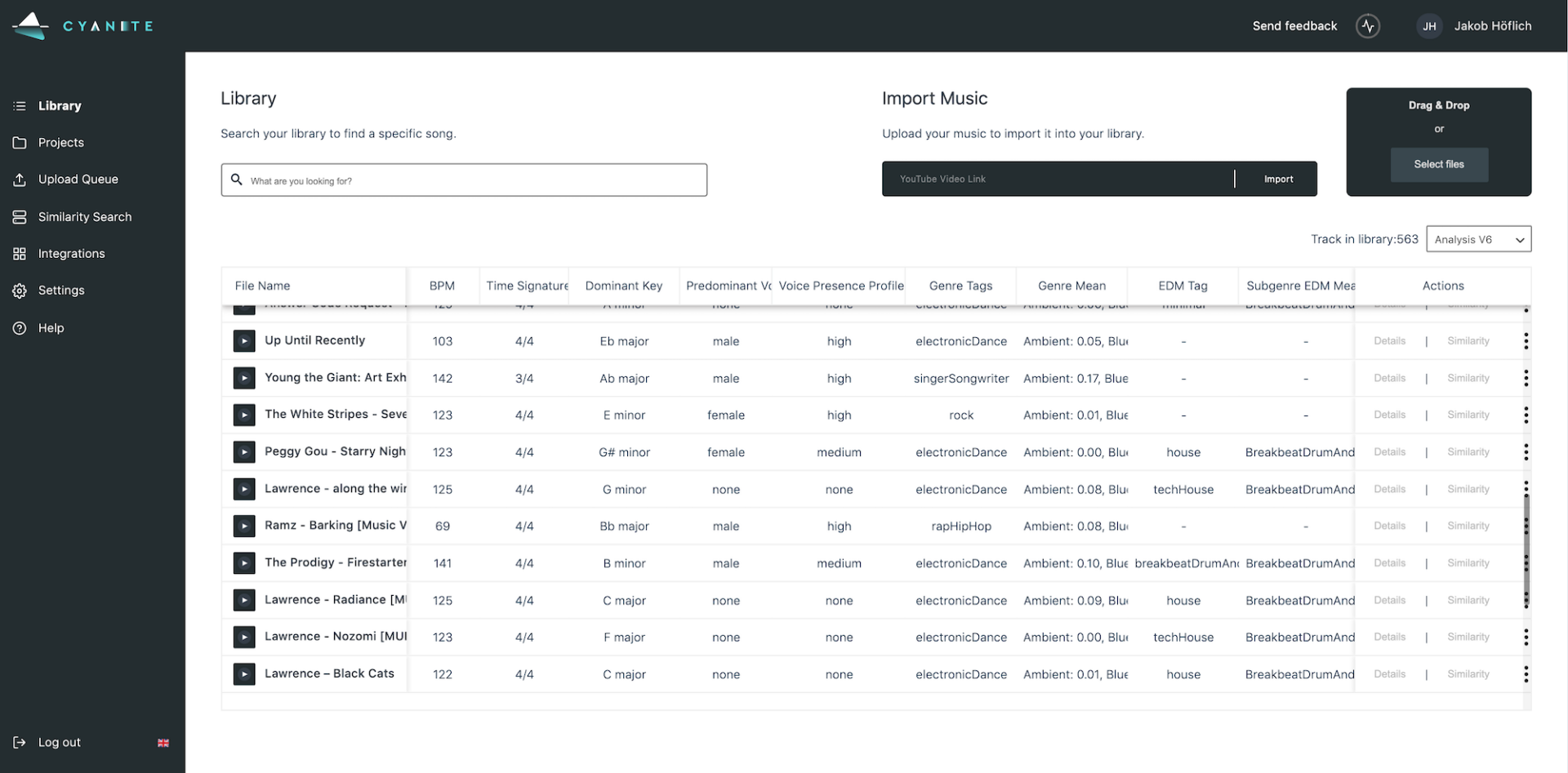

Cyanite Similarity Search interface

2. Analyzing popular playlists to predict matches

We specifically described this use case in The 3 Best Ways to Improve your Playlist Pitching with CYANITE article. You can analyze existing popular playlists such as Spotify New Music Friday or Spotify Peaceful Piano and see what songs are usually featured. This information can then help to understand the profile of the playlist which allows you to find the perfect fit and increase the chances of getting into the playlist. It also supports you in describing the songs to the editors in the way that song is accepted.

Photo at Unsplash @heidijfin

3. Analyzing marketing and sales numbers to allocate marketing budgets and support event planning

For example, record labels can analyze the performance of their artists and adjust marketing campaigns accordingly. Insight can help change the direction of the marketing campaign, choose appropriate channels, and allocate marketing budgets more effectively. In events planning, you can analyze event venues and identify the most relevant cities and venues based on past events.

4. Analyzing the artists’ styles to identify opportunities for song-plugging

If you’re a music publisher looking to get a placement on a new release of a successful artist, analyzing their previous style and matching it to your catalog of demos would be the way to go.

In this case, some audio qualities of the song are not important, as it will be eventually re-recorded. To analyze songs, a regular Cyanite functionality can be used including the Keyword Search by Weights where you can search your demo-catalog by the analysis results of the successful artist on weight-specific keywords to get the most relevant results.

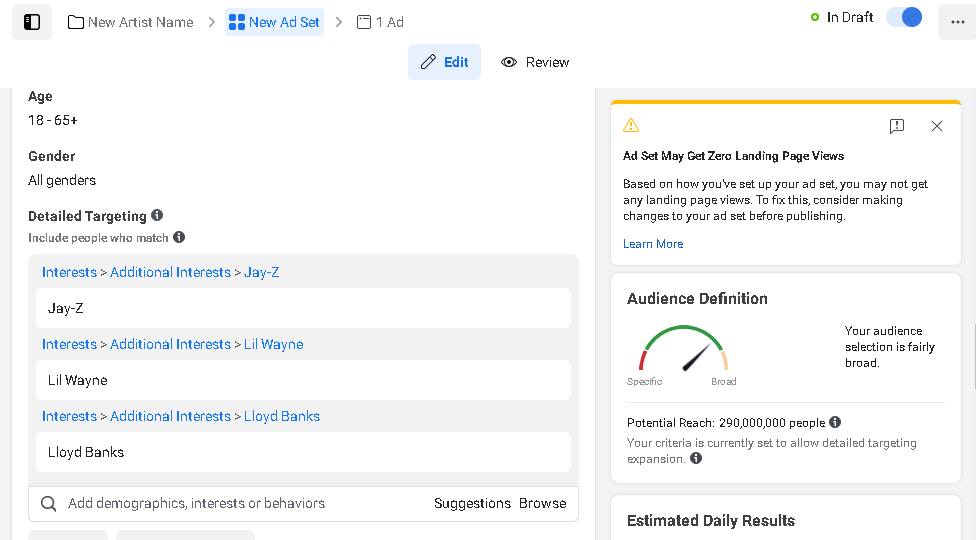

5. Analyzing fan engagement to identify audience segments

You can also analyze artists’ performance by looking into fan engagement on social media and music platforms. Through understanding the fan’s demographics, interests, and lives, you can create custom audiences for new artists or deepen fan engagement for the same artist based on past campaigns. This use case has been thoroughly described in the article How to Create Custom Audiences for Pre-Release Music Campaigns in Facebook, Instagram, and Google.

Photo at Unsplash @luukski

6. Analyzing trending music in advertising to find the most syncable tracks in the own catalog.

Sync licensing which includes finding the sync opportunities and pitching specific songs can benefit from data analysis and benchmarking. Trending music in brand advertising can be analyzed to reveal the brand’s sound. This sound will then be matched to specific songs in your catalog making a strong case in the pitch to the brand in terms of sound-brand-fit. If you are interested in this use case and how data can be used in sound branding and sync licensing check out the interview we did with Vincent Raciti from TRO – About AI in sound branding.

– Knowledge is about the past, not the future. At the knowledge layer, you only have information about what happened before. Usually, information about the present (though some tools provide access to real-time data) or the future is not taken into account. It is important to remember this limitation as past performance is no guarantee to future results.

At the stage of Information, data is structured and organized so it can be interpreted. Information requires less time to find relevant data but there is still a lot of effort involved.

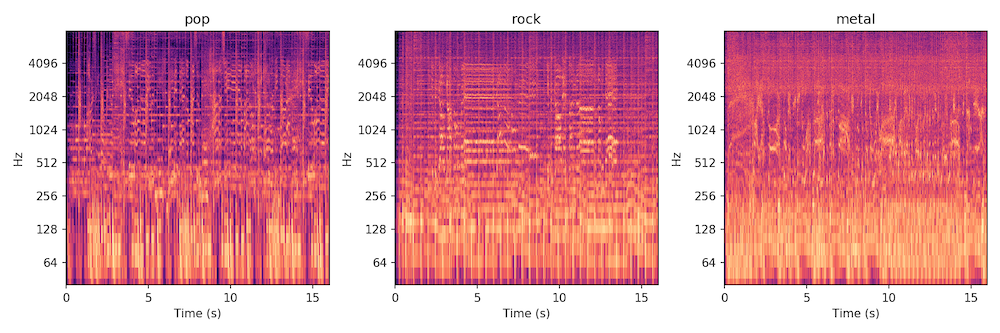

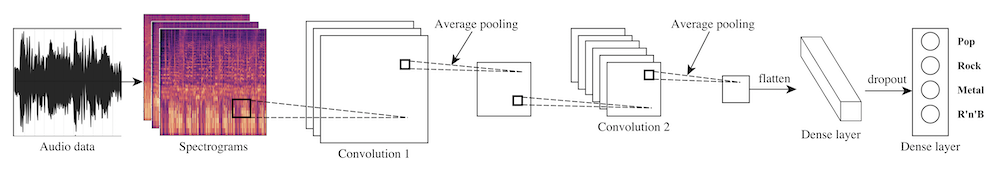

At the Knowledge level, information is put into context and can be used for benchmarking and setting expectations. This context can be historical and involve past successes or it can relate to the position of others in the market. What kind of knowledge is derived from information depends on the initial data set and on the ability to store the memories of past successes. This process of turning data into knowledge takes a whole new form when machine learning and deep learning techniques are used as they significantly speed up the process of data collection and can memorize tons of data. However, a lot of knowledge in the music industry is still derived manually by looking at the past outcomes and trying to apply them somehow in the present.

I want to analyze my music data with Cyanite – how can I get started?

If you want to get the first grip on Cyanite’s technology, you can also register for our free web app to analyze music and try similarity searches without any coding needed.