How-To: Spotify Playlist Pitching Guide with AI in 2025

It’s no secret that your song’s playlist performance can make or break your release. How likely your song is to be picked depends heavily on the quality of your pitch on Spotify for Artists. That’s why we decided to create a guide to Spotify playlist pitching with AI.

And let’s face it: Artists are great at making music, but not everyone is great at talking about it. Let Cyanite’s AI song analysis talk the talk while you walk the walk.

Three Types of Playlists

There are three types of playlists, and you can get your track on each of them. We distinguish between the following:

- Algorithmic playlists (Spotify)

- Independent playlists (bloggers & curators)

- Editorial playlists (Spotify’s curators)

We’ll focus on the last two and provide a playlist pitch template using Cyanite. Independent playlists usually have their own websites, with contact details listed somewhere on the site. Their curators host the playlists on a multitude of platforms, including Spotify.

Editorial playlists on Spotify are created by Spotify editors. You can only pitch to these playlists via the Spotify for Artists portal. For those who do not yet know how the portal works, here is a quick guide by Ditto.

What is Spotify for Artists?

If you’re going to pitch on Spotify, Spotify for Artists is the tool for you. Any artist can submit a track there so that Spotify editors can review it and include it in one of their playlists. The editorial team at Spotify accepts only unreleased tracks, so if your song is already on Spotify, you won’t be able to submit it. Therefore, before you choose the submission date on Spotify for Artists, make sure you use the pitching option first. At the same time, the editors’ review takes time, so you need to submit a song well in advance.

Tip: Submit a track on Spotify for Artists at least seven days before the release (ideally two weeks) to ensure it can be included in the Release Radar of the artist’s followers.
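To make the timing concrete, here is a minimal sketch that works backwards from a release date to the two pitch dates mentioned in the tip. The function name and the example release date are our own illustrations, not part of Spotify for Artists.

```python
from datetime import date, timedelta

MIN_LEAD_DAYS = 7           # minimum lead time named in the tip
RECOMMENDED_LEAD_DAYS = 14  # the safer two-week lead time

def pitch_deadlines(release_date: date) -> dict:
    """Return the latest and the recommended pitch dates for a release."""
    return {
        "latest": release_date - timedelta(days=MIN_LEAD_DAYS),
        "recommended": release_date - timedelta(days=RECOMMENDED_LEAD_DAYS),
    }

# Hypothetical release date, used only for illustration.
deadlines = pitch_deadlines(date(2025, 6, 20))
print("Pitch by", deadlines["recommended"], "and no later than", deadlines["latest"])
```

For a release on 20 June 2025, this gives 6 June as the recommended pitch date and 13 June as the latest one.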

How to Pitch a Track?

Spotify for Artists gives you a step-by-step guide on how to pitch the song. But as with every platform, some tips and tricks can increase your chances of getting onto the playlist.

Here’s where Cyanite comes into play: its AI song analysis improves the quality of your pitch and makes the process smoother and more productive.

Our tips on how to pitch a playlist using Cyanite’s AI include:

- Identify the strongest emotions of the song;

- Find the right words for the Spotify song description;

- Find the most suitable playlists with Cyanite’s Playlist Matching.

Let’s explore these steps in detail.

Tip 1: Identify the strongest emotions of the song.

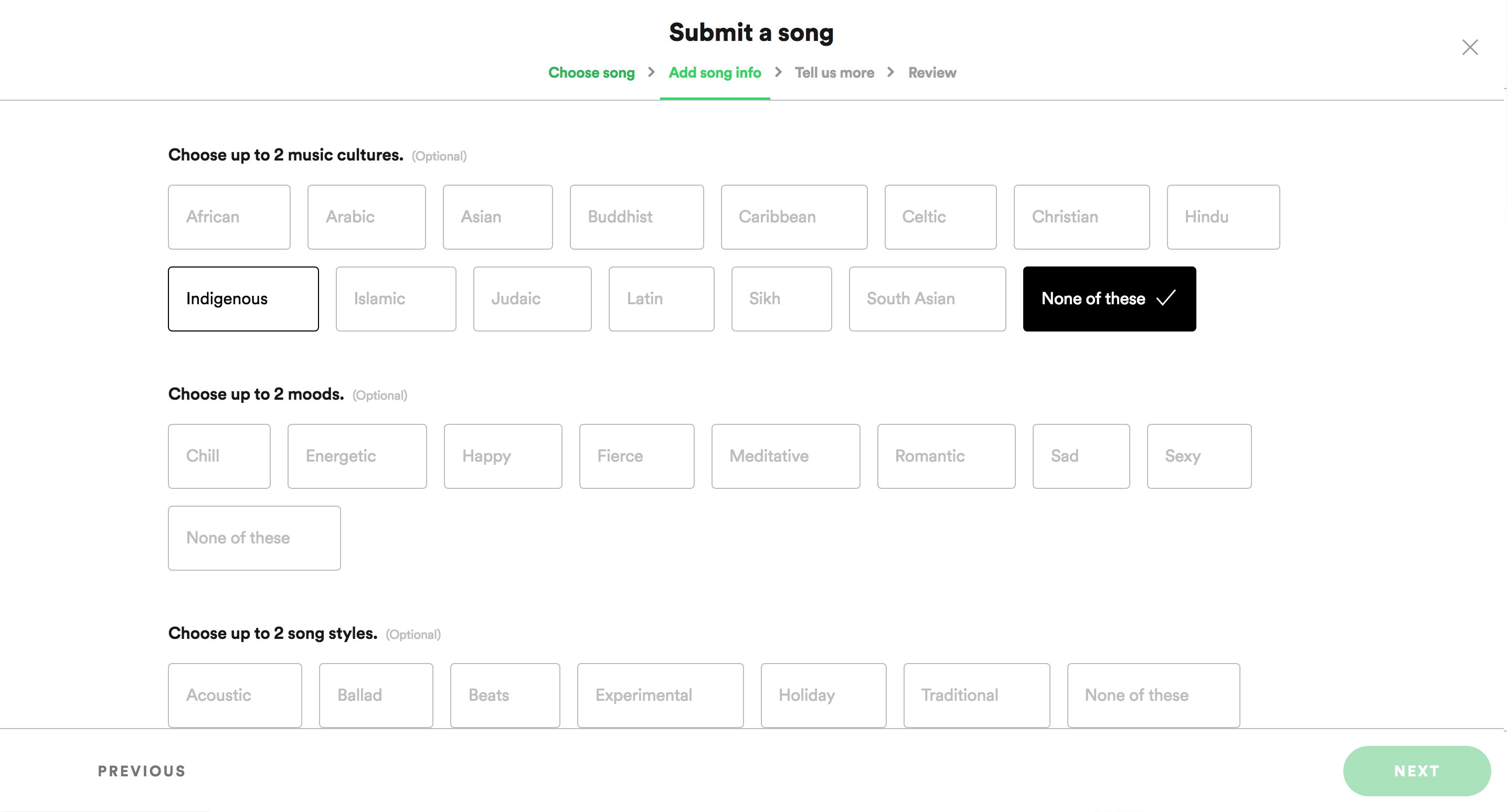

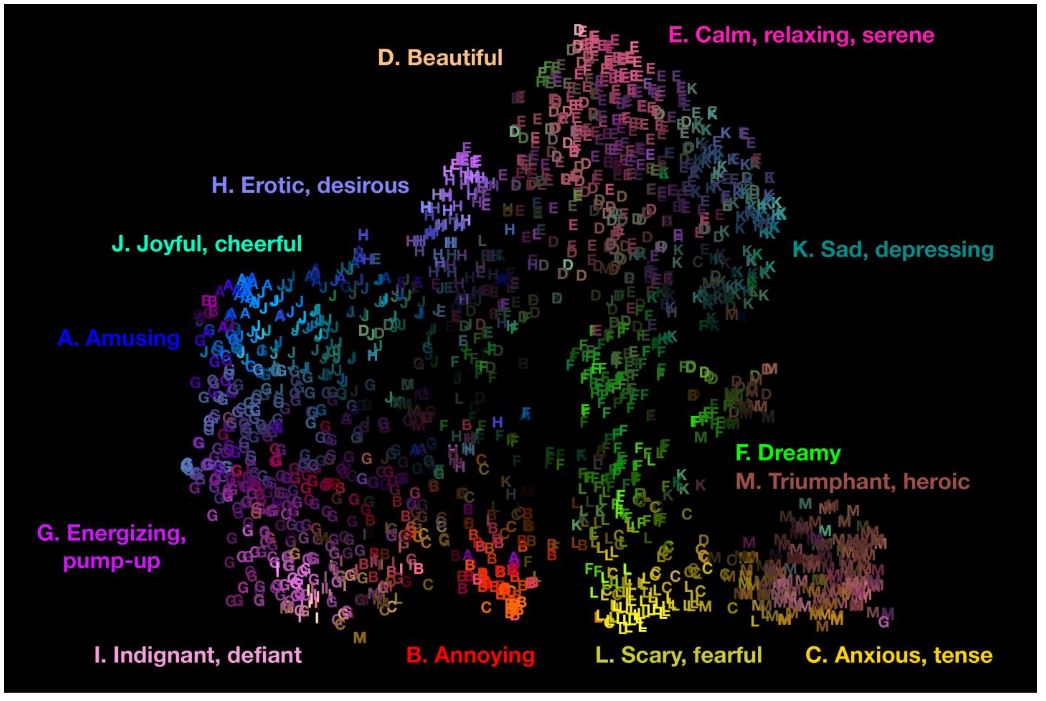

The Spotify for Artists portal lets you select the two emotions that classify your song best. Because you are limited to only two, it is very important to make the right choice here. The emotional and subjective nature of music makes this task particularly difficult.

Spotify for Artists pitching

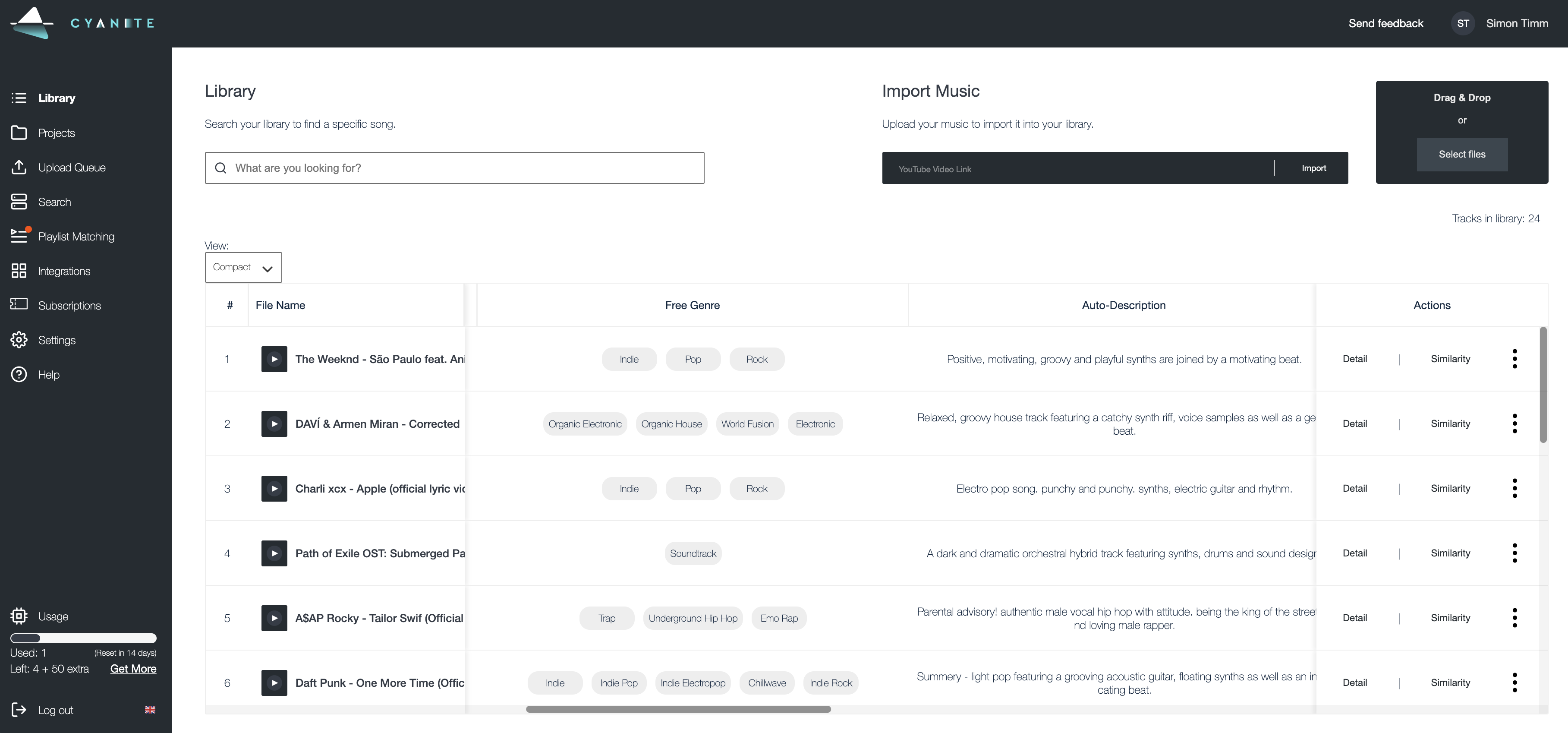

Here is how you do it with Cyanite’s AI song analysis. Upload your song file as MP3 or via a YouTube link into your library on Cyanite. The song will be analyzed and tags such as genre, mood, energy, or instruments will be available. Also, in Cyanite’s Detail View, you will see how the moods, genres, energy levels, or instruments develop over the duration of the song.
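If you copy the mood tags and their scores out of the analysis, picking the two strongest ones takes only a few lines. The sketch below assumes you have typed the scores into a plain dictionary yourself; the mood names and numbers are made up for illustration and do not represent Cyanite’s actual export format.

```python
def top_two_moods(mood_scores: dict[str, float]) -> list[str]:
    """Return the two moods with the highest scores, strongest first."""
    ranked = sorted(mood_scores.items(), key=lambda item: item[1], reverse=True)
    return [mood for mood, _ in ranked[:2]]

# Hypothetical scores, entered by hand from a song analysis.
scores = {"energetic": 0.82, "uplifting": 0.64, "romantic": 0.31, "calm": 0.08}

strongest = top_two_moods(scores)
print(strongest)
```

With the example scores, the two moods to carry over to the pitch form would be "energetic" and "uplifting".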

Here is a chart that shows how the emotions in Spotify for Artists map to the moods on Cyanite.

Spotify/Cyanite Moods Translation

If you manage to find emotions that correctly describe your track, it will save time for the editors and you will make a good first impression. This is confirmed by the professionals in the music industry, who often have to deal with tons of music releases.

Weston McGowen, artist manager at Equal Songs, used Cyanite when submitting songs to Spotify for Artists. Weston remembers that choosing emotions has always been one of the most difficult parts for him.

He says: “The objective view of Cyanite’s AI helps a lot.”

Additionally, some of Spotify’s playlists are mood-based, so mood match is the first criterion editors look at. Stephen Cirino emphasizes the relevance of emotion selection in his article on the pitching process:

“Choosing the right moods to match your song can help get your music in front of curators for mood-focused playlists such as Mood Booster, Dreamy Vibes, Sad Indie, and more.” So Cyanite’s mood tags might be the most important tags to pay attention to when playlist pitching with AI.

Additionally, you can choose and match genres, sub-genres, and instruments using Cyanite. Here is the screenshot of the song analysis with all the data:

Cyanite Analysis of genre & auto-descriptions

Tip 2: Find the right words for the song description.

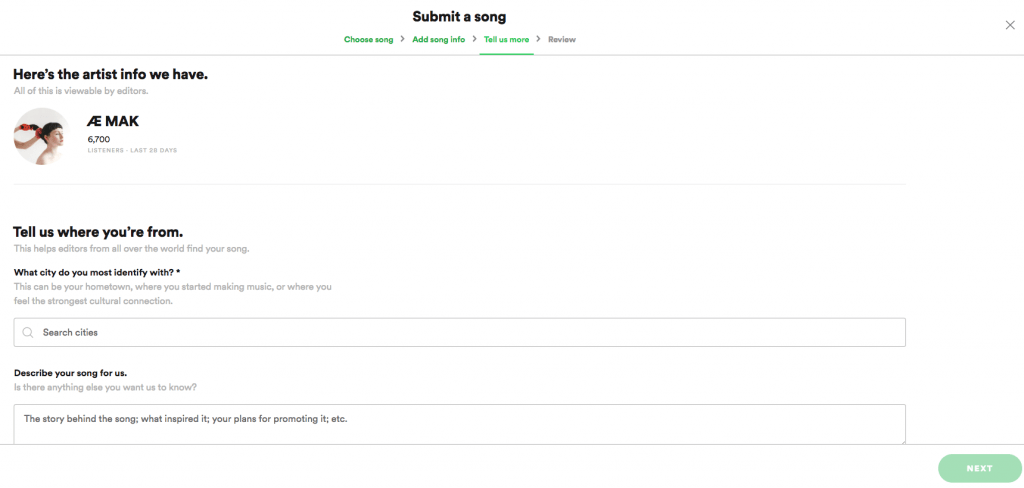

According to most Spotify playlist pitching guides, and to the editors themselves, the song description is usually the most important part of the pitch. In 500 characters, you need to describe what your song is about and why it is a good match for one of Spotify’s playlists.

Yes, it is all about the context. That big blank space where you describe the song to the editors is the place for everything that gives them extra background information about the song. In the end, it makes their work easier and helps them build an emotional connection to the music.

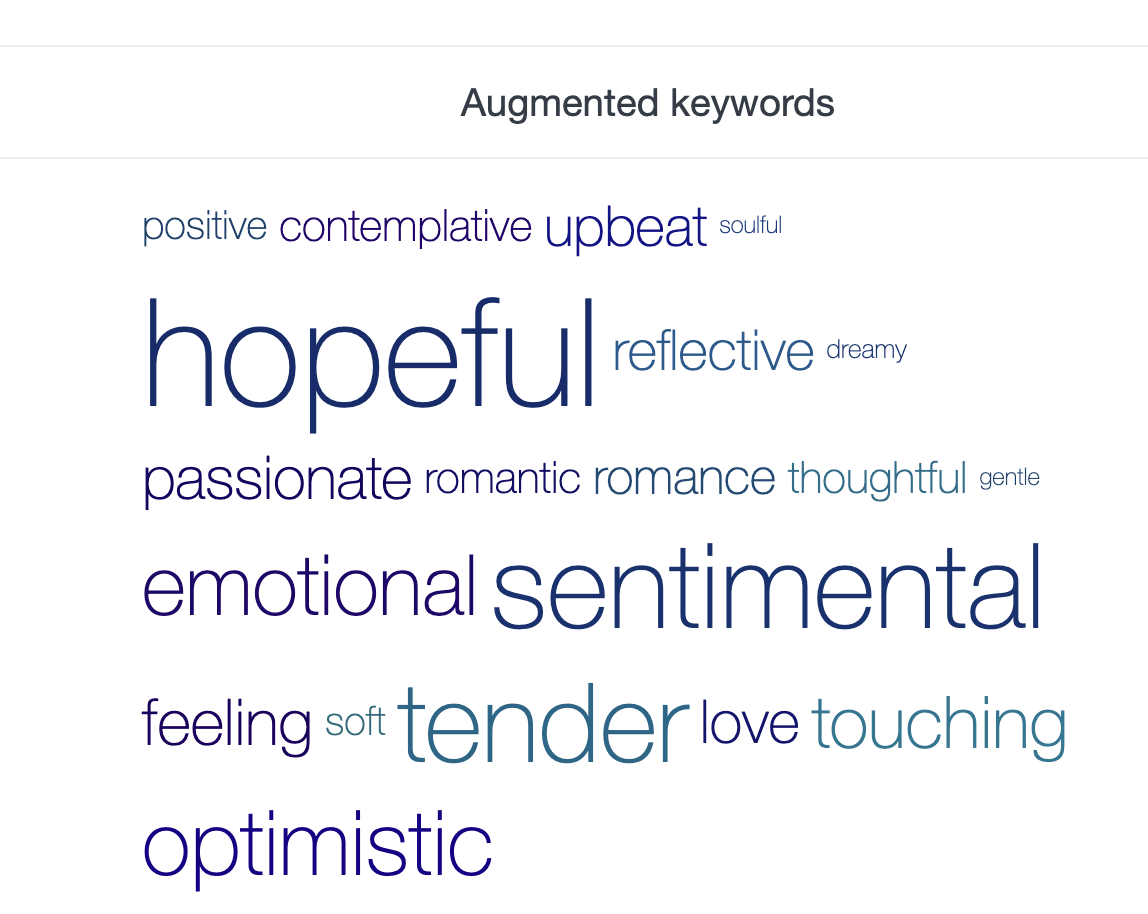

For that, Cyanite’s state-of-the-art Auto-Descriptions and Augmented Keywords are a great choice. Elaborate full-text descriptions, plus a word pool of 1,500 music-describing terms that covers genres and moods as well as more abstract terms such as contexts, situations, use cases, and activities, solve the blank page problem and make sure the description is bang on.

We give more detailed instructions and Spotify playlist pitching examples in the article: How to Write Press Releases and Music Pitches with Cyanite.

Spotify for Artists text description

The text pitch should present you as an artist and also include details about the song: your artistic approach, inspiration, collaborations, credits, and future plans can be included here. You can also mention which playlist might be a good fit for the track.

AWAL, an artist service offered by Sony Music, writes: “It also requires self-classification, which might offer additional value to a DSP that hopes to match a listener’s mood with the appropriate soundtrack, as quickly and accurately as possible.”

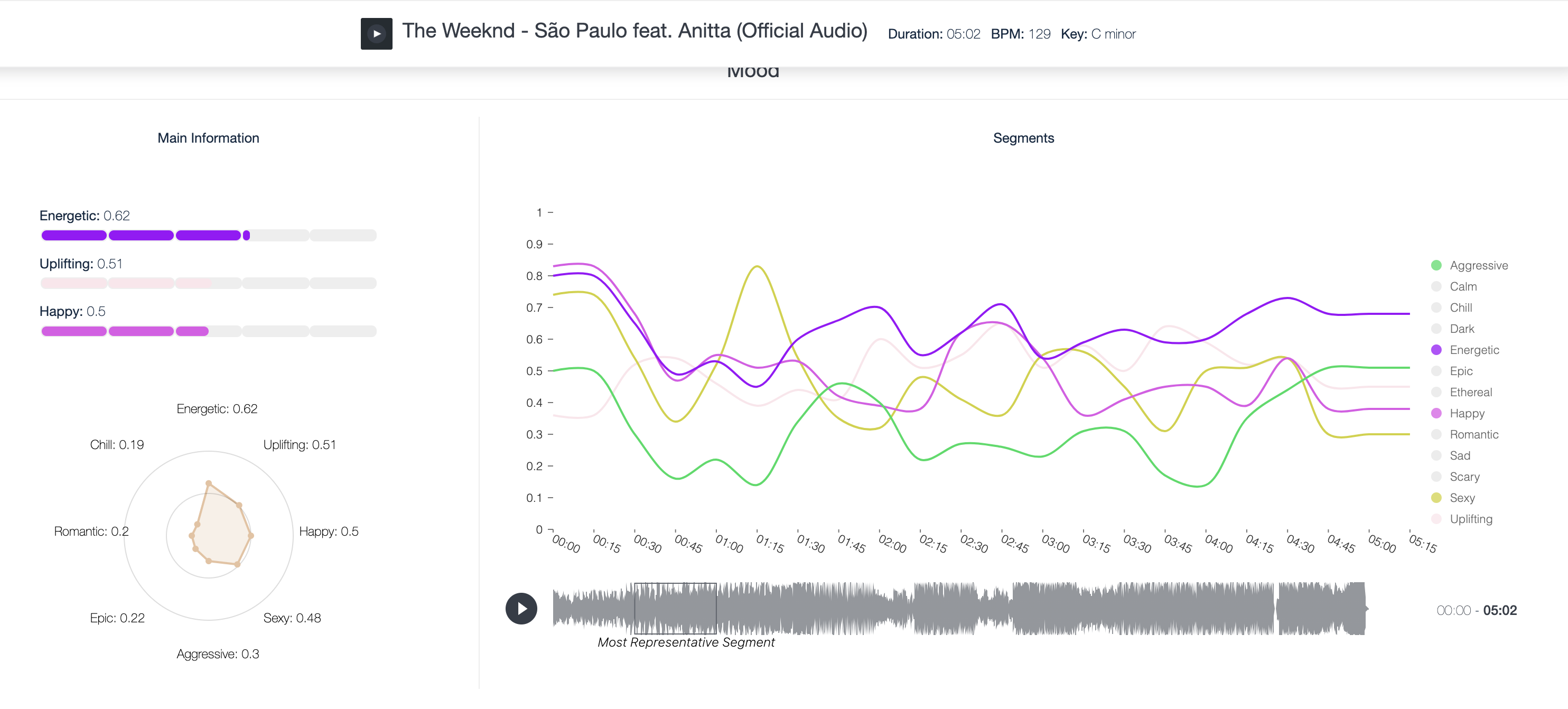

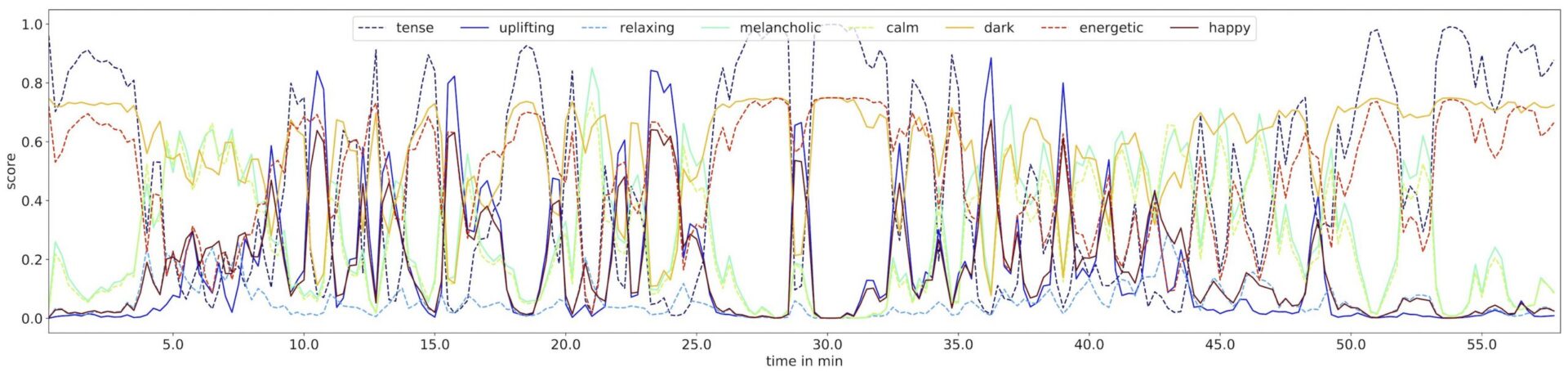

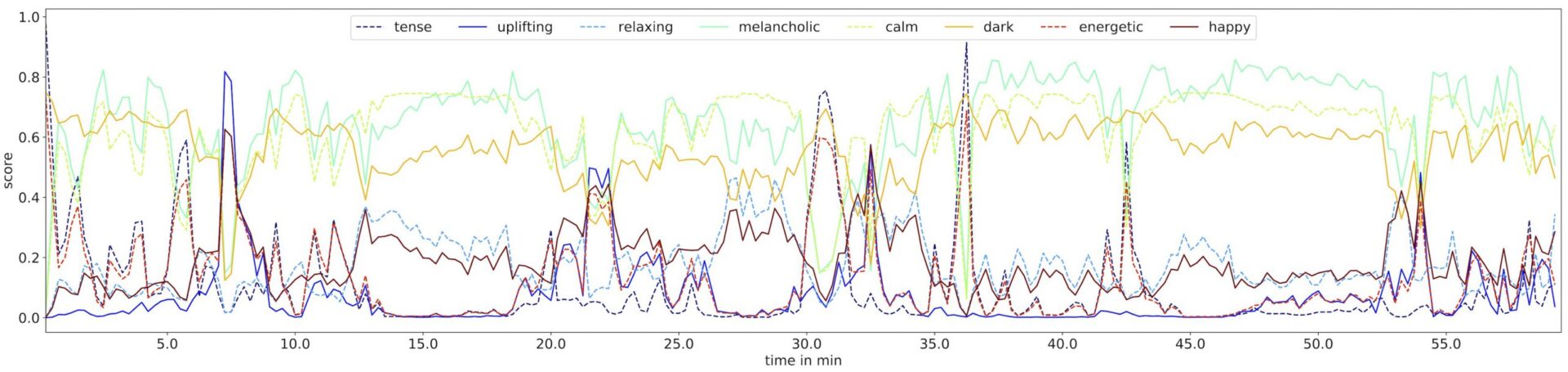

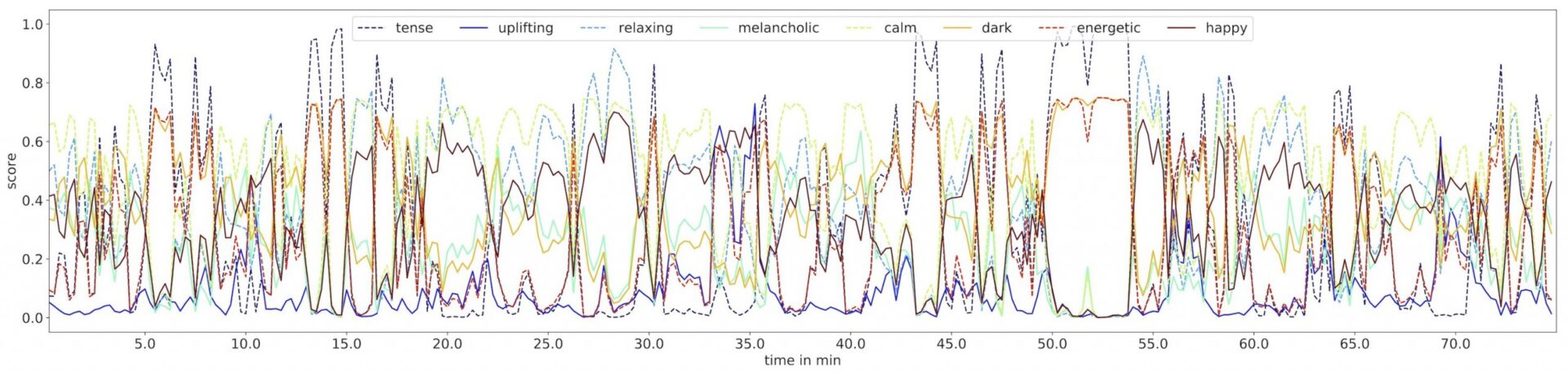

A big part of how listeners experience a song is the way it develops and what turns it takes over the duration of the track. As the name suggests, the Dynamic Emotion Analysis does more than show you what moods a song is made of. It maps the most characteristic peaks and lows and all developments in between. This gives you the data-supported vocabulary to describe certain dynamics of your song and the fine little details that make it stand out. See the screenshot below.

Cyanite detail view with dynamic emotion analysis
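To illustrate how such a mood timeline can be turned into pitch vocabulary, here is a rough sketch that finds the dominant mood in each section of a track. It assumes the timeline is a hand-made list of (timestamp in seconds, mood scores) pairs; the structure, the one-minute section length, and all values are hypothetical and not Cyanite’s actual data format.

```python
from collections import defaultdict

def dominant_mood_per_section(timeline, section_seconds=60):
    """Map each section start (in seconds) to its strongest mood.

    timeline: list of (timestamp_seconds, {mood: score}) pairs.
    """
    totals = defaultdict(lambda: defaultdict(float))
    for timestamp, scores in timeline:
        section = int(timestamp // section_seconds)
        for mood, score in scores.items():
            totals[section][mood] += score  # sum scores within the section
    return {
        section * section_seconds: max(moods, key=moods.get)
        for section, moods in sorted(totals.items())
    }

# Hypothetical timeline, one sample every 30 seconds.
timeline = [
    (0, {"energetic": 0.9, "uplifting": 0.5}),
    (30, {"energetic": 0.8, "uplifting": 0.6}),
    (60, {"energetic": 0.6, "romantic": 0.7}),
    (90, {"energetic": 0.7, "romantic": 0.8}),
    (120, {"energetic": 0.9, "sensual": 0.4}),
]

sections = dominant_mood_per_section(timeline)
print(sections)
```

In this made-up example, the first minute is dominated by "energetic", the second by "romantic", and the third returns to "energetic", which is the kind of arc you can then describe in your own words.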

Pro Tip: Cyanite Mood Analysis + LLM

Feed a screenshot of the mood analysis chart to an LLM of your choice and ask it to describe how the song’s emotional dynamic develops over its duration, to get further inspired. Here’s an example for the song above:

Opening Section (0:00 – ~1:00):

The track kicks off with a strong energetic presence, immediately drawing listeners in with its vibrant intensity. This high-energy start is balanced with hints of an uplifting undertone, giving the introduction a bright and driving quality. The dynamic nature makes it an excellent opener or mid-playlist highlight.

Development and Contrast (~1:00 – ~2:30):

As the song progresses, the energy remains prominent but begins to interact with subtler emotional elements. Uplifting tones shift slightly to make space for a romantic and epic feel, adding depth and intrigue. These layers create a dynamic ebb and flow, ideal for keeping listeners engaged during transitions between more contrasting tracks in a playlist.

Peak and Groove (~2:30 – ~4:00):

In this section, the energy peaks, and the track’s balance of movement and intensity shines. There’s an underlying sensual and smooth vibe, which contrasts beautifully with its punchy rhythm. This moment is perfect for playlists centered on late-night energy or danceable grooves with a touch of sophistication.

Closing Section (~4:00 – End):

The final segment maintains its energetic drive while reintroducing uplifting tones, giving the track a satisfying resolution. The consistent rhythm ensures a strong finish, making it suitable as a climactic point in a playlist or as a segue into lighter, more reflective tracks.

Additionally, to write a text pitch you can use Cyanite’s Auto-Description and Augmented Keywords. These are the keywords that characterize a song in addition to other data on moods, genre, energy level, etc.

Tom Odell’s “Grow Old With Me” analysis – Augmented keywords from Cyanite

You can use these keywords to write a compelling text pitch or just copy and paste them into an LLM of your choice. With some human editing, current LLMs can produce compelling song descriptions and pitches. We tried using a “product description” option, and here is the result for Tom Odell’s “Grow Old With Me”.

Tom Odell’s soothing new song is the perfect soundtrack for any emotional situation. It reminds you that beauty, love, and joy are always close by and will always be a part of your life. The acoustic guitar and piano melodies help create a calm and relaxed atmosphere where you can’t help but feel comfortable.
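If you would rather not retype the request every time, the copy-and-paste step can be sketched as a small helper that turns a keyword list into a prompt. The function, the prompt wording, and the keyword list are our own illustrations; the helper only builds the text and does not call any particular LLM.

```python
def build_pitch_prompt(artist, title, keywords, max_sentences=3):
    """Assemble a plain-text prompt asking an LLM for a short song description."""
    keyword_list = ", ".join(keywords)
    return (
        f"Write a song description of at most {max_sentences} sentences "
        f'for "{title}" by {artist}. '
        f"Base it on these keywords: {keyword_list}. "
        "Do not invent facts about the artist."
    )

# Illustrative keywords, not the actual Augmented Keywords for this song.
keywords = ["soothing", "calm", "relaxed", "acoustic guitar", "piano"]

prompt = build_pitch_prompt("Tom Odell", "Grow Old With Me", keywords)
print(prompt)
```

Paste the printed prompt into the LLM of your choice, then edit the result by hand before using it in a pitch.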

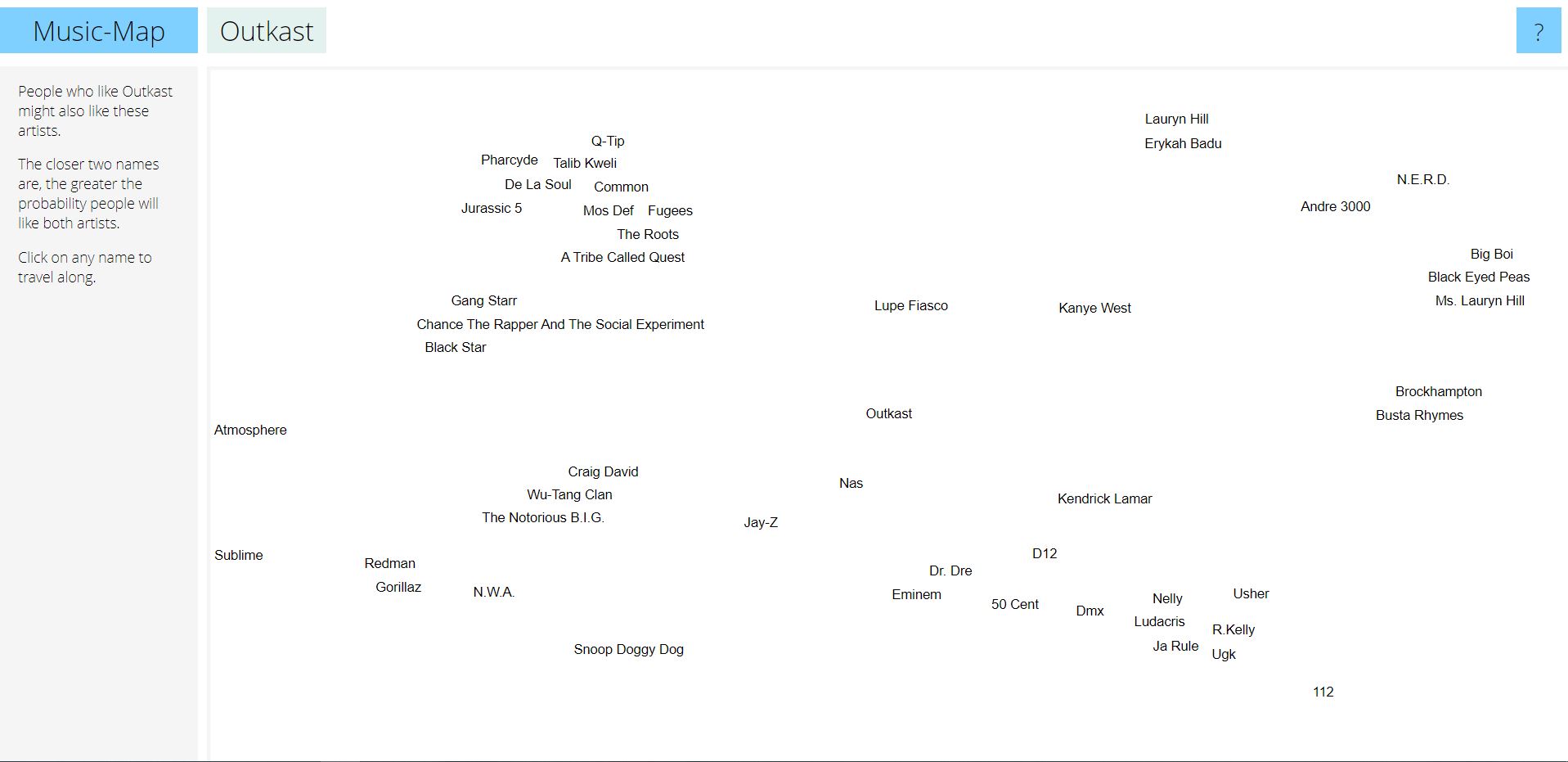

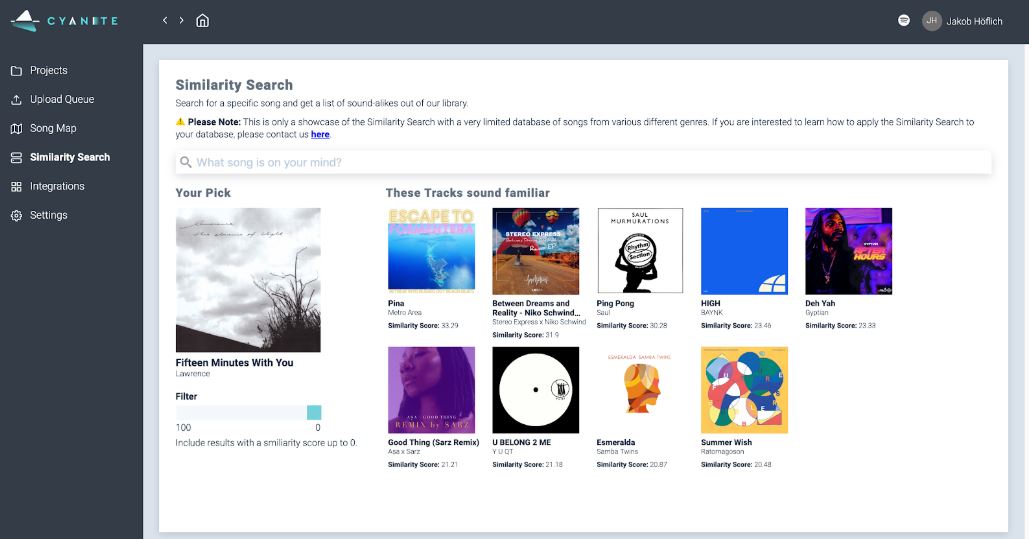

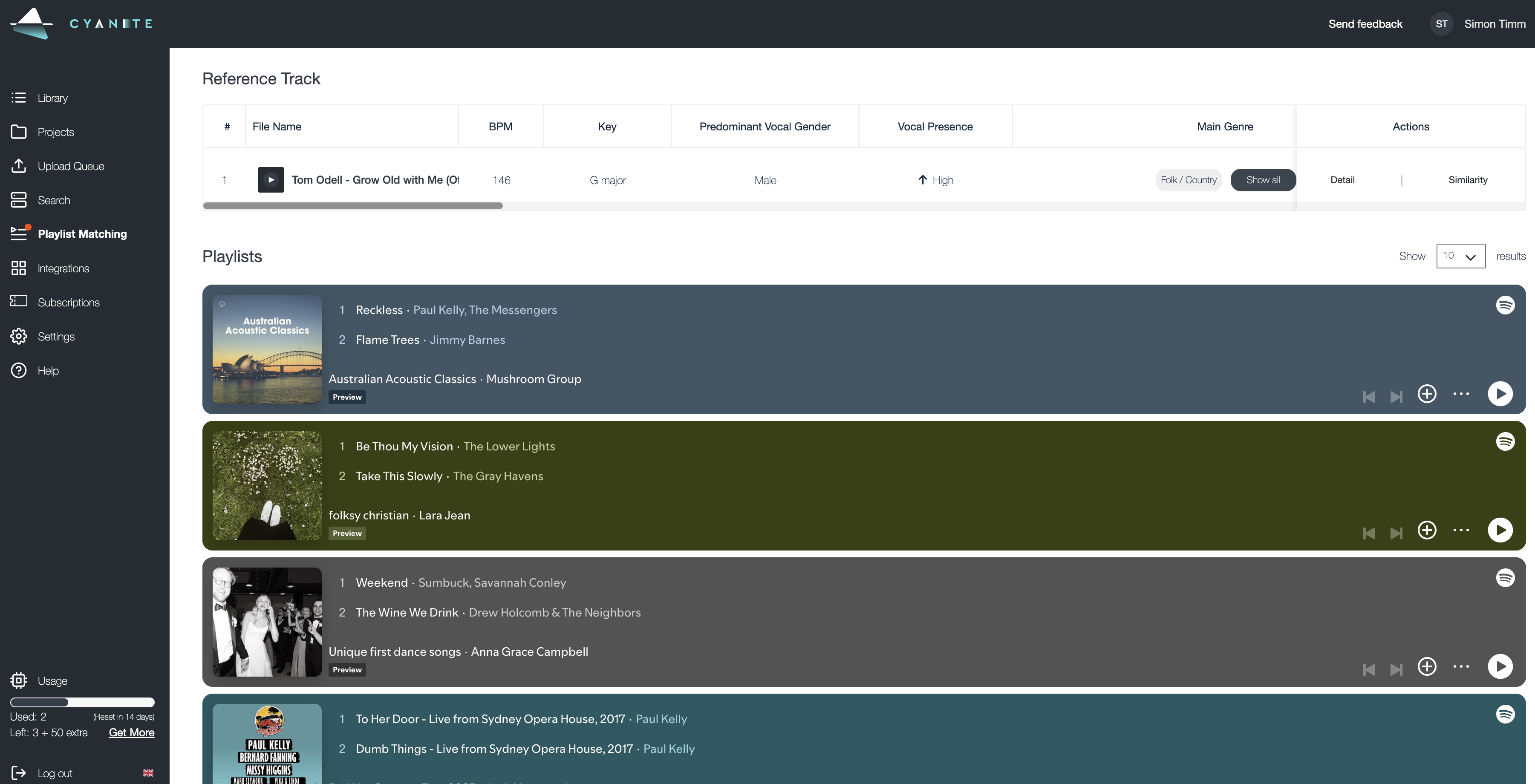

Tip 3: Filter out the most suitable playlists.

When you click on “Playlist Matching” in the navigation bar and select your song, you will get instant Spotify playlist recommendations, both editorial and independent.

Cyanite’s Playlist Matching Tab

Browse through up to 20 playlists and find out which one matches the vibe of your song best. Editorial playlists are marked with a little Spotify logo in the top left corner and list “Spotify” as the editor. To pitch to those playlists, please use the playlist pitching tool in Spotify for Artists.
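If you keep notes on the recommendations, sorting them by pitching route is a simple filter on the editor field. The sketch below assumes you have written the matches down as a list of dictionaries with "name" and "editor" keys; this structure and the independent entries are hypothetical.

```python
def split_by_editor(playlists):
    """Separate editorial playlists (editor is "Spotify") from independent ones."""
    editorial = [p for p in playlists if p["editor"] == "Spotify"]
    independent = [p for p in playlists if p["editor"] != "Spotify"]
    return editorial, independent

# Hand-written notes; the independent playlists and curators are invented.
matches = [
    {"name": "Mood Booster", "editor": "Spotify"},
    {"name": "Late Night Indie Finds", "editor": "curator_jane"},
    {"name": "Sad Indie", "editor": "Spotify"},
    {"name": "Bedroom Pop Corner", "editor": "tapehiss"},
]

editorial, independent = split_by_editor(matches)
print("Pitch via Spotify for Artists:", [p["name"] for p in editorial])
print("Contact the curator directly:", [p["name"] for p in independent])
```

The first list goes through the Spotify for Artists pitch form, while the second is your outreach list for independent curators.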

For everything else, you can often google the curator’s nickname and find their profiles on other social media platforms to get in touch about your release there.

How to best approach these indie curators is well described here and for more great tips on how to promote your music check out this article by Studio Frequencies.

Will I Be Picked?

It is impossible to tell if your track is going to be picked by Spotify. The waiting time to find out is usually from two weeks to a month. If after that time you realize that nothing is happening, don’t worry. Sometimes the track is picked later when it starts to gain traction and listens on Spotify.

That’s why it is important to continue your promotional efforts after the release and use other platforms including social media. We explain why using ads and social media outreach is so important for Spotify editors in the article: How to Create Custom Audiences for Pre-Release Music Campaigns in Facebook, Instagram, and Google.

What's Next?

Given the continuous streaming hype, mastering the art of playlist pitching seems inevitable.

Nevertheless, because playlists have such an influence on the music industry, it’s a topic that needs critical discussion. In addition to our guide, we recommend these readings on Spotify curatorial practices and playlisting on Musically and BestFriendsClub.

Ultimately, success with playlist pitching comes from finding the right fit and putting work into correctly tagging the song and writing a song description. You can do that manually, or you can use tools like Cyanite if you’re tired of listening to the same track over and over again or if you have large volumes of music to pitch.

Use Cyanite for playlist pitching with AI

If you don’t have a web app account yet, you can register for our free web app below to analyze music and try our Playlist Matching.