How Cyanite protects your sensitive audio: privacy-first workflows for every catalog

Looking for secure AI music analysis? Discover Cyanite’s integration options.

For many music teams, a significant hesitation about AI analysis is not about its capability or quality. It’s about trust. When teams explore AI-driven tagging or search, the conversation almost always leads to the same question: What happens to our audio once it leaves our system?

At Cyanite, we’ve built our technology around that concern from the very beginning. Rather than offering a single security promise, we provide multiple privacy-first workflows designed to meet different levels of sensitivity and compliance. This gives teams the flexibility to choose how their audio is handled, without compromising on tagging quality or metadata depth.

This article outlines the three privacy models Cyanite offers, explains how each one works in practice, and helps you decide which setup best fits your catalog and internal requirements.

Why audio privacy matters in modern music workflows

For those who manage it, audio represents creative identity, contractual responsibility, and, often, years of human effort. It’s not just another data type. Sending that material outside an organization can feel risky, even when the technical safeguards are strong and the operational benefits are clear.

Teams that evaluate our services often raise concerns about protecting unreleased material, complying with licensing agreements, and maintaining long-term control over how their catalogs are used. They look for assurances around:

- Safeguarding confidential or unreleased content

- Complying with NDAs and contractual obligations

- Meeting internal legal or security standards

- Maintaining full ownership and control

These are not edge cases. They reflect everyday realities for publishers, film studios, broadcasters, and music-tech platforms alike. That’s why Cyanite treats privacy as a core design principle.

Security option 1: GDPR-compliant processing on secure EU servers

For many organizations, strong data protection combined with minimal operational complexity is the right balance. In Cyanite’s standard setup, all audio is processed on secure servers located in the EU and handled in full compliance with GDPR.

In practical terms, this means:

- Audio files are never shared with third parties.

- Songs can be deleted at any time.

- Ownership and control of the music always remain with the customer.

This model works well for publishers, production libraries, sync platforms, and music-tech companies that want to scale tagging and search workflows without maintaining their own infrastructure. For most catalogs, this level of protection is both robust and sufficient.

That said, not every organization is able to send audio outside its own environment, even under GDPR. For those cases, Cyanite offers additional options.

Learn more: See how AI music tagging works in Cyanite and how it supports large catalogs.

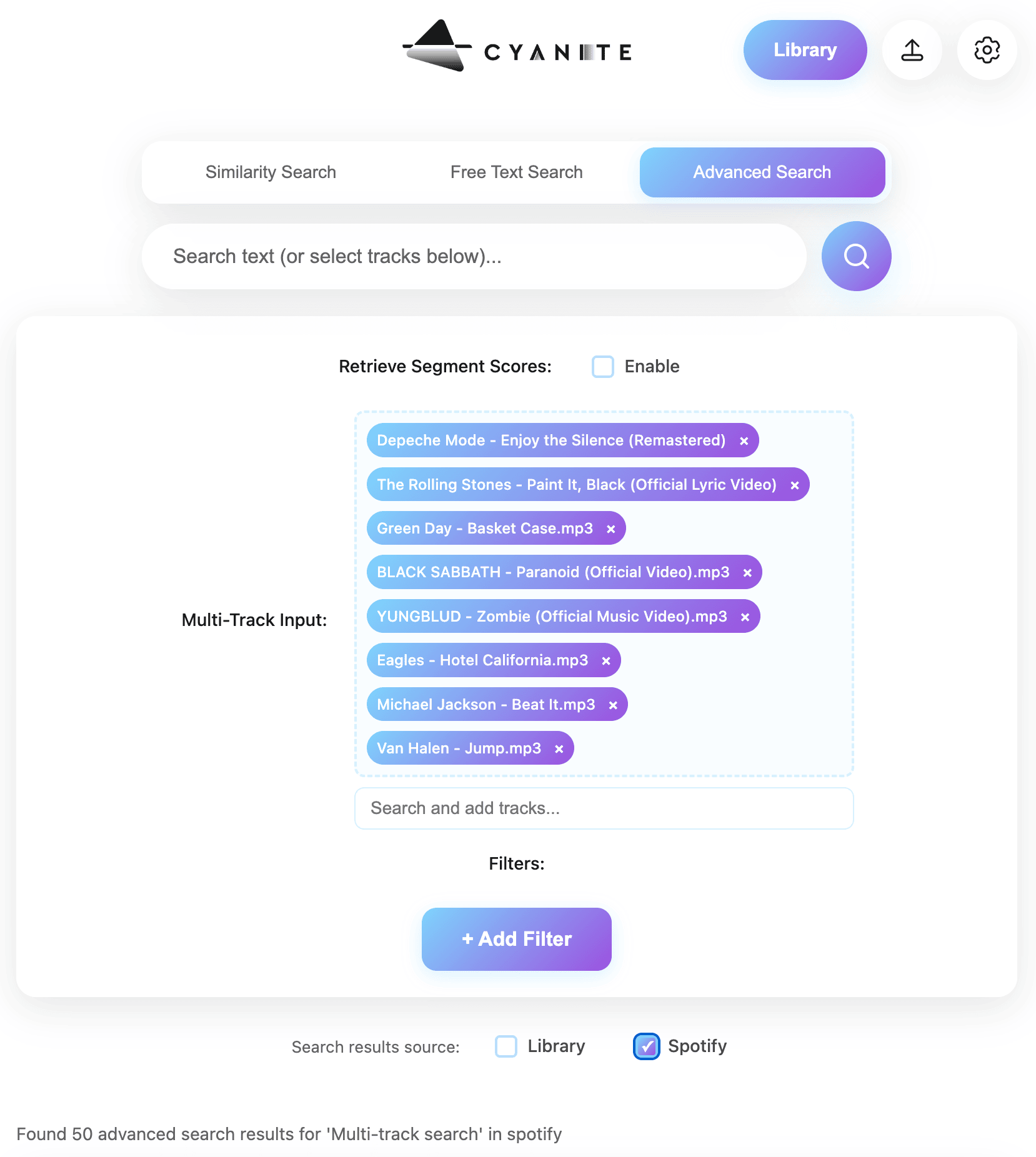

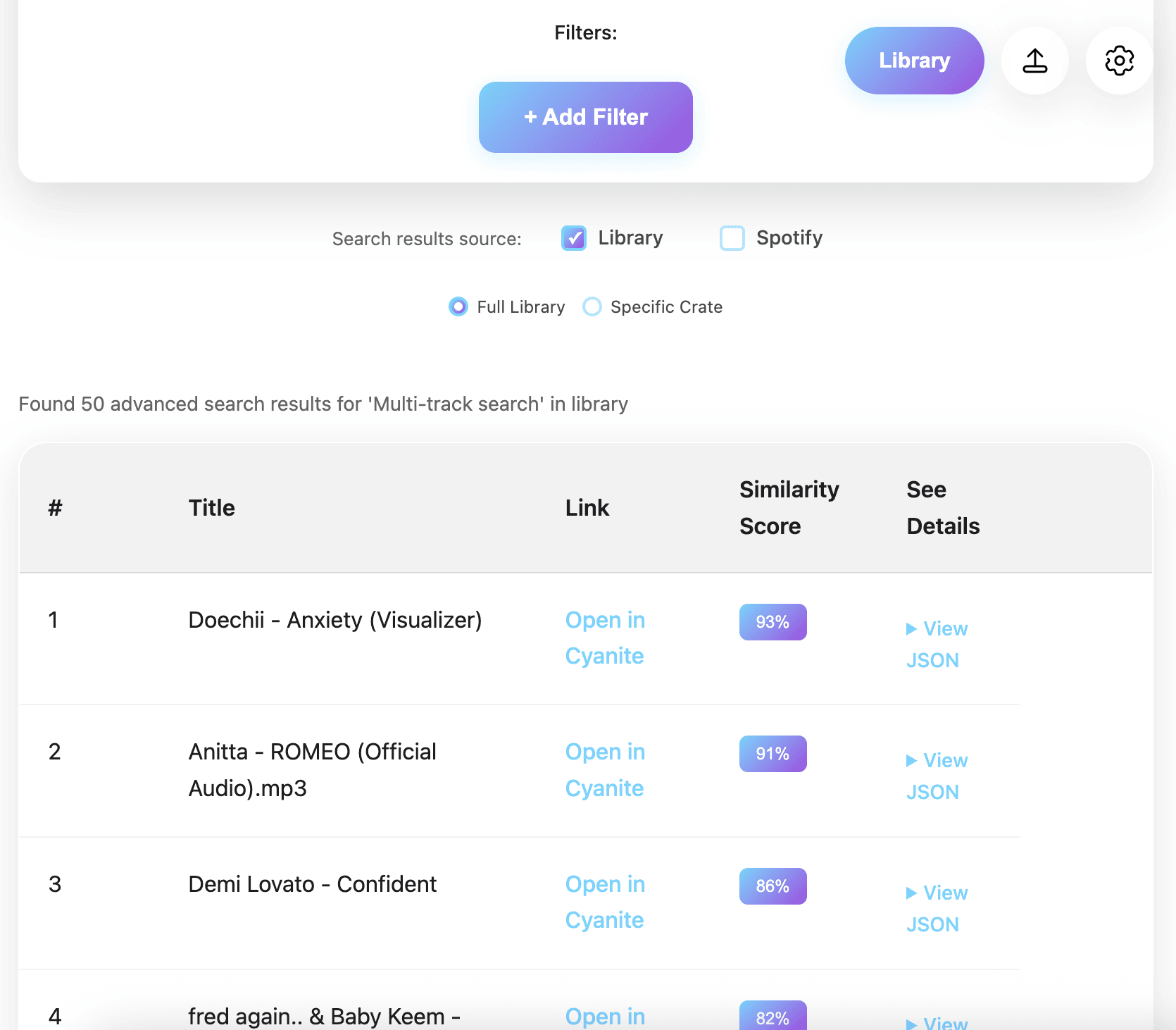

Security option 2: zero-audio pipeline—tagging without transferring audio

Some teams manage catalogs that cannot be transferred externally at all. These include confidential film productions, enterprise music departments, and archives operating under strict internal compliance rules. For these situations, Cyanite provides a spectrogram-based workflow that enables full tagging without the audio files ever being sent.

Spectrograms from left to right: Christina Aguilera, Fleetwood Mac, Pantera

Instead of uploading MP3s, audio is converted locally on the client side into spectrograms using a small Docker container provided by Cyanite. A spectrogram is a visual representation of frequency patterns over time. It contains no playable audio, cannot be converted back into a waveform without significant quality loss, and does not expose the original performance in any usable form.
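To make the idea of a spectrogram concrete, here is a minimal sketch of how a magnitude spectrogram can be computed from raw samples using only the Python standard library. It is not Cyanite's converter: the source does not describe the parameters used inside the Docker container, so the function names, the frame size, the hop length, and the synthetic test tone are all assumptions chosen for illustration.

```python
import cmath
import math


def hann(n: int) -> list[float]:
    """Hann window of length n, used to taper each frame."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def magnitude_spectrogram(samples, frame_size=64, hop=32):
    """Return one list of magnitudes per frame (frame_size // 2 + 1 bins each).

    Illustrative only: frame_size and hop are assumed values, and a naive DFT
    is used so that the example needs no third-party packages.
    """
    window = hann(frame_size)
    bins = frame_size // 2 + 1
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        chunk = [samples[start + i] * window[i] for i in range(frame_size)]
        frame = []
        for k in range(bins):
            acc = sum(
                chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n in range(frame_size)
            )
            frame.append(abs(acc))  # keep the magnitude, discard the phase
        frames.append(frame)
    return frames


# A synthetic 1 kHz tone sampled at 8 kHz stands in for a real track.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(512)]

spec = magnitude_spectrogram(tone)
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / 64  # bin index -> frequency in Hz
```

Each row of `spec` describes how much energy sits in each frequency band during one short time slice. Only magnitudes are kept in this sketch, which is one reason a representation like this is not a playable audio file.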

From a metadata perspective, the results are identical to audio-based processing. From a privacy perspective, the original audio never leaves the customer’s environment. This makes the zero-audio pipeline a strong middle ground for teams that want AI-powered tagging while maintaining strict control over their content.

From a product perspective, every Cyanite feature, including search, remains available in this workflow.

“For us at Synchtank, the spectrogram-based upload was key. Many of our clients are cautious about where their audio goes, and this approach lets us use high-quality AI tagging and search without transferring any copyrighted audio. That balance, confidence for our customers without compromising on quality, is what made the difference for us.” Amy Hegarty, CEO at Synchtank

Learn more: What are spectrograms, and how can they be applied to music?

Security option 3: pseudo-on-premise deployment via the Cyanite Audio Analyzer on the AWS Marketplace

For organizations with the highest security and compliance requirements, Cyanite also offers a pseudo-on-premise deployment option through the AWS Marketplace. In this setup, the Cyanite Audio Analyzer runs Cyanite’s tagging engine entirely inside the customer’s own AWS cloud infrastructure.

This approach provides:

- Complete pseudo-on-premise processing

- Zero data transfer outside your AWS cloud environment

- Full control over storage, access, and compliance

- Tagging accuracy identical to cloud-based workflows

This option is typically chosen by film studios, broadcasters, public institutions, and organizations working with unreleased or highly sensitive material that must pass strict internal or external audits.

Because the pseudo-on-premise container operates in complete isolation (no internet connection), search-based features—including Similarity Search, Free Text Search, and Advanced Search—are not available in this setup. In pseudo-on-premise environments, Cyanite therefore focuses exclusively on audio tagging and metadata generation.

Important note: The rates on the AWS Marketplace are intentionally high to deter fraudulent activity. Please contact us for our enterprise rates and find the best plan for your needs.

Choosing the right privacy model for your catalog

Selecting the right setup depends less on catalog size and more on how tightly you need to control where your audio lives. A useful way to frame the decision is to consider how much data movement your internal policies allow.

In practice, teams tend to choose based on the following considerations:

- GDPR cloud processing works well when secure external processing is acceptable.

- Zero-audio pipelines suit teams that cannot transfer audio but can share abstract representations.

- Pseudo-on-premise deployment is best for environments requiring complete isolation.

All three options deliver the same tagging depth, consistency, and accuracy. The difference lies entirely in how data moves, or doesn’t move, between systems.
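The considerations above can be written down as a small decision helper. This is only a sketch of the reasoning in this article, not part of any Cyanite SDK: the enum, the function names, and the two boolean policy questions are hypothetical and exist purely for illustration.

```python
from enum import Enum


class PrivacyModel(Enum):
    GDPR_CLOUD = "GDPR-compliant cloud processing"
    ZERO_AUDIO = "zero-audio pipeline"
    PSEUDO_ON_PREMISE = "pseudo-on-premise deployment"


def choose_privacy_model(audio_may_leave: bool, spectrograms_may_leave: bool) -> PrivacyModel:
    """Map two internal policy answers to one of the three setups."""
    if audio_may_leave:
        # Secure external processing is acceptable.
        return PrivacyModel.GDPR_CLOUD
    if spectrograms_may_leave:
        # Audio stays local, but abstract representations may be shared.
        return PrivacyModel.ZERO_AUDIO
    # Nothing may leave the customer's own environment.
    return PrivacyModel.PSEUDO_ON_PREMISE


def search_available(model: PrivacyModel) -> bool:
    """Search features are unavailable only in the isolated setup."""
    return model is not PrivacyModel.PSEUDO_ON_PREMISE


choice = choose_privacy_model(audio_may_leave=False, spectrograms_may_leave=True)
print(choice.value, "| search available:", search_available(choice))
```

Real decisions will usually involve more than two questions, such as audit requirements or existing AWS usage, but the order of the checks mirrors how much data movement each setup requires.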

Final thoughts

Using AI with music requires trust—trust that audio is handled responsibly, that ownership is respected, and that workflows adapt to real-world constraints rather than forcing compromises. Cyanite’s privacy-first architecture is designed to uphold that trust, whether you prefer cloud-based processing, a zero-audio pipeline, or a fully isolated pseudo-on-premise deployment.

If you’d like to explore which setup best fits your catalog, workflow, and compliance needs, you can review the available integration options.

FAQs

Q: Where is my audio processed when using Cyanite’s cloud setup?

A: In the standard setup, audio is processed on secure servers located in the EU and handled in full compliance with GDPR. Audio is not shared with third parties and remains your property at all times.

Q: Can I use Cyanite without sending audio files at all?

A: Yes. With the zero-audio pipeline, you convert audio locally into spectrograms and send only those abstract frequency representations to Cyanite. The original audio never leaves your environment, while full tagging results are still generated.

Q: What is the difference between the zero-audio pipeline and pseudo-on-premise deployment?

A: The zero-audio pipeline sends spectrograms to Cyanite’s cloud for analysis. The pseudo-on-premise deployment runs the Cyanite Audio Analyzer entirely inside your own AWS cloud infrastructure, which is cut off from the internet and connected only to your system. Pseudo-on-premise deployment offers maximum isolation but supports only tagging, without search features.

Q: Are Similarity Search and Free Text Search available in all privacy setups?

A: Similarity Search, Free Text Search, and Advanced Search are available in cloud-based and zero-audio pipeline workflows. In pseudo-on-premise deployments, Cyanite focuses exclusively on tagging and metadata generation because the environment is isolated from the internet.

Q: Which privacy option is right for my catalog?

A: That depends on your internal security, legal, and compliance requirements. Teams with standard protection needs often use GDPR cloud processing. Those with higher sensitivity choose the zero-audio pipeline. Organizations requiring full isolation opt for pseudo-on-premise deployment. Cyanite supports all three.