Context is what separates music from AI slop

Add structured context to your discovery workflows with Cyanite’s Advanced Search.

Every week, thousands of new tracks enter music libraries. There’s no real limit to how many can be uploaded. As catalogs expand, it becomes harder to tell why one piece deserves attention over another.

At the same time, generative AI tools make it possible to produce a lot of music quickly and cheaply. This means catalogs can get flooded with “AI slop,” a term used to describe mass-produced generative content created for volume rather than quality.

Context is key to distinguishing music from AI slop. Knowing who created a track, what shaped it, and why it exists roots it in human experience and creative intent. Without that layer of insight, music becomes interchangeable audio, reduced to tags and search terms.

What makes music human?

The intention behind music and the social connection it creates are what make it human.

That humanity is visible in the decisions that shape a track. No matter how minimal or elaborate a composition is, every musical choice reflects human knowledge and experience. The key, rhythm, production, and instruments used are all guided by cultural exposure, emotional memory, and learned musical language.

And then there’s the risk. When someone releases music, they also release control over how it will be heard and judged. That exposure makes them vulnerable, and recognizing the risk and context behind a piece makes the connection to it stronger.

The AI limitation

When intention and personal stakes are missing, the difference is noticeable.

AI-generated music can sound like human-made tracks. It can replicate style, structure, and production detail with striking accuracy. In many contexts, it even meets professional standards.

However, it doesn’t come from lived experience. It reconstructs patterns learned from existing music. There’s no vulnerability behind the track. There’s no social stake. And there’s no personal history shaping the decision to produce it. The output is coherent because the sequence fits statistically, not because something needed to be expressed.

Why context matters more than ever

With catalogs this large, many tracks can sound interchangeable. You can work on a brief and find 10 pieces that technically meet the requirements.

What actually helps you choose is knowing where the music comes from and who made it. That extra layer of information changes how you hear it. In a space this crowded, context is what keeps everything from blending into the same background noise.

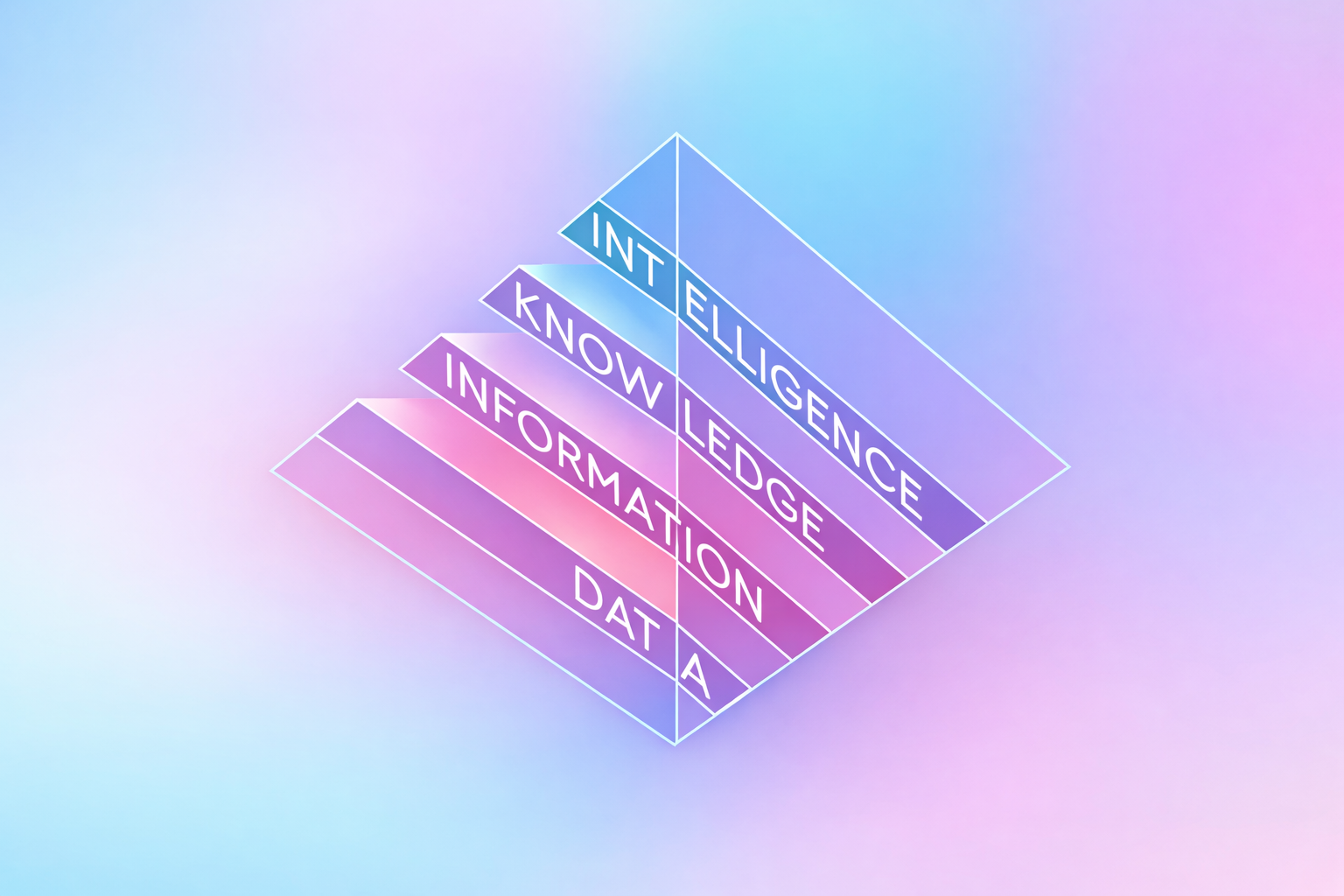

The potential of contextual metadata

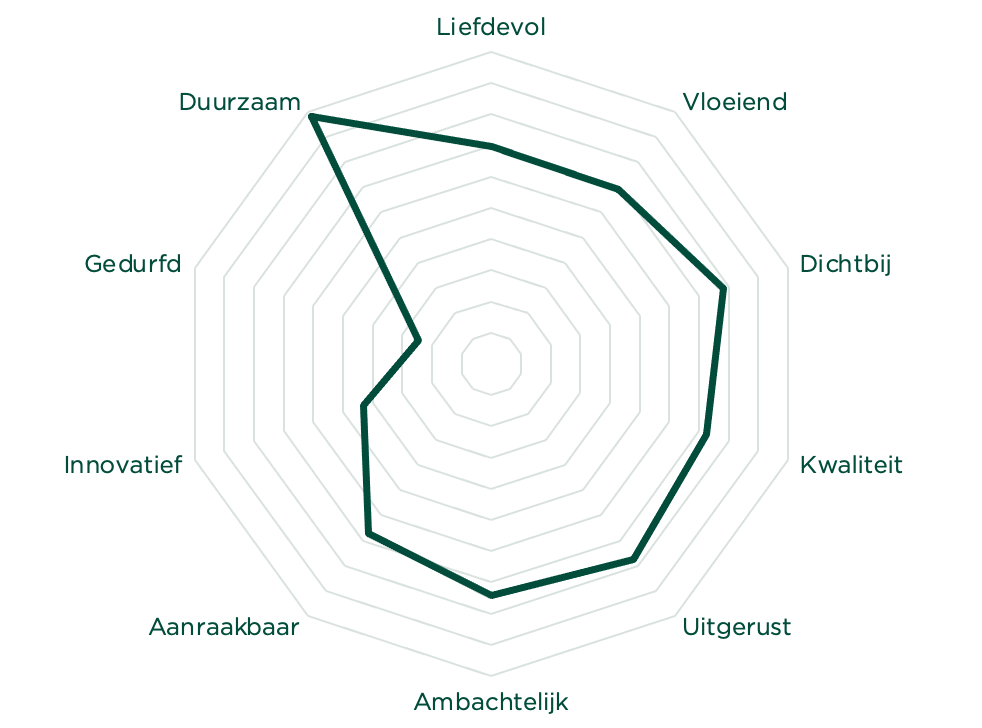

If context gives music meaning, it needs to be structured as metadata so it can be searched and filtered at scale.

Custom tagging makes that possible. Catalogs can include fields for artist origin, geography, creative background, cultural context, and editorial positioning. When that information can be filtered, it starts shaping decisions. Context moves from description to action.

The same principle applies to one of the clearest distinctions in modern catalogs: whether a track is human-created or AI-generated. When that difference is structured as metadata, it becomes searchable inside existing discovery systems.
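As a minimal sketch of what structuring context as metadata can look like, the Python below models a track record with a few contextual fields and filters on them. The field names (`artist_origin`, `creation_type`, `editorial_tags`) and the sample tracks are hypothetical illustrations, not Cyanite’s schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Track:
    # All field names are hypothetical; a real catalog defines its own schema.
    title: str
    artist_origin: str   # e.g. a country code
    creation_type: str   # "human" or "ai_generated"
    editorial_tags: frozenset = field(default_factory=frozenset)


def filter_tracks(tracks, **criteria):
    """Return tracks whose attributes equal every given criterion."""
    return [
        t for t in tracks
        if all(getattr(t, name) == value for name, value in criteria.items())
    ]


# Made-up sample data for illustration only.
catalog = [
    Track("Coastal Drive", "AU", "human", frozenset({"indie"})),
    Track("Night Loop", "US", "ai_generated"),
    Track("Red Dust", "AU", "ai_generated"),
]

human_au = filter_tracks(catalog, artist_origin="AU", creation_type="human")
print([t.title for t in human_au])
```

Once authorship is a field like any other, the human-or-AI distinction becomes one more filter a search can apply.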

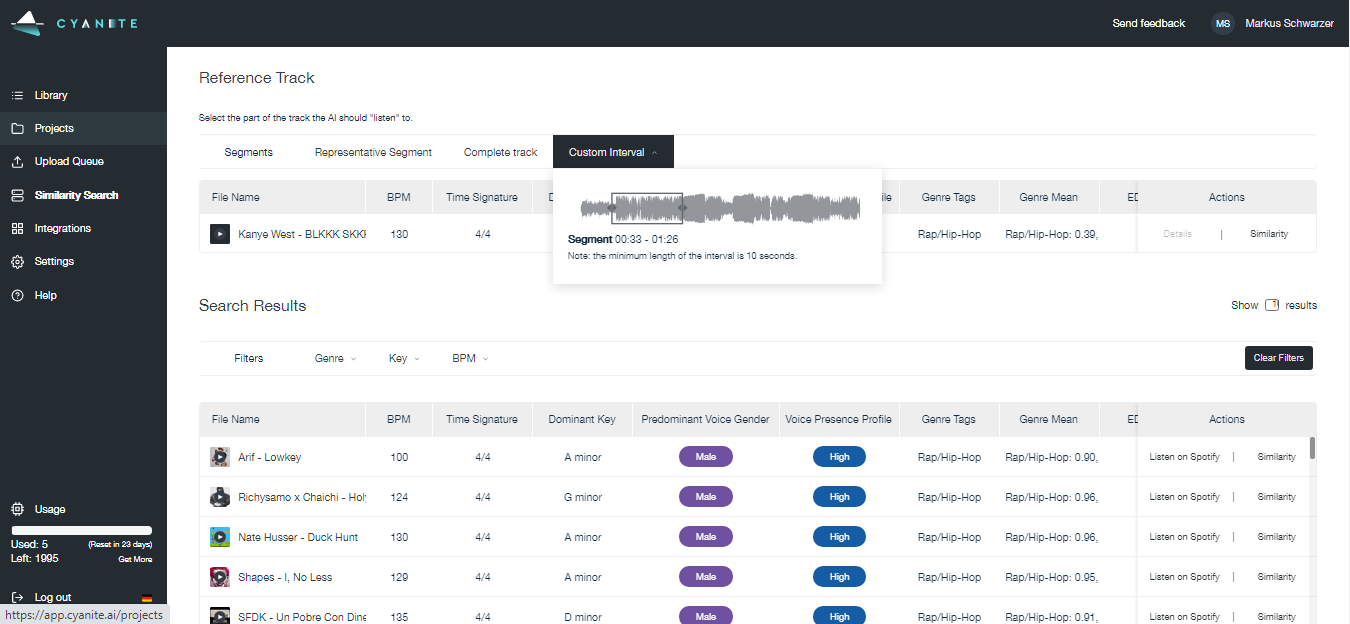

Melodie Music puts this into practice to spotlight original Australian artists. They combine Cyanite’s sound-based AI search with their own editorial and contextual metadata.

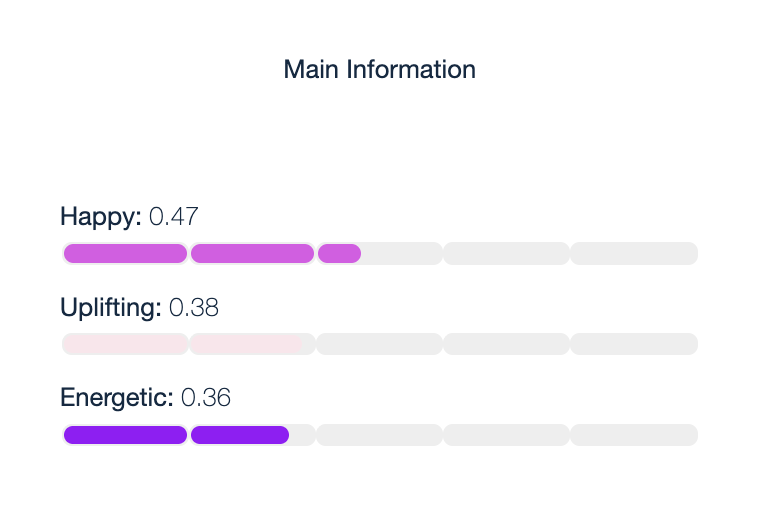

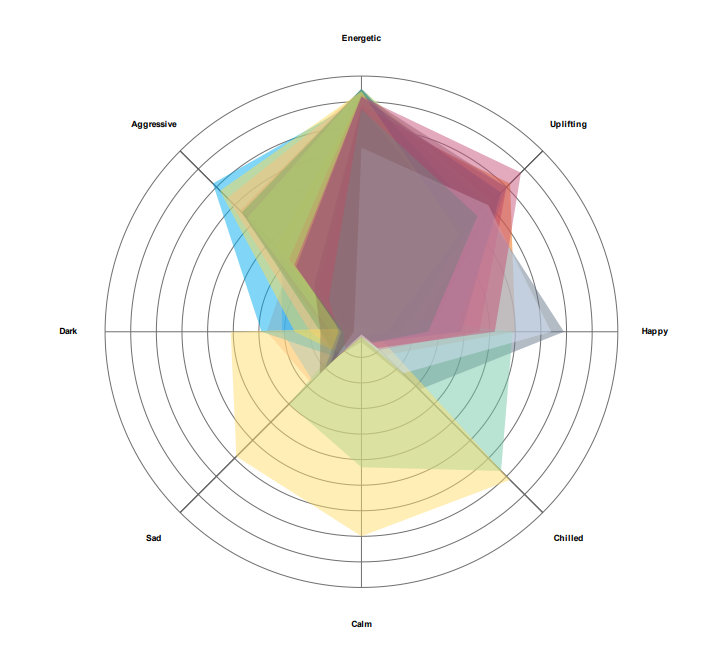

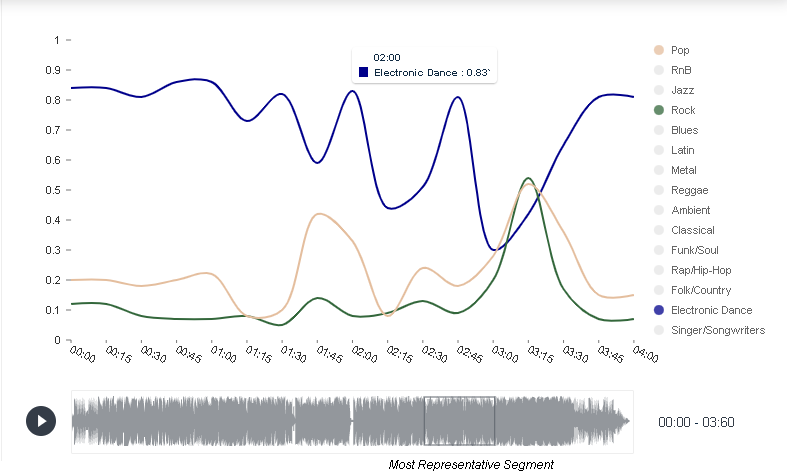

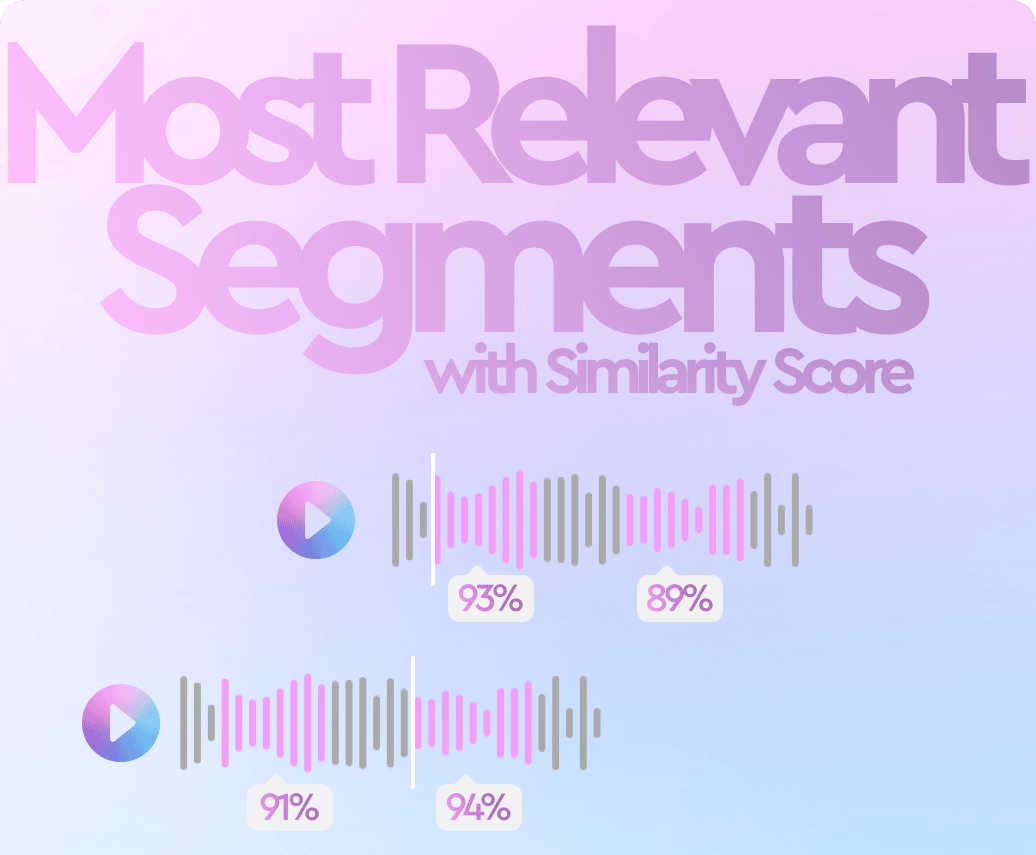

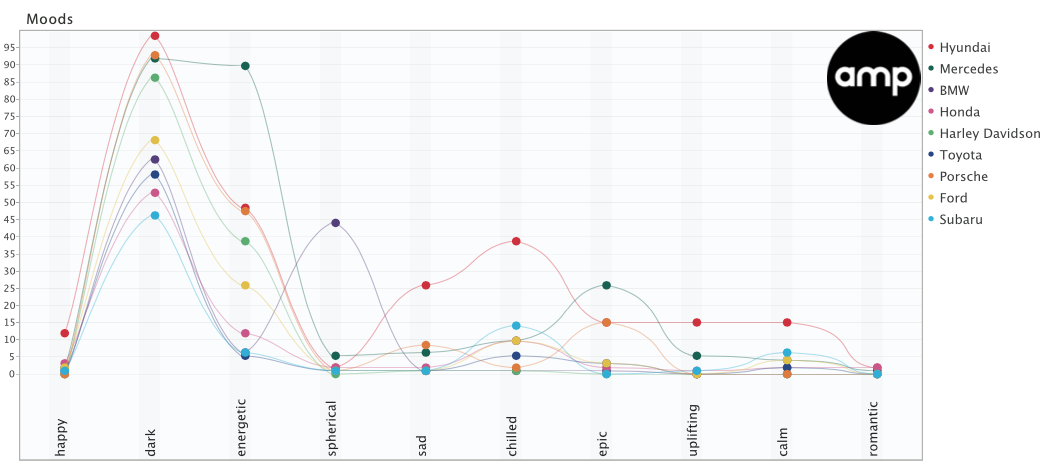

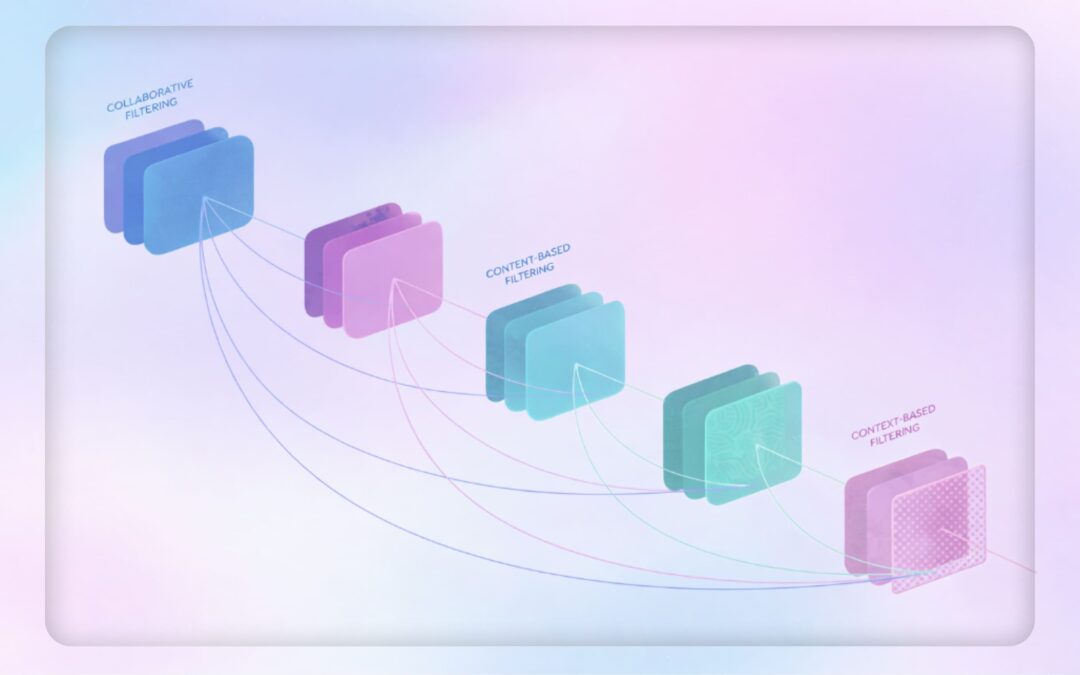

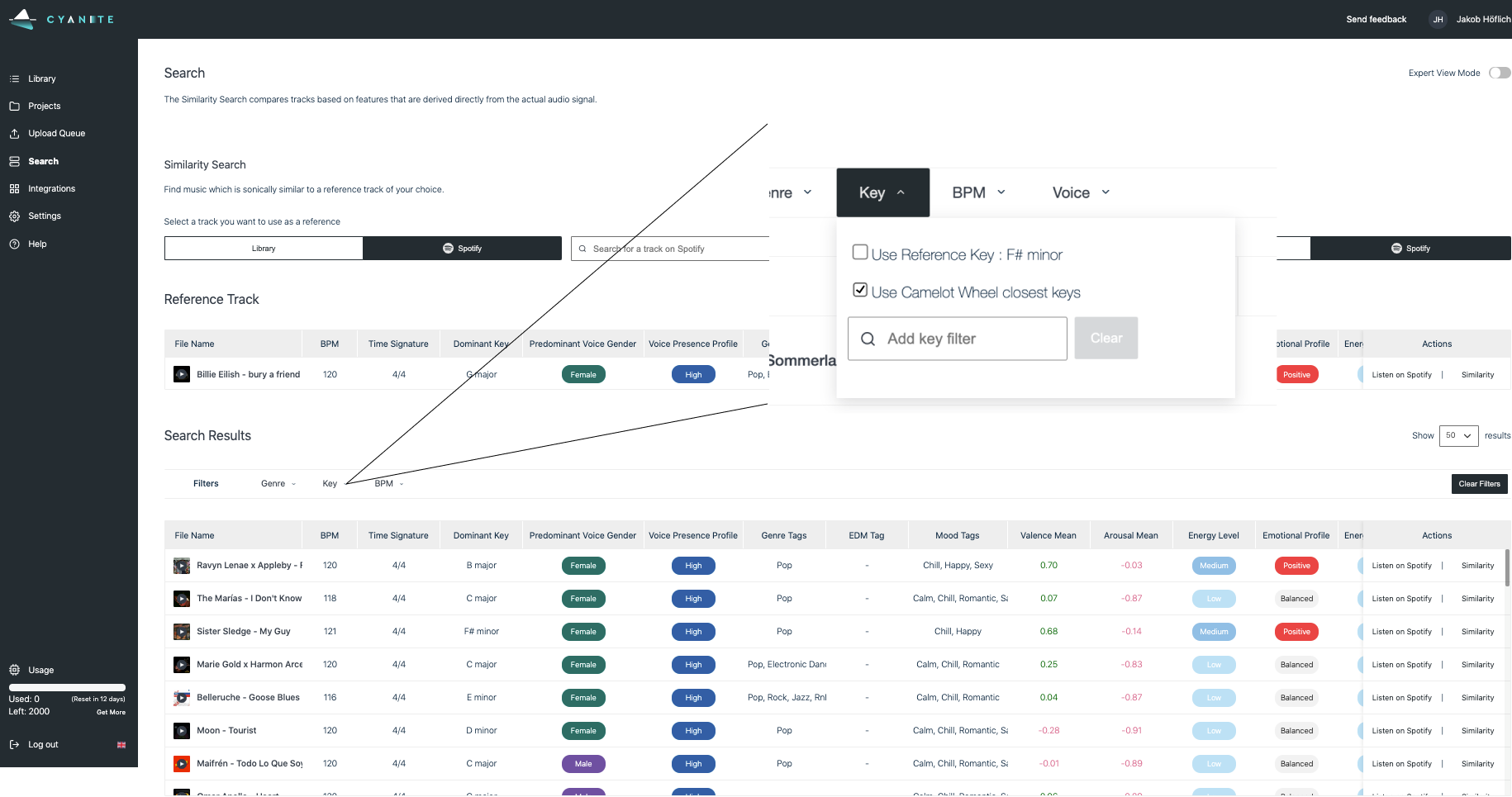

- Cyanite analyzes the sound of a reference track and generates a shortlist based on emotional profile and sonic character.

- Melodie layers contextual filters, such as artist origin, on top of those results.

- Users refine further using editorial tags aligned with cultural or strategic priorities.

- The final selection satisfies both the creative brief and the mandate to support specific artist communities.
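The four steps above can be sketched as a small pipeline. This is an illustration only: the similarity scores are hard-coded stand-ins for what a sound-based search would return, and the function and field names are hypothetical rather than Melodie’s or Cyanite’s actual implementation.

```python
# Hypothetical shortlist: (track, similarity score to the reference track).
shortlist = [
    ({"title": "Coastal Drive", "origin": "AU", "tags": {"indie", "emerging"}}, 0.93),
    ({"title": "Night Loop", "origin": "US", "tags": {"electronic"}}, 0.91),
    ({"title": "Red Dust", "origin": "AU", "tags": {"folk"}}, 0.88),
    ({"title": "Harbour Lights", "origin": "AU", "tags": {"indie"}}, 0.80),
]


def by_origin(results, origin):
    """Step 2: keep only results whose artist origin matches."""
    return [(t, s) for t, s in results if t["origin"] == origin]


def by_tags(results, required_tags):
    """Step 3: keep only results carrying every required editorial tag."""
    return [(t, s) for t, s in results if required_tags <= t["tags"]]


# Step 1 is the shortlist itself; steps 2 and 3 narrow it; step 4 is the result.
final = by_tags(by_origin(shortlist, "AU"), {"indie"})
for track, score in sorted(final, key=lambda pair: pair[1], reverse=True):
    print(f"{track['title']}: {score:.2f}")
```

Because each filter takes and returns the same list shape, the stages can be reordered or extended without changing the others.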

What this means for music discovery

Algorithmic recommendations alone aren’t enough. Teams want clarity about origin, authorship, and AI involvement before committing to a track.

In our joint study with MediaTracks and Marmoset, we found that contextual metadata plays a central role in how professionals work through briefs. Respondents described relying on origin details and creator background to avoid misalignment and explain their choices to clients.

Clearly labeling AI involvement was part of that same expectation. Professionals are open to working with AI-generated music, but they want to know explicitly whether a track is AI-generated or human-made. Context, including transparency around authorship, informs decisions.

Read more: Why AI labels and metadata now matter in licensing

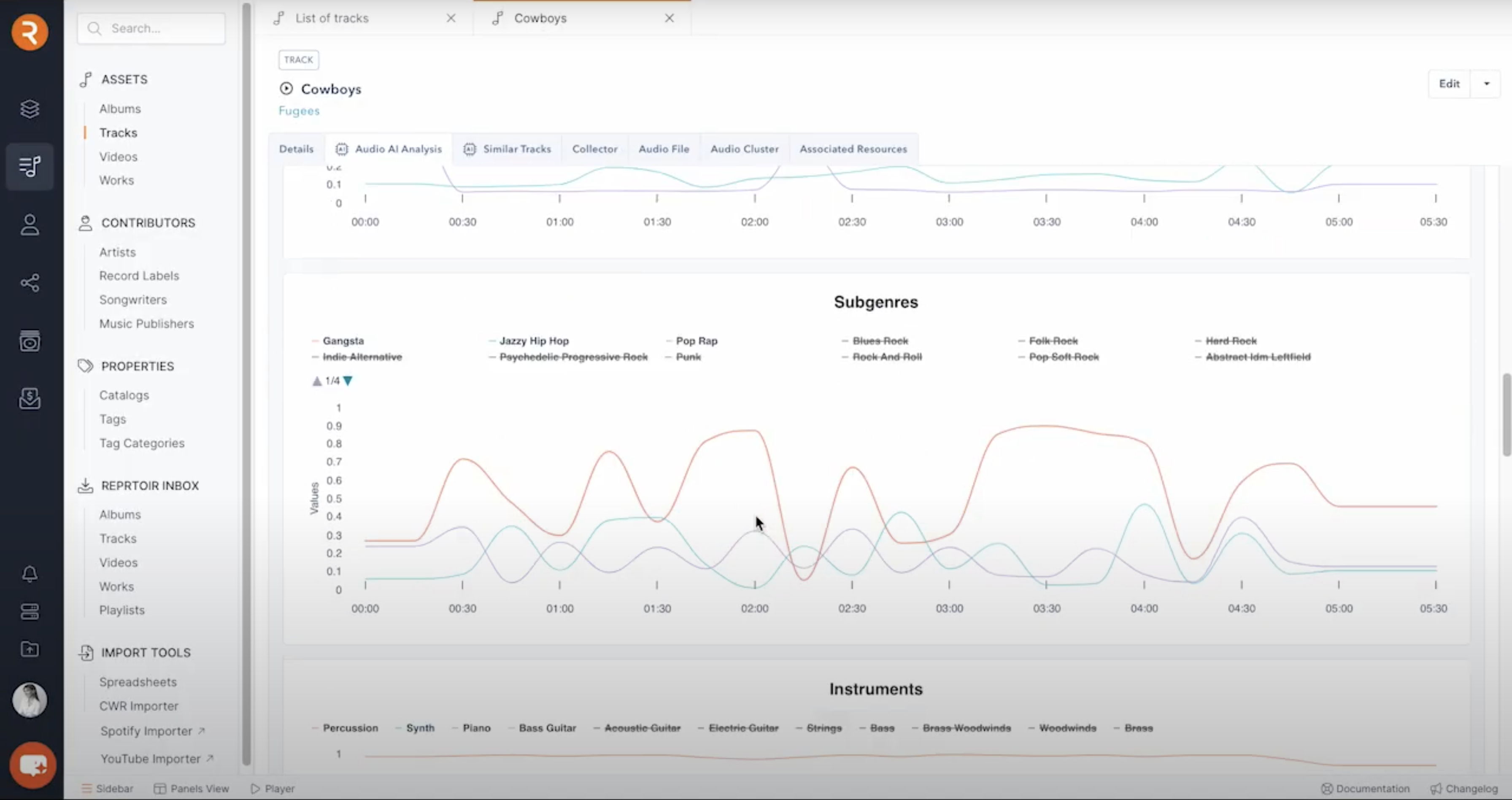

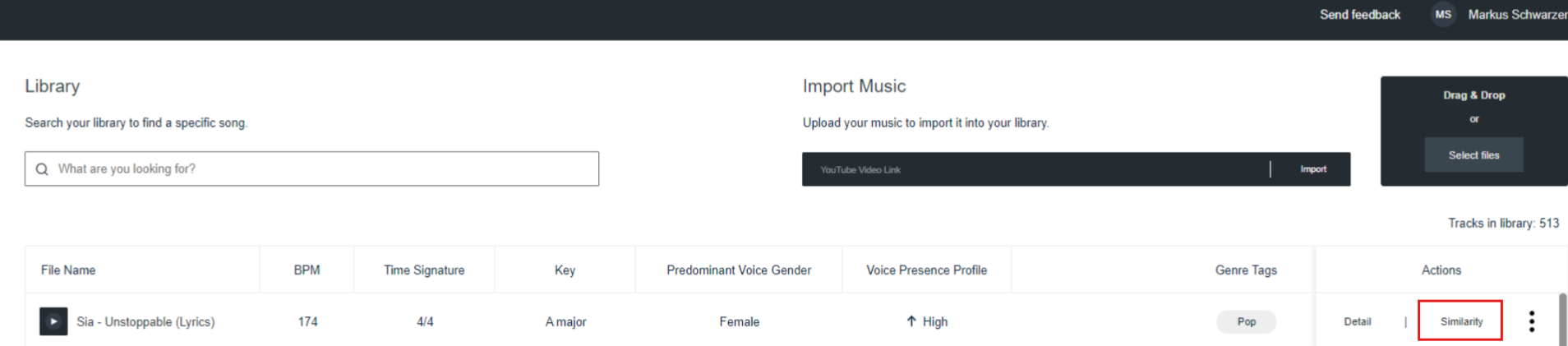

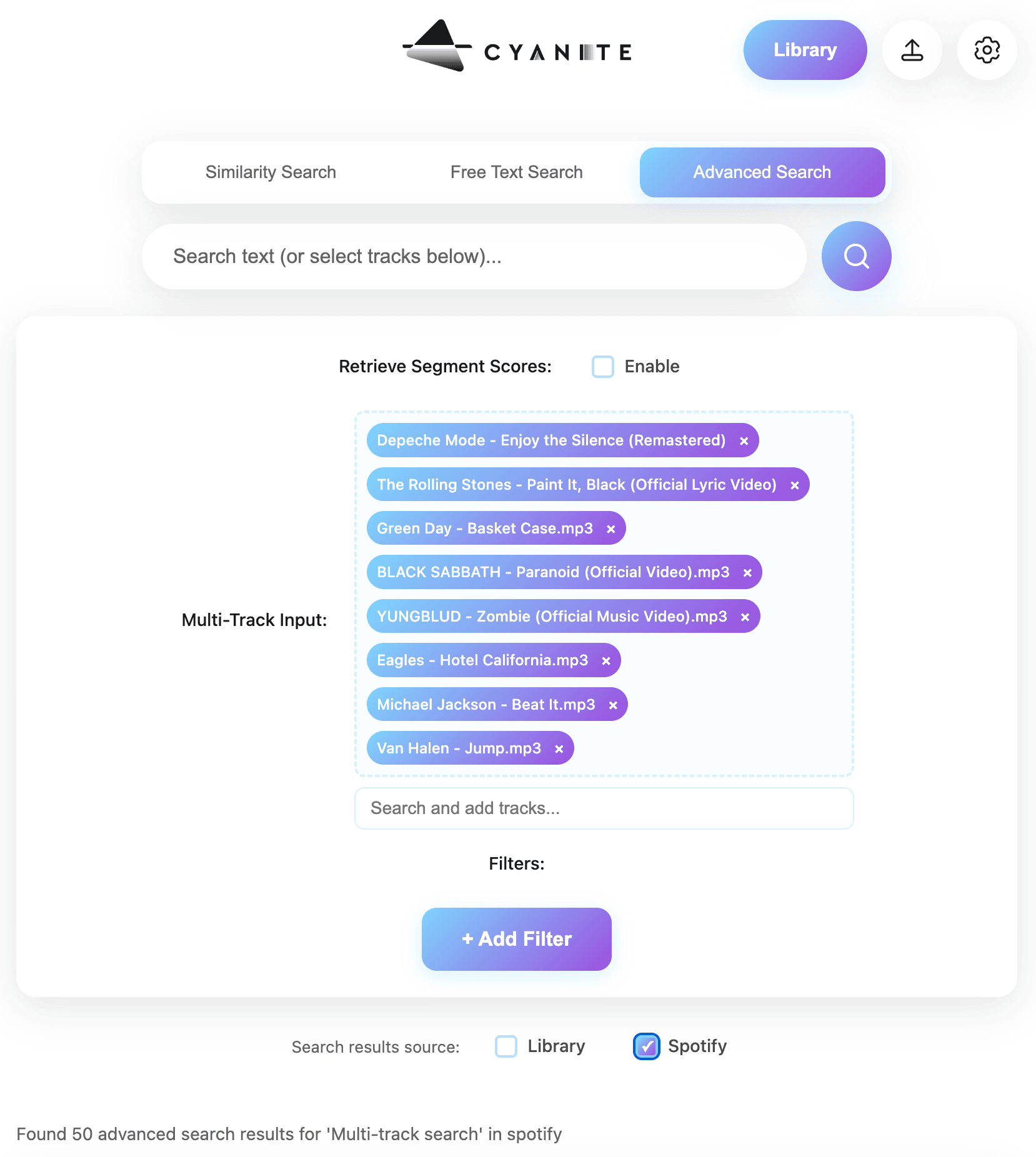

Cyanite’s Advanced Search, available via API integration, allows teams to upload their own custom metadata fields and use them for filtering. Fields can include artist origin, cultural background, clearance information, and editorial categories.

Search queries then run within that defined subset, so sound analysis operates inside contextual boundaries set by the catalog owner. This is the approach Melodie Music has implemented.
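To illustrate what running a query within a defined subset means, here is a minimal sketch in plain Python. It is not Cyanite’s API: the embeddings are toy three-dimensional vectors, cosine similarity is an assumed stand-in for the sound analysis, and all names are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def search(catalog, query_vec, filters, top_k=2):
    """Narrow the catalog by metadata first, then rank the subset by similarity."""
    subset = [
        t for t in catalog
        if all(t["meta"].get(k) == v for k, v in filters.items())
    ]
    ranked = sorted(subset, key=lambda t: cosine(t["vec"], query_vec), reverse=True)
    return ranked[:top_k]


# Toy catalog: vectors and metadata are made up for illustration.
catalog = [
    {"id": "a", "vec": [1.0, 0.0, 0.0], "meta": {"origin": "AU", "cleared": True}},
    {"id": "b", "vec": [0.9, 0.1, 0.0], "meta": {"origin": "US", "cleared": True}},
    {"id": "c", "vec": [0.0, 1.0, 0.0], "meta": {"origin": "AU", "cleared": True}},
    {"id": "d", "vec": [0.8, 0.2, 0.0], "meta": {"origin": "AU", "cleared": False}},
]

hits = search(catalog, [1.0, 0.0, 0.0], {"origin": "AU", "cleared": True})
print([t["id"] for t in hits])
```

In this toy data, track `b` is closer to the query than track `c`, but it never enters the ranking because it falls outside the filtered subset.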

For platforms embedding Cyanite’s search algorithms into their own systems, this enables structured transparency at scale. Context becomes part of the discovery logic itself.

Choosing meaning over noise

“We have always connected to music because it carries intention, experience, and emotion – not just sound. A song means something because it was created in a specific moment, for a reason, by someone responding to their world. Today, we are surrounded by more music than ever, inevitably making it harder to feel that connection. Delivering context to a song gives a glimpse into what went into it, and with it a chance to understand the people and feelings behind the music.

Even though AI-generated music can sound pleasant, it is fundamentally an imitation – a reconstruction of patterns it has seen before. It lacks intention, situation, risk, and personal stake.

That’s why context matters more than ever. Knowing why a piece of music exists, where it comes from, and what went into it is what turns sound into something meaningful.”

Context can double as infrastructure in catalogs. As AI-generated music becomes easier to produce and distribute, what will separate human-made tracks from AI slop is whether a track’s origin is visible and understood. Catalogs that structure and surface contextual metadata can ensure music is selected based on where it comes from and why it exists, not just how it sounds.

Ready to add context to your discovery workflows?

FAQs

Q: How can contextual metadata help distinguish human-created music from AI-generated tracks?

A: Contextual metadata adds information beyond sound analysis, such as artist background, origin, editorial positioning, and authorship labeling. It lets teams filter catalogs based on transparency and creative intent, helping distinguish human-created music from generative content produced at scale.

Q: Does Cyanite detect whether music is AI-generated or human-made?

A: Cyanite is developing AI music detection capabilities designed to support transparent catalog workflows. Early implementations allow teams to label and filter tracks based on AI involvement, helping licensing professionals and curators make informed decisions during discovery.

Q: Can Cyanite’s Advanced Search filter music using custom metadata fields?

A: Yes. Advanced Search allows catalog owners to include their own metadata fields as filters within search queries. These filters narrow the searchable catalog before sound similarity or text-based matching is applied, helping teams surface results that fit their creative and business requirements.

Q: How can music platforms integrate contextual discovery into existing workflows?

A: Music catalogs can integrate Cyanite’s Advanced Search through the API, making it possible to combine sound analysis with custom metadata filters inside their existing workflows.