An Analysis of Club Sounds with Cyanite

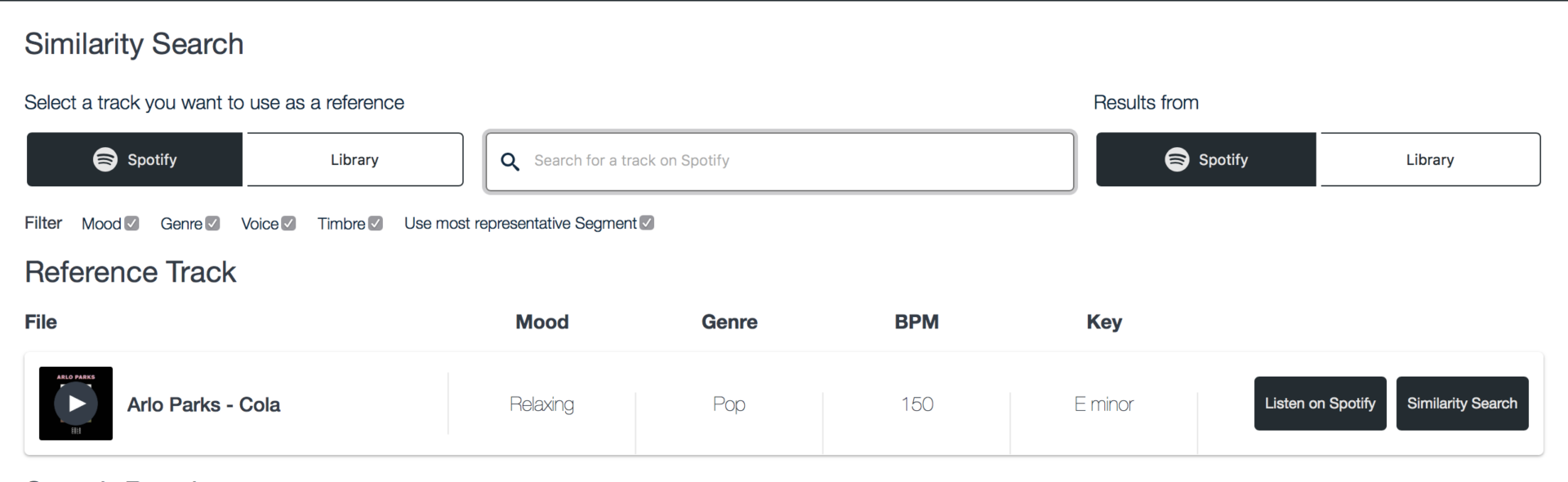

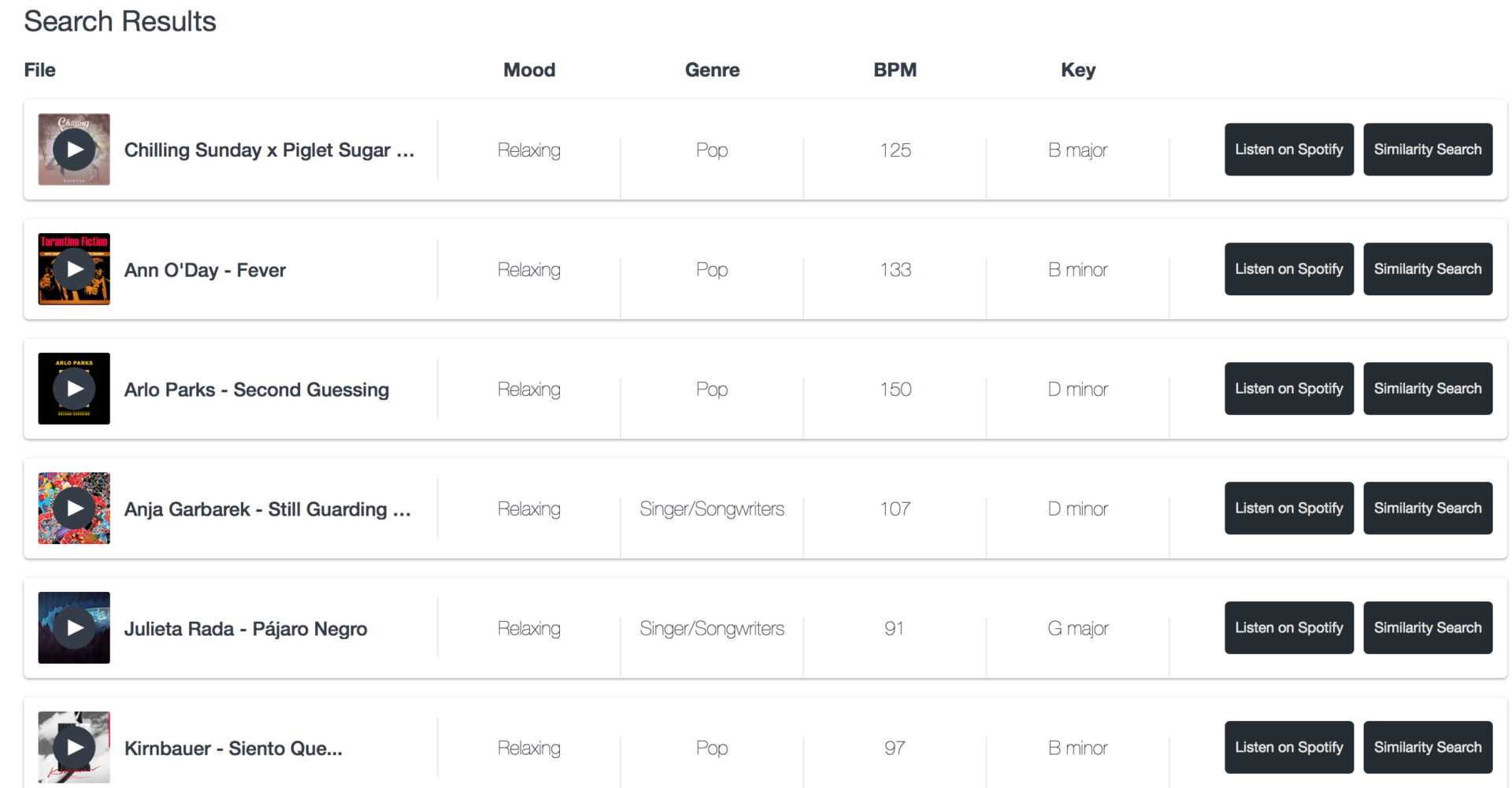

If we asked you to describe the vibe of your favourite nightclub, could you? Today, we show you how we would describe the sounds of some of our favourite clubs, with the help of the Cyanite music AI analysis software.

We analysed compilation albums from nine well-loved clubs across Germany. From Berlin, we chose Berghain, Griessmühle, About Blank, Golden Gate and Kater Blau. We also analysed music from Hamburg’s Golden Pudel, Leipzig’s Institut für Zukunft, and Frankfurt’s Omen and Robert Johnson.

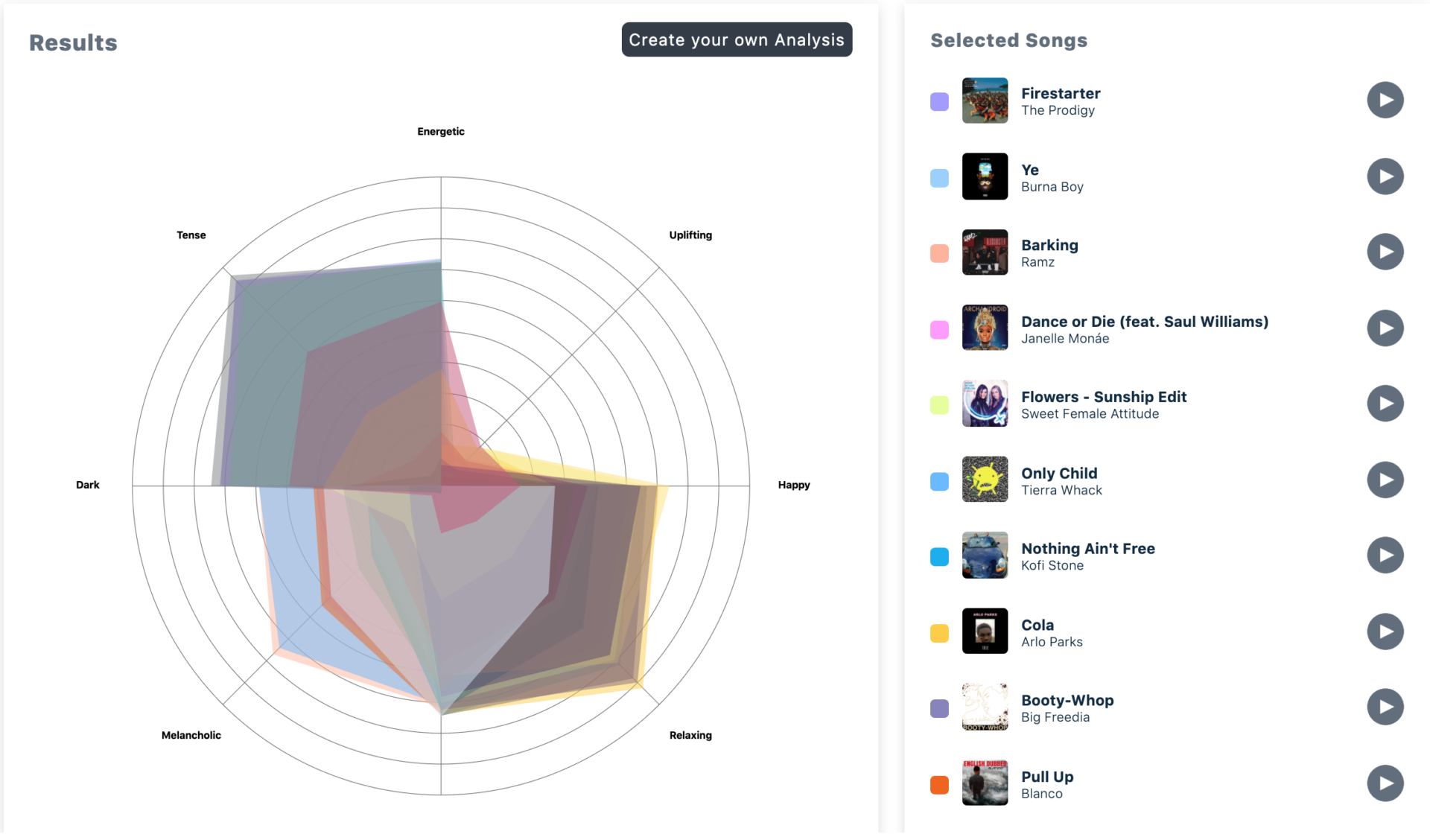

Cyanite’s multi-label mood classifier provides the following labels:

tense, uplifting, relaxing, melancholic, dark, energetic, happy

Each label has a score ranging from 0 to 1, where 0 (0%) indicates that the track is unlikely to represent a given mood and 1 (100%) indicates a high probability that it does.

Since a single tag cannot always capture the mood of a track, the classifier can predict several moods for the same song rather than only one. For example, a track could be classified as dark (score: 0.9) while also being classified as tense (score: 0.8).
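Why can one track score high on two moods at once? A common way to build a multi-label classifier, and an assumption here rather than a documented detail of Cyanite’s model, is to pass each label’s raw model output (its logit) through its own sigmoid, so every mood gets an independent score between 0 and 1. The minimal Python sketch below contrasts this with a softmax, which forces the moods to compete for a total of 1. The logit values and function names are made up for illustration.

```python
import math
from typing import Dict


def sigmoid(x: float) -> float:
    """Squash a raw model output into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def multi_label_scores(logits: Dict[str, float]) -> Dict[str, float]:
    """Independent per-mood probabilities: several moods can score high at once."""
    return {label: sigmoid(z) for label, z in logits.items()}


def single_label_scores(logits: Dict[str, float]) -> Dict[str, float]:
    """Softmax, for contrast: the moods compete and the scores always sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {label: math.exp(z - m) for label, z in logits.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


# Hypothetical logits for one track.
logits = {"dark": 2.2, "tense": 1.4, "happy": -3.0}
multi = multi_label_scores(logits)
single = single_label_scores(logits)
for label in logits:
    print(f"{label}: multi-label={multi[label]:.2f} single-label={single[label]:.2f}")
```

With these inputs the sigmoid scores come out at roughly 0.90 for dark and 0.80 for tense, while the softmax version cannot give both moods a high score at the same time.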

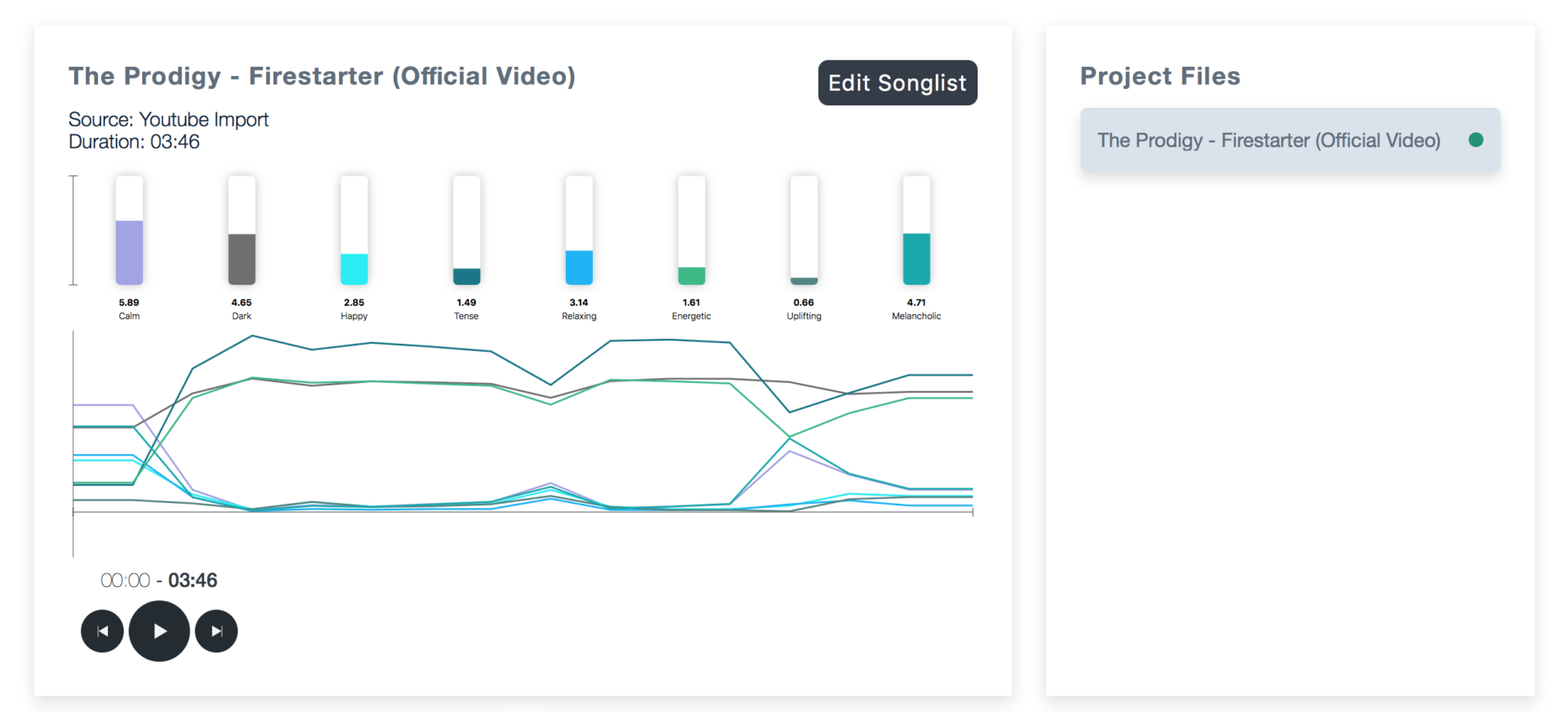

Mood scores are available both averaged over the whole track and segment by segment over time, at a temporal resolution of 15 seconds. In addition to the scores, the API also returns a list of the most likely moods, or the term “ambiguous” if the audio does not clearly reflect any of our mood tags.
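The averaging and the “ambiguous” fallback can be sketched in a few lines of Python. This assumes each 15-second segment arrives as a dictionary mapping every mood label to a score; the input format, the function names and the 0.5 cutoff for a “likely” mood are hypothetical and not taken from Cyanite’s API.

```python
from statistics import mean
from typing import Dict, List

# Temporal resolution of the segment-wise mood scores.
SEGMENT_SECONDS = 15


def average_over_track(segments: List[Dict[str, float]]) -> Dict[str, float]:
    """Average per-segment mood scores into a single score per mood.

    Assumes (hypothetically) that every segment carries the same mood labels.
    """
    if not segments:
        raise ValueError("a track needs at least one segment")
    return {label: mean(seg[label] for seg in segments) for label in segments[0]}


def mood_tags(scores: Dict[str, float], threshold: float = 0.5) -> List[str]:
    """Return the likely moods, highest score first, or ["ambiguous"] if none qualify.

    The 0.5 threshold is an illustrative assumption, not a documented value.
    """
    likely = [label for label, score in scores.items() if score >= threshold]
    likely.sort(key=lambda label: scores[label], reverse=True)
    return likely or ["ambiguous"]


def peak_time(segments: List[Dict[str, float]], label: str) -> int:
    """Start time, in seconds, of the segment where `label` scores highest."""
    best = max(range(len(segments)), key=lambda i: segments[i][label])
    return best * SEGMENT_SECONDS


# Hypothetical scores for a 45-second track (three 15 s segments).
segments = [
    {"dark": 0.75, "happy": 0.25},
    {"dark": 0.25, "happy": 0.25},
    {"dark": 1.0, "happy": 0.0},
]
track = average_over_track(segments)
print(mood_tags(track))
print(peak_time(segments, "dark"))
```

Here the track-level dark score averages to about 0.67, so dark is the only likely mood, and its peak falls in the segment starting at 30 seconds. Keeping the segment scores around, rather than only the average, is what makes that kind of timeline question answerable.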

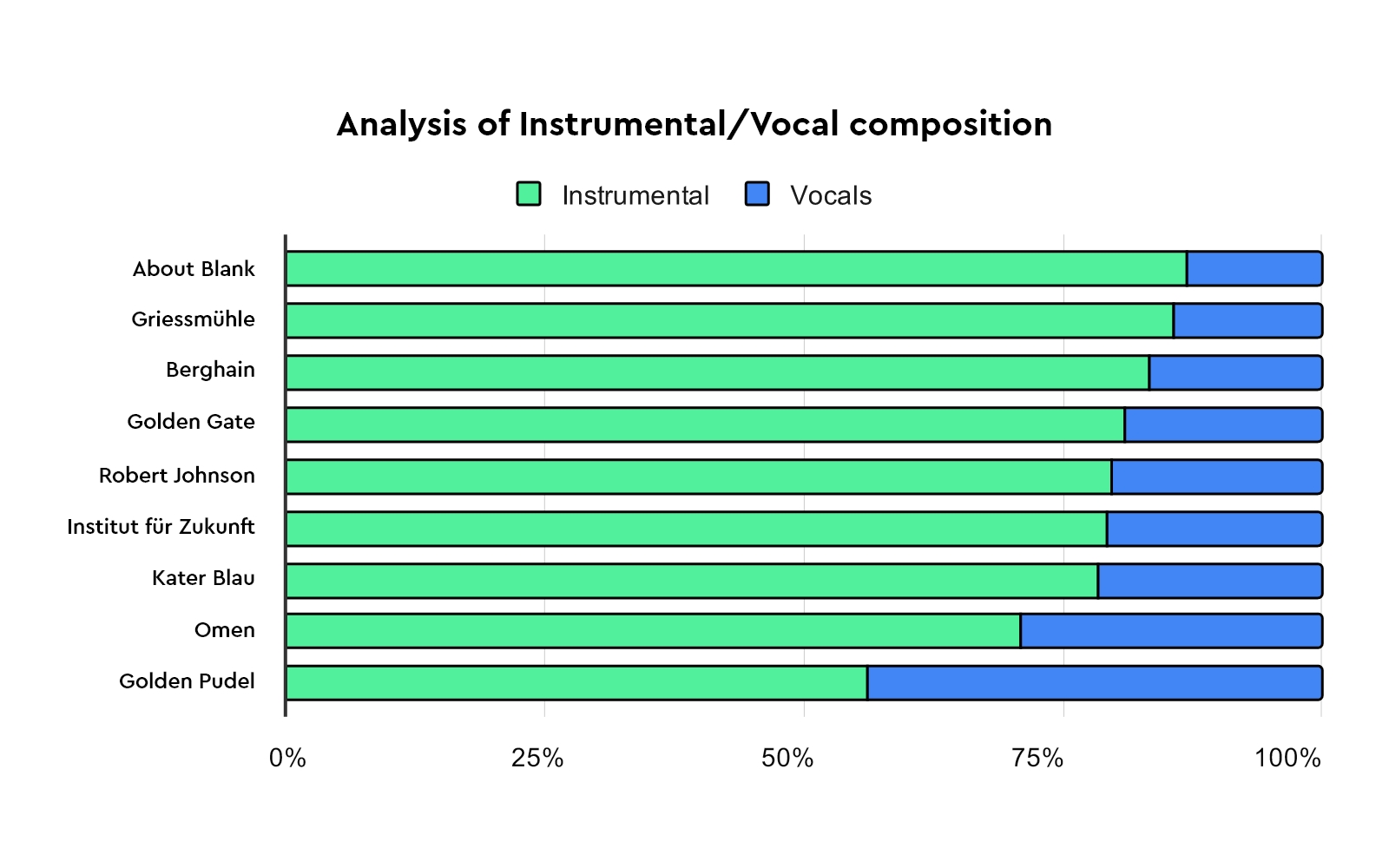

Insights from instrument and voice analysis: Berlin clubs lead the way in their use of instrumentals.

Results from Cyanite’s instrument and voice analysis show that most tracks on these club compilations are heavily dominated by instrumentals, and that the four clubs with the most instrumental-heavy tracks are all in Berlin.

About Blank has the highest share of instrumentals, followed by Griessmühle, Berghain and Golden Gate. For each of these clubs, our analysis showed that instrumentals made up more than 80% of the tracks.

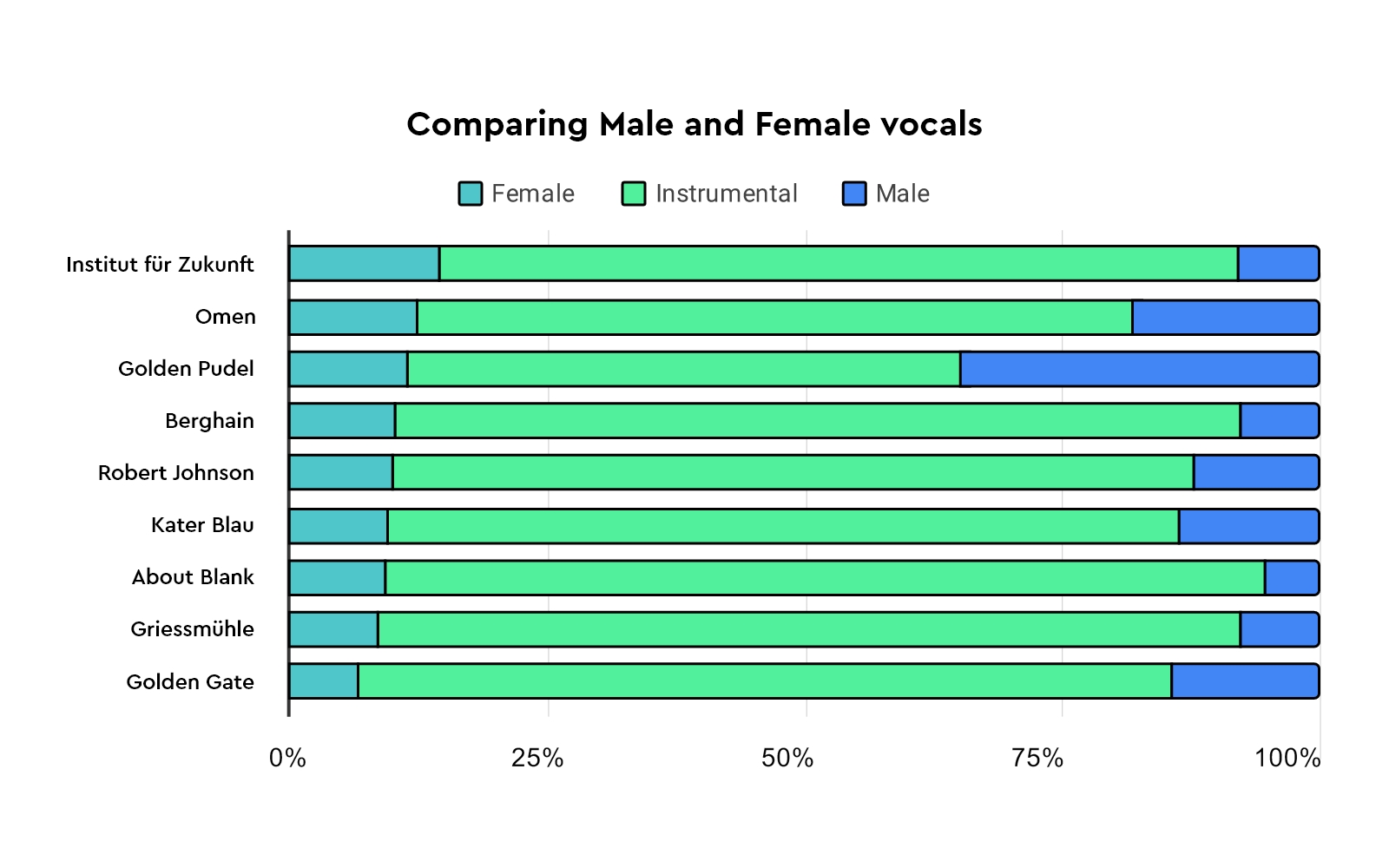

Turning to the voice analysis, the clubs whose tracks feature female vocals most often are all outside Berlin: Institut für Zukunft comes first, followed by Omen and then Golden Pudel. Interestingly, the four clubs with the least use of female vocals are all in Berlin: Kater Blau, About Blank, Griessmühle and Golden Gate.

For male vocals, Golden Pudel uses the most of all the clubs in this study, followed by Omen and Golden Gate.

Of all the results from this analysis, Golden Pudel’s intrigued us the most. Unlike the other clubs, its music shows a much more even balance between instrumentals and vocals, close to a 50/50 split.
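The percentages and rankings in this section come down to simple counting. Below is a minimal Python sketch, assuming each track has already been assigned one dominant category (instrumental, female vocals or male vocals). That one-label-per-track format, the function names and the two made-up clubs are hypothetical; they are neither Cyanite’s actual output format nor our data.

```python
from collections import Counter
from typing import Dict, List


def voice_shares(track_labels: List[str]) -> Dict[str, float]:
    """Fraction of a club's tracks in each category (fractions sum to 1)."""
    if not track_labels:
        raise ValueError("club has no tracks")
    counts = Counter(track_labels)
    total = len(track_labels)
    return {label: n / total for label, n in counts.items()}


def rank_by(club_tracks: Dict[str, List[str]], category: str) -> List[str]:
    """Club names ordered from highest to lowest share of `category`."""
    shares = {
        club: voice_shares(tracks).get(category, 0.0)
        for club, tracks in club_tracks.items()
    }
    return sorted(shares, key=shares.get, reverse=True)


# Hypothetical per-track labels for two made-up clubs (not the article's data).
clubs = {
    "Club A": ["instrumental"] * 9 + ["female"],
    "Club B": ["instrumental"] * 5 + ["male"] * 3 + ["female"] * 2,
}
print(rank_by(clubs, "instrumental"))
print(voice_shares(clubs["Club B"]))
```

In this toy example Club A is 90% instrumental, while Club B splits evenly between instrumentals and vocals, which is the kind of balance a 50/50 result describes.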

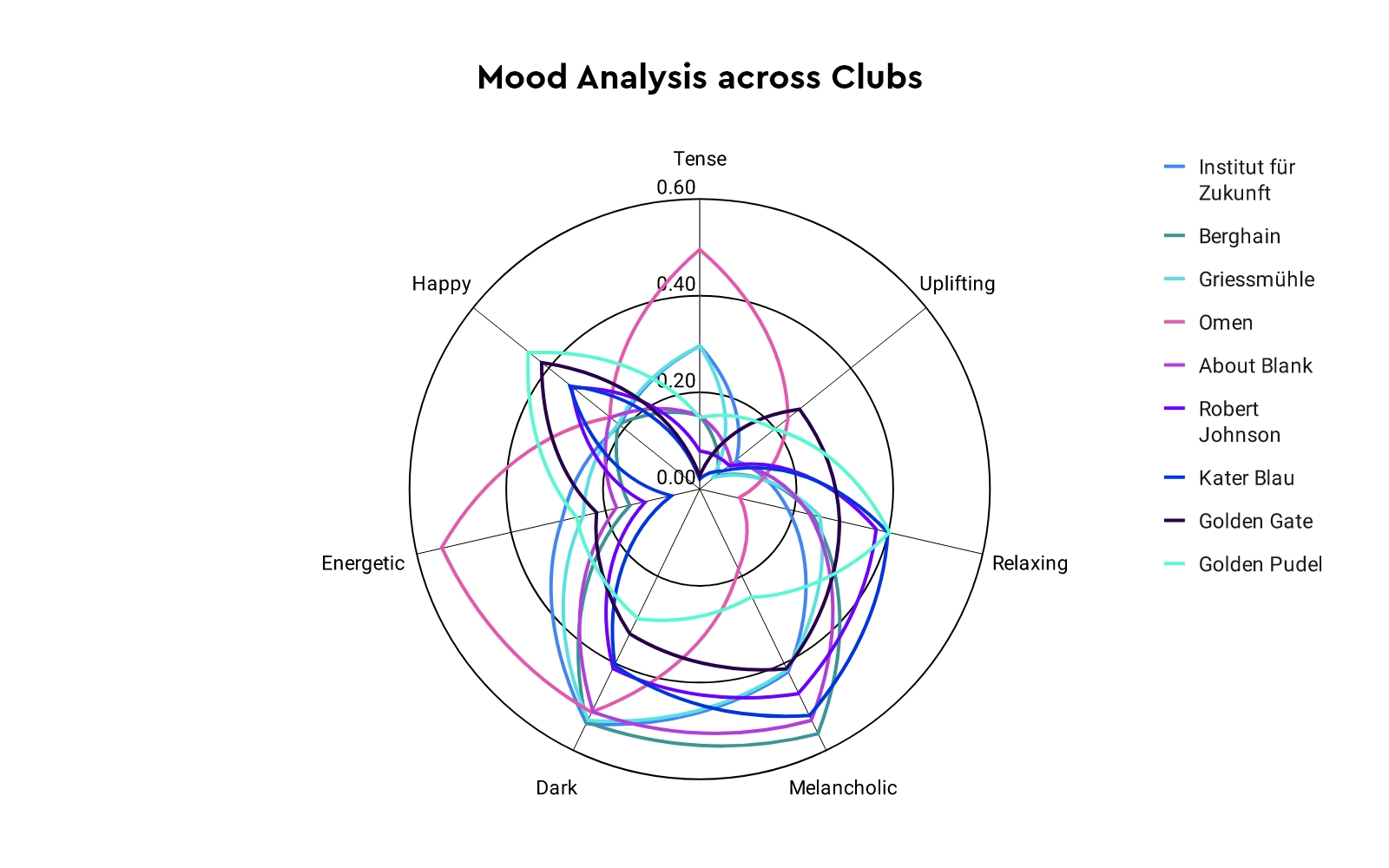

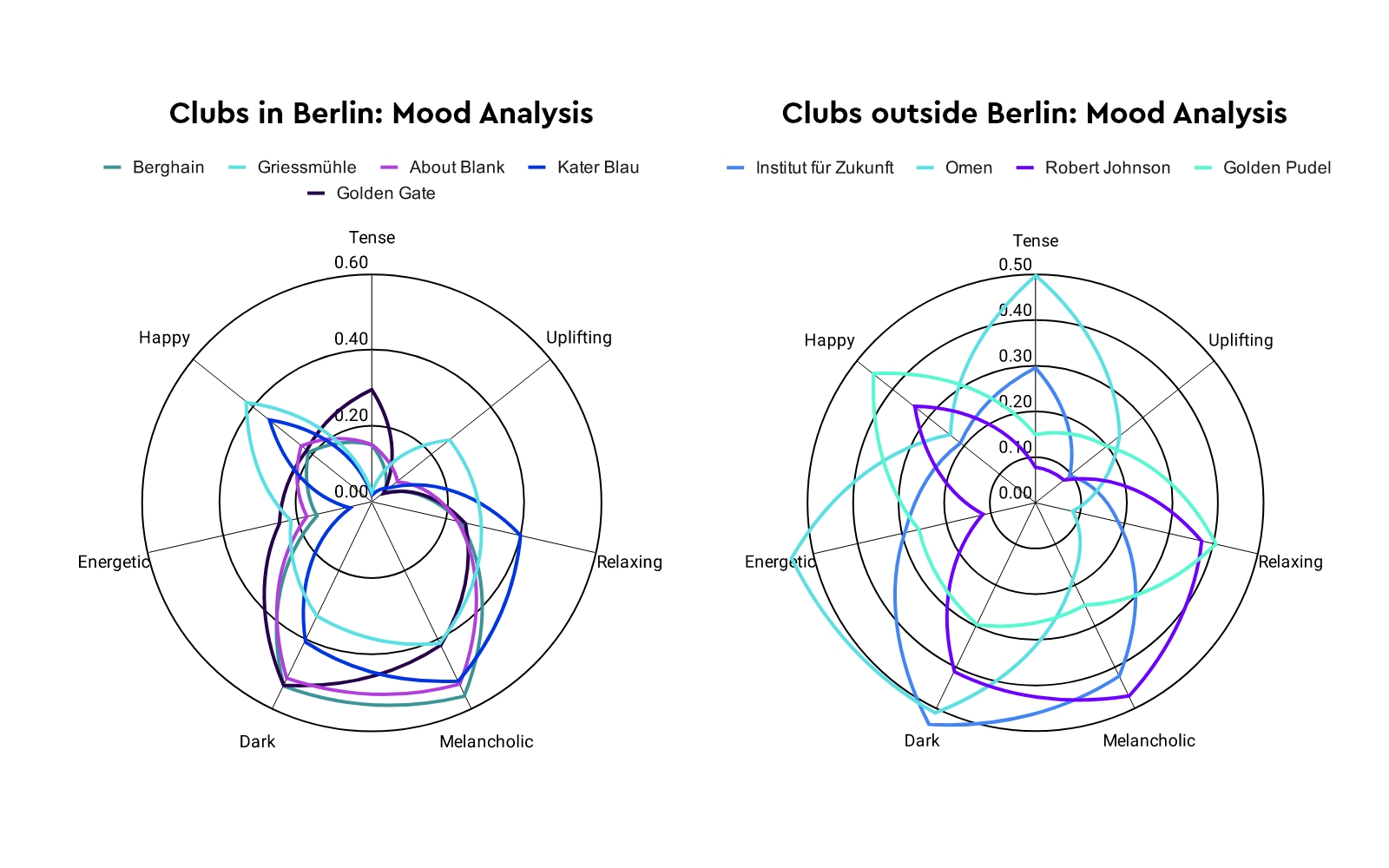

Insights from Cyanite’s mood tagging technology: Berghain is the gloomiest of them all.

Looking at the results, here’s what we found:

With its grim industrial aesthetic, it’s fitting that our analysis found Berghain to be the most melancholic club. Berghain also ties with Institut für Zukunft for the darkest sound.

Golden Gate, a favourite of ours for a good night of House music, takes the prize for being the most uplifting club, while Golden Pudel in Hamburg was found to be the happiest and most relaxing. Our mood analysis also showed that the compilation from Frankfurt’s now-defunct Omen club is at once the most tense and the most energetic of all the clubs’ compilations. A very apt result indeed: Omen was a prominent symbol of the unrestrained, pure fervour of 90s rave culture, and one whose sound we miss greatly.

Talking about the ‘Berlin Sound’…

Comparing the clubs, we see that clubs in Berlin have a distinct, extreme skew towards the dark and melancholic, indicating the very characteristic moodiness that we so love and miss in these times!

Elsewhere, dark and melancholic moods are still very much present, but the skew towards these two is much less pronounced. Instead, the compilations from the four clubs outside Berlin show a more diverse spread of moods, with no clear skew in any one direction.

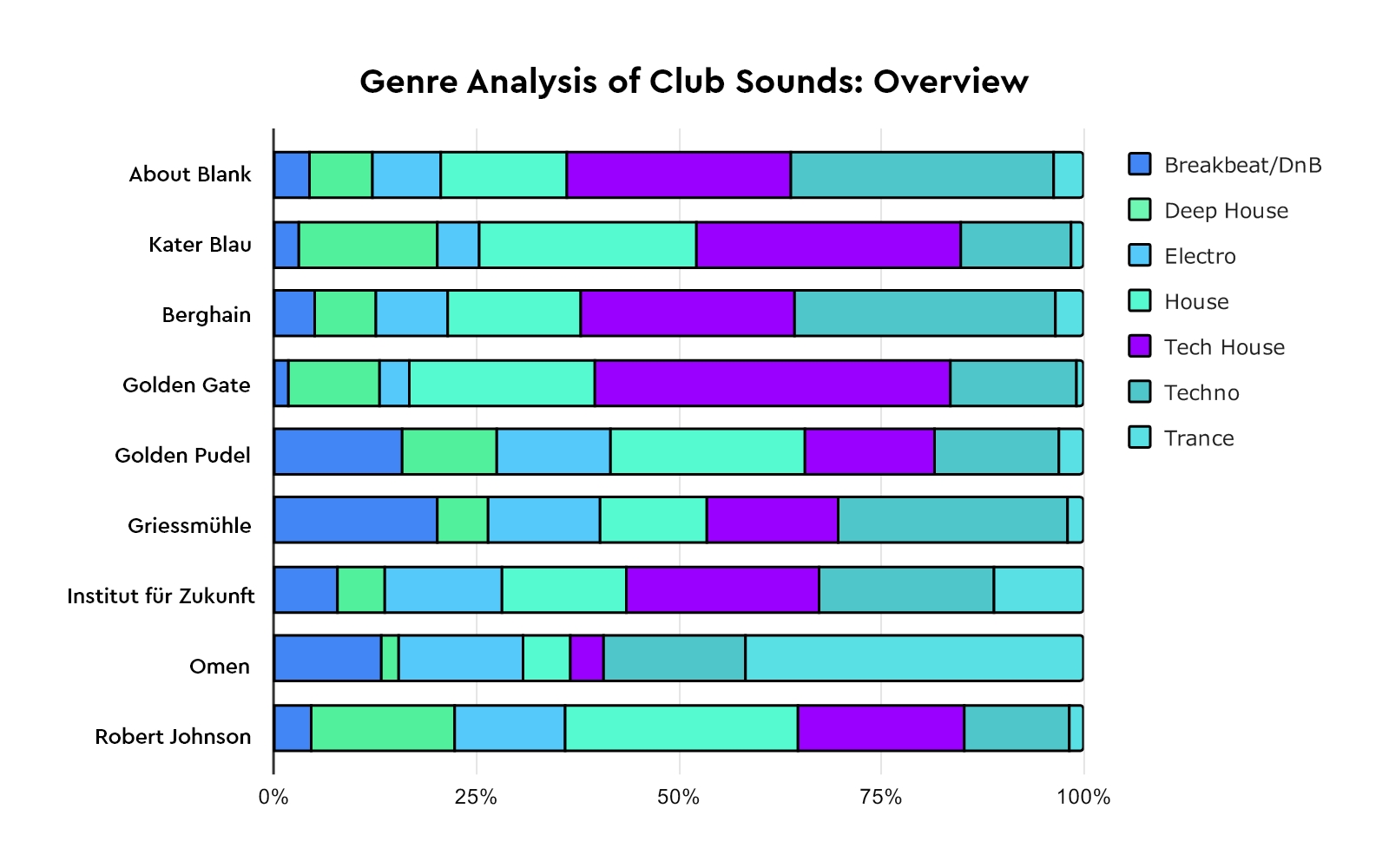

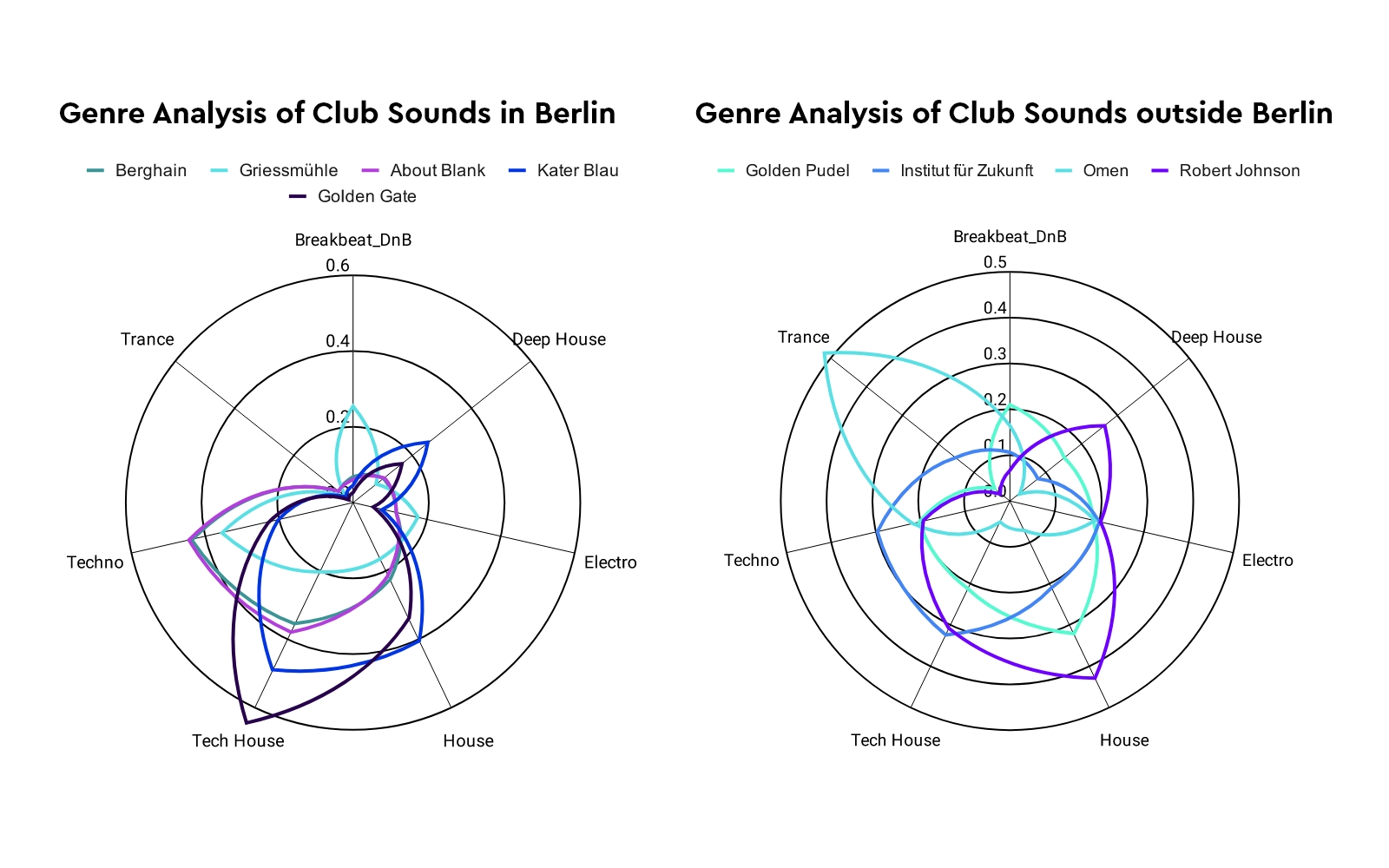

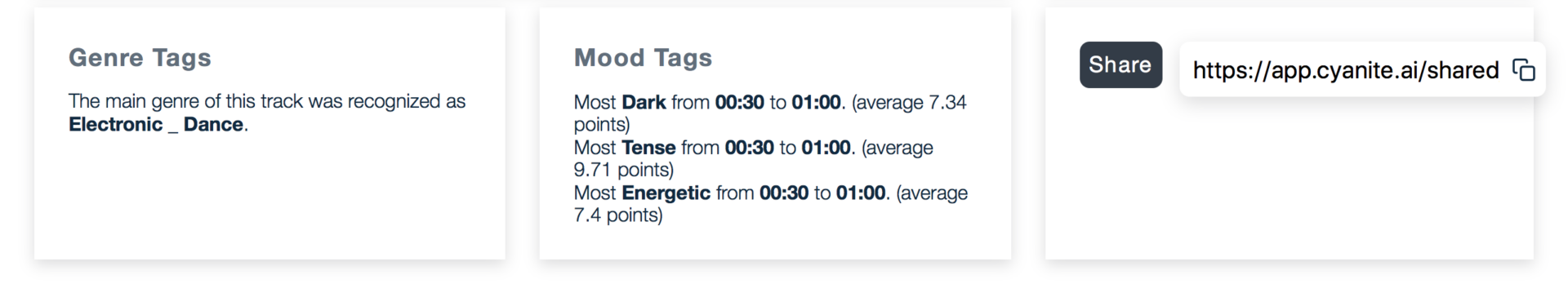

Genre Tagging with our music AI: Some interesting insights

For the Berlin clubs, Cyanite’s analysis of their compilations reveals a strong skew towards Techno and Tech House. The three clubs with the most Techno are About Blank, followed by Berghain and then Griessmühle. For Tech House, the top three are Golden Gate, Kater Blau and About Blank.

Outside Berlin, the club compilations show a more varied mix of genres. Omen has the highest share of Trance, a genre almost entirely absent from the Berlin clubs we studied.

You can listen to some of the compilations we analysed here:

About Blank: :// About Blank (2018), :// About Blank 002 (2017), :// About Blank 004 (2018), :// About Blank 006 (2019) and :// About Blank 007 (2019)

Kater Blau: Katermukke 150 Compilation (2017)

Berghain / Panorama Bar: Ostgut Ton – Zehn (2015)

Golden Gate: Compilation (2012)

Golden Pudel: Operation Pudel (2001)

Omen: Moka DJ Compilation (1996)

Institut für Zukunft: Various 5IVE (2019)

Robert Johnson: Livesaver Compilation 2 (2015) & Livesaver Compilation 3 (2017)

Overall, our quick AI-assisted look at these clubs revealed some interesting patterns. With a larger dataset, it might be possible to quantify the Berlin sound, and perhaps the sounds of other key party cities too.

For more Cyanite content on AI and music, check these out:

Ellen Allien, Roman Flügel and Dub Isotope: An AI Analysis of Techno and Drum ‘n’ Bass
