Cyanite Talks #3 with Josephine Geipel – Music Therapist & Researcher at SRH Heidelberg

For the third part of our interview series #CyaniteTalks we sat down with Josephine Geipel, music therapist and researcher at the SRH University Heidelberg. Josephine’s insights show us the power of music far beyond its use for entertainment and leisure purposes.

Learn more in this interview about the healing effect of music for depressive teenagers and how all of us can actively use music as a tool for emotional and mental stability. Enjoy the read.

Cyanite: Hi Josephine, you are a music therapist and teach at the SRH University of Applied Sciences in Heidelberg. To begin, we would like to ask how you found your way into this profession and how music therapy can be defined.

Josephine Geipel: First of all, thank you very much for the invitation to the interview. My journey to music therapy is actually a very classic one, and most of our students could tell a similar story. I made a lot of music at school, went to a high school with a music focus, and when it came to choosing a profession, I thought that a social job would be nice. I could imagine myself as a pediatric nurse, a midwife, or a special education teacher, and while looking into these options I discovered that you can study music therapy. I thought: how crazy is that? I took a small detour, studied theatre studies and worked in cultural management. But then I realized that I needed direct contact with music again and that I didn’t just want to take care of the administrative part of the music business. Finally, when I studied for the second time, I added a master’s degree in Music Therapy on top.

There are actually only five universities in Germany where you can study music therapy, which is certainly the reason why the subject is not so well known. Music therapy is now also listed among the small disciplines at German universities, a list of subjects considered particularly worthy of support and protection.

We define music therapy in Germany as: the use of music within a therapeutic relationship to restore, maintain and promote mental, spiritual or physical health. What is very important is that this happens within a therapeutic relationship. This distinguishes music therapy from music medicine, which uses music for the same purpose but does not do so within a therapeutic relationship. Instead, a health professional turns on the jukebox and the music plays. There is no exchange with the patient about the effect of the music, and there is no playing of music together. This offers a good demarcation of the two areas, since the terms are often confused.

“We define music therapy in Germany as: the use of music within a therapeutic relationship to restore, maintain and promote mental, spiritual or physical health.”

Cyanite: Is music therapy already an accepted field in medicine or do you still have to fight to justify it?

Josephine: It is actually a very, very old field. Music has been used in medicine for thousands of years, both among indigenous peoples and in advanced ancient civilizations such as that of the ancient Greeks. It is not so firmly anchored here in our Christian culture: illness was long seen as God’s punishment, and music was used to proclaim the word of God. Only since the 17th and 18th centuries has ‘music as a remedy’ been discussed again. That is why it is not yet as deeply rooted in our culture as it is in other parts of the world. Nevertheless, today’s music therapy is present in many guidelines for the inpatient care of patients and is a relevant part of the treatment of psychiatric and psychosomatic illnesses. Psychiatric and psychosomatic clinics are the places where most music therapists work. However, they are also found in acute medical settings and in rehabilitative institutions. In Germany, neurological music therapy, for example, is a growing field where music is used very functionally, e.g. to improve the postural control of stroke patients who have lost certain bodily functions, or with Parkinson’s patients, where rhythm is used to restore motor functions.

Furthermore, I also work practically in the field of neonatology, i.e. with premature and sick newborns and their families. Here, the main aim is to encourage parents to hum and sing for their child in order to strengthen their relationship and promote relaxation. Other areas of application are oncology, palliative care, curative education contexts and the field of community music.

“In many hospitals music therapy is a relevant part of the treatment of psychiatric and psychosomatic illnesses.”

© Photo by George Coletrain – Unsplash

Cyanite: What can music do that other forms of therapy cannot? What makes music so special in therapy?

Josephine: Well, I think the most striking thing is that music therapy is one of the therapy methods that also enables the treatment of non-verbal patient groups who cannot come to psychotherapy. These can be people who, due to a limitation, can no longer understand or produce speech, e.g. after a stroke or because of a disability. Or people who no longer have the strength, e.g. in palliative care at the end of life. For them, music can be a different way of approaching the thoughts and feelings they are dealing with. Or groups of patients who have literally lost their power of speech, e.g. after traumatic experiences, or people with depression and anxiety disorders who find it difficult to talk about their feelings and thoughts, or to put them into words at all.

I am mainly researching music therapy with depressive teenagers. Young people are already going through such a difficult phase of change. The brain is being remodelled, which can lead to slight mood swings; if an illness such as depression is added, they often find it difficult to access, express and regulate their emotions. Active music-making is a great way to express those feelings that cannot be expressed verbally and then find the words to express them. Music is a kind of opener.

Consider the symptoms of depression: people withdraw, have little social contact, a depressed mood, low self-esteem and a low level of activity. Then look closely at what actually happens when I make music with a young person, write a song and then record it: we have a shared activity within a social relationship. Making music is something active, so it increases the level of activity, and music is fun. We make music because we enjoy music. In the case of a depressive mood, it means doing something that is fun and encourages people to open up. Music picks people up quite well, especially young people. There is no age group that listens to and creates music as much as young people.

“Active music-making is a great way to express those feelings that cannot be expressed verbally and then find the words to express them.”

© Photo by Hans Vivek – Unsplash

Cyanite: What is it about music that touches us inside, that can trigger us or bring certain things to light?

Josephine: Well, I am not a neuroscientist who can explain this in detail. But the regulation of mood or one’s own activity level is one of the most important reasons for people to listen to music. Music activates many different areas of the brain that are important for emotional reactions. It directly addresses the limbic system, which is responsible for processing emotions, and the body’s own reward system is activated. Music can therefore cause the release of dopamine and endogenous opioids, reactions similar to those we see with sex or certain drugs. These substances increase our drive, motivation and mood.

Cyanite: Can we then also generalize that certain music triggers a positive mood and a high energy level? Or does it differ from person to person?

Josephine: Well, there are certain musical parameters that cause similarities. Music can trigger certain emotions in us, but there are many, many different mechanisms that can underlie this. Some are universal and some are individual. A universal one would be the mechanism of musical contagion, for example with a song in a very slow tempo and a minor key, perhaps with sad lyrics as well. Through musical contagion, the mood in the music can be transferred to our own mood. Scientists debate whether this really happens via the mirror neurons. Individual mechanisms, by contrast, depend on personal experience. Imagine I have a patient who had a car accident, and therefore a traumatic experience, and during the accident ‘Dancing Queen’ by ABBA was playing. It’s a very positively charged, upbeat song, which most of us would perceive as a happy song and which therefore puts many people in a good mood. However, for the patient who experienced the accident, listening to this exact song could trigger a flashback that could bring them directly back into the difficult emotional state experienced during the accident. Playing this song would then be absolutely contraindicated.

There is no music that works the same for everyone; it depends on the situation you are in, what your current state is, what experiences you have had and so on. There are multiple variables at play, which makes the process highly complex.

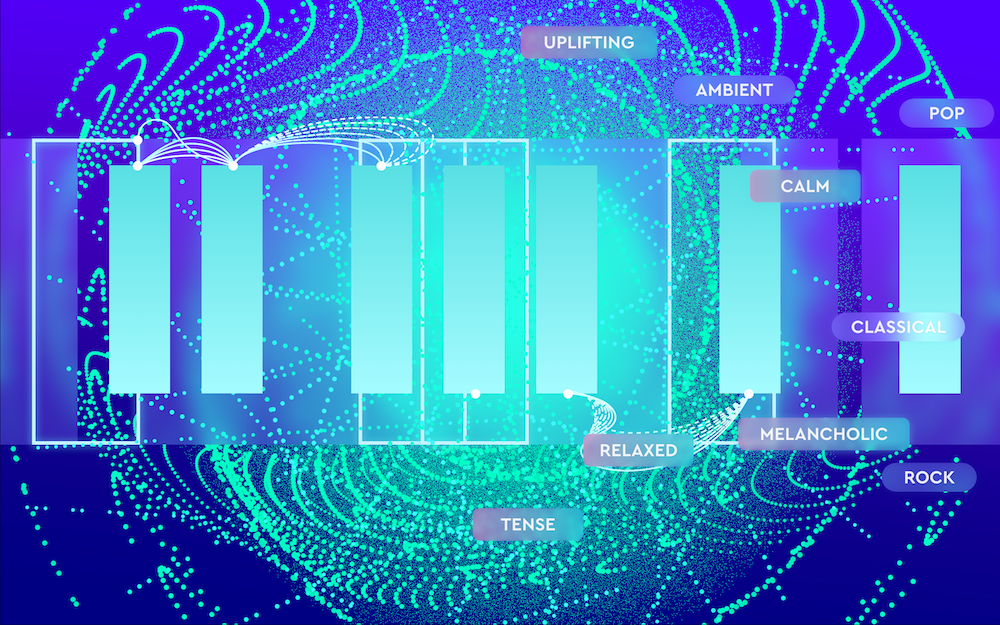

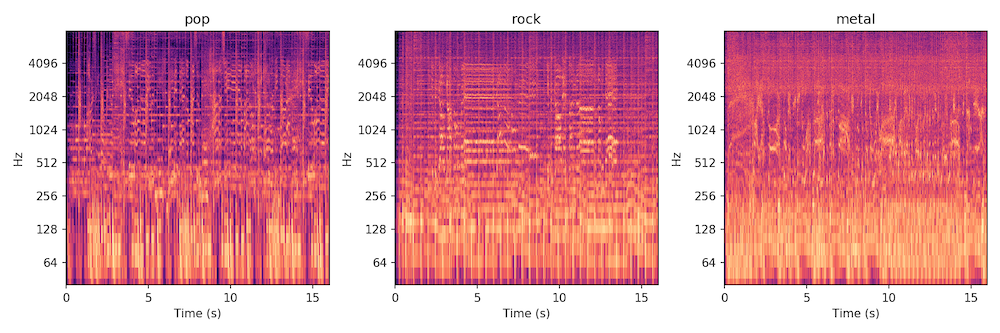

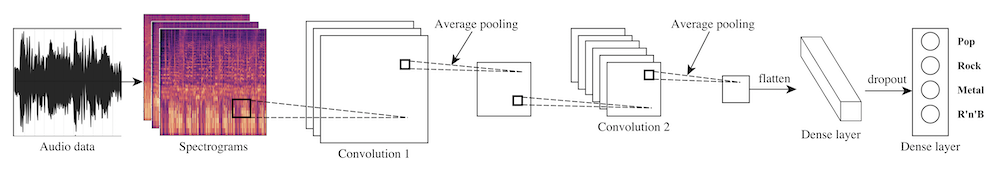

Cyanite: Algorithms try to make exactly such generalizations. To what extent do you come into contact with artificial intelligence in your profession, and where do you see the greatest potential for integrating this technology into music therapy and medical applications?

Josephine: In my practical work as a music therapist I have less contact with artificial intelligence, but of course both my patients and I are influenced by our environment and therefore also by AI. Patients use health monitoring apps with sleep and movement trackers and are reminded by the app: ‘Now is the time to get up to benefit your health’, so we are already in touch with AI. If you look at research projects in the field of psychotherapy, they are also very exciting for the field of music therapy. For example, an embodied AI, such as a robot, can be useful for interactions with elderly people who often suffer from social isolation, or for children with autism to practice social interaction. Apps that are used as virtual therapists can also, for example, chat with people with depression and thus simulate a therapeutic conversation. AI development is not directly affecting my work, but I can see its presence in the fields around me: research projects are also taking place in our sister disciplines of music medicine and psychology.

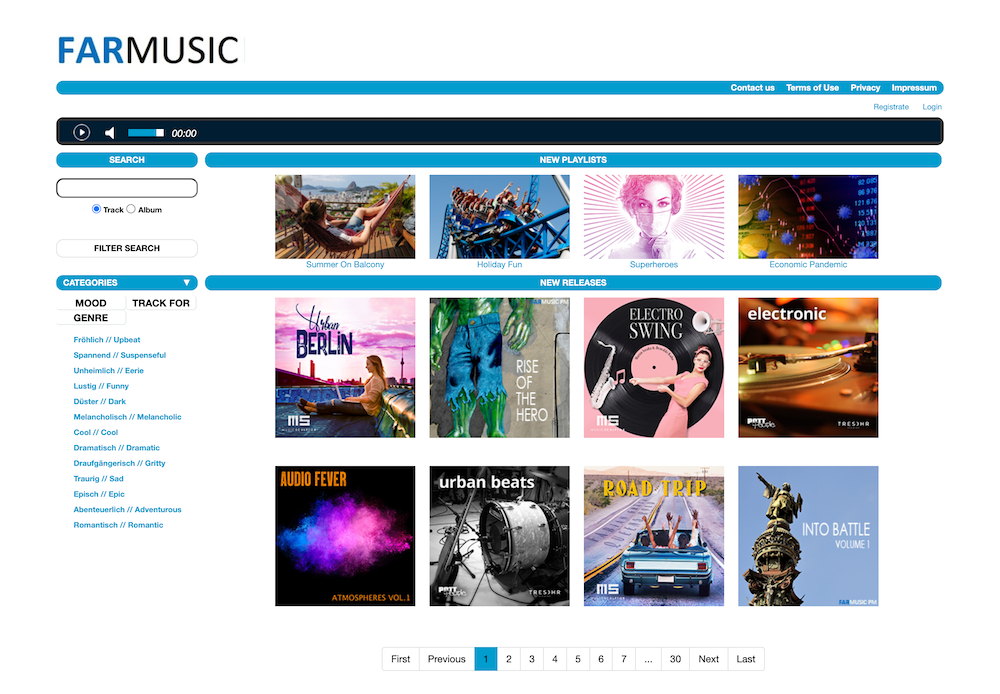

For example, many researchers try to explore the correlation between psychological and physiological parameters and music listening behaviour, which they then analyse and implement in machine learning models. I think that we are still at the beginning, and there is certainly potential that we music therapists should be open to, or at least we should know what is being developed. In the end our patients will use the products that are developed with the help of these research results, so we have to stay informed.

There are very exciting projects. A research group in Finland at the University of Jyväskylä is developing a machine learning model to support the affect regulation of young people through listening to music via an app. This is, of course, a topic I am very much involved with, because I often develop playlists with the young people who are in therapy with me. Not based on AI, but completely human. I also believe that in the long term such apps could be included as a support for music therapy treatment. But as with the use of AI in the diagnosis of cancer, in the end the doctor has the last word. I also think that in music therapy treatment, the music therapist and the patient should participate in the process and have the last word on what is being listened to. Think of the earlier example of the patient with the car accident: the machine would not know the individual case.

Cyanite: From a music therapy point of view, what do you wish for from the developers of modern algorithms?

Josephine: Keep the human being in mind.

From my own dealings with technology I know the enthusiasm of: “Wow, what you can do with it!” I think you just have to be careful not to get carried away and put the machine above the human being. Apart from the fact that the machine does not know the individual case, there are also ethical and social aspects, and social consequences which are not yet foreseeable. We do not yet know how we, humanity, will react to them. The speed at which machine learning is developing is madness. If we look at how slowly evolution proceeds, the question is how quickly we can adapt to these new developments. I think we have to take a good look at this and, despite all the research in the technical field, we must not lose sight of the ethical, social and data protection issues.

© Photo by Fixelgraphy – Unsplash

Cyanite: As a last question: What are your tips for everyday people on how to use music at this moment in time, when isolation, home office, and lockdowns are still realities for a lot of us?

Josephine: Well, I found the balcony music that has taken place in many cities very nice, because it makes a typical music-psychological phenomenon visible: making music together creates a feeling of community, solidarity and cohesion. I find it highly exciting that in such an exceptional situation, we humans intuitively use music functionally as social cement.

For personal listening: pay attention to what you put in your ears! Pay attention to what the music you listen to triggers in you, especially in times when you are not feeling so well. Take care that you do not get into a loop. I often see depressed patients who, when they are not doing so well, listen to songs that reflect the depressed mood they are in. They have to be careful not to get caught up in this and end up in a rumination loop with musical accompaniment.

And for all of us: start where you are right now and make a playlist that gets you out of a bad mood. The first song can be one that picks you up out of a depressed mood. Then think about what kind of mood you want to be in. Search for a song that reflects this mood, put it at the end of the playlist and then gradually fill in the playlist in between.

Thank you, Josephine, for sharing your insight with us and for your valuable contribution to the field of music therapy!

If you are interested in knowing more about music in relation to therapy, psychotherapy and brain functions, here’s a list of recommendations on the topic:

Books:

“This Is Your Brain on Music: The Science of a Human Obsession”

by Daniel J. Levitin

“Good Vibrations”

by Prof. Stefan Kölsch

“Handbook of Music, Adolescents, and Wellbeing”

by Katrina McFerran, Philippa Derrington, and Suvi Saarikallio

Podcasts:

Clinical BOPulations

Instru(mental)

Musical Health

The European Music Therapy Confederation

Deutsche Musiktherapeutische Gesellschaft

Article on Playlists

Music Therapy (M.A.) – SRH Heidelberg
